The minimum maximum cost of steps suggests the use of a minimum spanning tree (MST) on the steps. Once the MST is built, one can use methods such as Kruskal Reconstruction Tree or binary lifting (my preference) to query for path maximums on the MST. This is where the idea of using Boruvka's algorithm first appeared, since someone once told me that Boruvka's is good for MSTs on graphs with many edges; unfortunately, I could not finish the problem using it although the intended solution apparently uses it.

I first coded out the direct MST solution, adding all steps between ancestors and nodes to the graph that would be MST'ed on.

#include "teleport.h"

#include <bits/stdc++.h>

using namespace std;

#define ll long long

#define iloop(m, h) for (auto i = m; i != h; i += (m<h?1:-1))

#define jloop(m, h) for (auto j = m; j != h; j += (m<h?1:-1))

#define kloop(m, h) for (auto k = m; k != h; k += (m<h?1:-1))

#define pll pair<ll, ll>

#define INF 2000000000000000LL

ll n, p[50005], a[50005], x, y, z;

vector<ll> adj[50005];

vector<pair<ll, pll>> ae;

vector<pll> adj2[50005];

ll twok[17][50005], twok2[17][50005], dp[50005], cc;

ll par[50005], sz[50005];

ll find(ll x) {

return (par[x] == x ? x : par[x] = find(par[x]));

}

ll merge(ll x, ll y) {

x = find(x), y = find(y);

if (x == y) return 0;

if (sz[x] < sz[y]) swap(x, y);

par[y] = x;

sz[x] += sz[y];

return 1;

}

void dfs(ll nd, ll pr) {

for (auto it : adj[nd]) if (it != pr) {

a[it] ^= a[nd];

dfs(it, nd);

}

}

void dfs2(ll nd, ll pr) {

twok[0][nd] = pr;

iloop(1, 17) {

if (twok[i-1][nd] == -1) break;

twok[i][nd] = twok[i-1][twok[i-1][nd]];

if (twok[i][nd] != -1) twok2[i][nd] = max(twok2[i-1][nd], twok2[i-1][twok[i-1][nd]]);

}

for (auto it : adj2[nd]) if (it.second != pr) {

dp[it.second] = dp[nd] + 1;

twok2[0][it.second] = it.first;

dfs2(it.second, nd);

}

}

ll lca(ll x, ll y) {

ll cans = 0;

if (dp[x] < dp[y]) swap(x, y);

iloop(16, -1) if (dp[x] - dp[y] >= 1<<i) {cans = max(cans, twok2[i][x]); x = twok[i][x];}

if (x == y) return cans;

iloop(16, -1) if (twok[i][x] != twok[i][y]) {

cans = max({cans, twok2[i][x], twok2[i][y]});

x = twok[i][x], y = twok[i][y];

}

cans = max({cans, twok2[0][x], twok2[0][y]});

return cans;

}

void init(int N, vector<int> P, vector<int> W) {

n = N;

memset(twok, -1, sizeof(twok));

p[0] = -1, a[0] = 0;

iloop(1, n) {

p[i] = P[i], a[i] = W[i];

adj[p[i]].push_back(i);

}

dfs(0, -1);

ae.clear();

iloop(0, n) {

x = p[i];

while (x != -1) {

ae.push_back({a[x] ^ a[i], {x, i}});

x = p[x];

}

}

sort(ae.begin(), ae.end());

cc = 0;

iloop(0, n) par[i] = i, sz[i] = 1;

for (auto it : ae) {

if (merge(it.second.first, it.second.second)) {

cc++;

adj2[it.second.first].push_back({it.first, it.second.second});

adj2[it.second.second].push_back({it.first, it.second.first});

//cout << it.first << " " << it.second.first << " " << it.second.second << endl;

if (cc == n-1) break;

}

}

dfs2(0, -1);

}

int minimum_energy(int U, int V) {

x = U, y = V;

return lca(x, y);

}

This got 20 points In $$$O(N^2\log N)$$$.

Next, I was eyeing the 28 points subtask $$$W \lt 128$$$ along with the $$$9$$$ points from the easier $$$W \le 1$$$. This suggests that having a small number of possible edge weights (and thus path costs) may mean something. Using this, I obtained an observation:

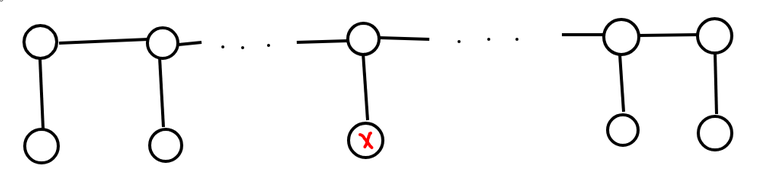

Given a node, if 2 of its ancestors have the same path cost from it, there exists an MST where at most 1 of these steps is included.

This is immediate after noticing from XOR properties that the step cost between the 2 ancestors must be 0.

Using this, I come up with the following algorithm: for each node, find all unique path costs to an ancestor, then include only 1 of each such path cost in the computation of the MST. There are only $$$NW$$$ path costs in the MST computation now, so the time complexity is $$$O(NW\log{NW})$$$. To implement, note that XOR properties allow us to simply store the parents by their prefix XORs from the root. The modified dfs function is as follows, with the part in the main computation function adding edges removed:

vector<ll> prv[130];

void dfs(ll nd, ll pr) {

iloop(0, 128) if (prv[i].size()) ae.push_back({i^a[nd], {prv[i].back(), nd}});

prv[a[nd]].push_back(nd);

for (auto it : adj[nd]) if (it != pr) {

a[it] ^= a[nd];

dfs(it, nd);

}

prv[a[nd]].pop_back();

}

This motivates the idea that not all edges may be useful. After deliberating on this thought, I obtain a stronger version of the previous observation that can be used to solve the problem:

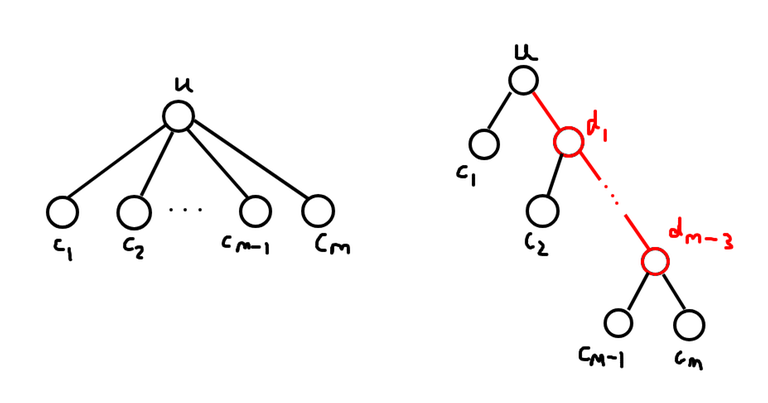

Given a node, if the path costs to 2 of its ancestors have the same most significant bit, there is an MST solution with at most 1 of these steps. In particular, the step with the larger cost should not be needed.

This is immediate after noticing that the path costs between the 2 ancestors must, by XOR properties, have a smaller most significant bit and thus smaller value.

If we only include steps from nodes to their ancestors which, out of all other such steps sharing the same most significant bit, is minimal, there will be only $$$O(N\log W)$$$ edges to check for in the MST. This should be fast enough.

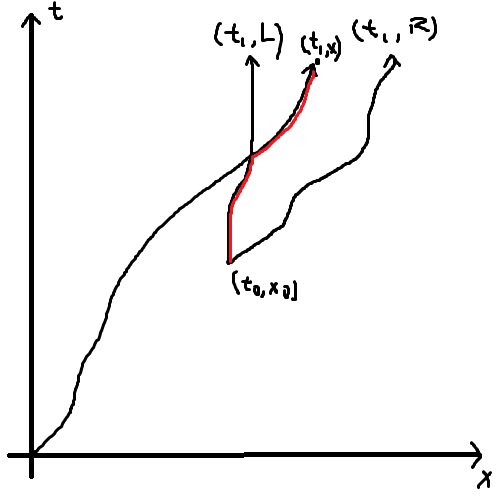

To pull this off, I use a binary trie to store all values of the prefix XORs from the root. At every node, the code checks through the binary trie to find the minimal value for each most significant bit, as well as which node it is from. This is done as such:

- If the current traversal has deviated from the prefix XOR to the node, the most significant bit has been passed and thus it is optimal to minimise the XOR, i.e. match the bits in the prefix XOR to the node whenever possible.

- If the current traversal perfectly matches the prefix XOR to the node, visit both children in the trie.

This gets all $$$O(\log W)$$$ ancestors. After that, just run the MST as per usual.

The code with the trie and the modified dfs function is as follows. The gt flag in the trav (traversal) function in the trie is 1 if the path has deviated from the prefix XOR to the node and 0 otherwise.

vector<ll> useful;

struct node {

node *l, *r;

vector<ll> vs;

ll cn = 0, dpt;

node (ll dpn) {

dpt = dpn;

if (dpt == g) return;

l = new node(dpn + 1);

r = new node(dpn + 1);

}

void add(ll X, ll V) {

cn++;

if (dpt == g) {

vs.push_back(V);

return;

}

if (X%2 == 0) l->add(X>>1, V);

else r->add(X>>1, V);

}

void rm(ll X) {

cn--;

if (dpt == g) {

vs.pop_back();

return;

}

if (X%2 == 0) l->rm(X>>1);

else r->rm(X>>1);

}

void trav(ll V, bool gt) {

if (cn == 0) return;

if (dpt == g) {

useful.push_back(vs.back());

return;

}

if (gt) {

if (V%2 == 0) {

if (l->cn) l->trav(V>>1, 1);

else r->trav(V>>1, 1);

}

if (V%2 == 1) {

if (r->cn) r->trav(V>>1, 1);

else l->trav(V>>1, 1);

}

return;

}

if (V%2 == 0) {

l->trav(V>>1, 0);

r->trav(V>>1, 1);

}

else {

l->trav(V>>1, 1);

r->trav(V>>1, 0);

}

}

} *root;

void dfs(ll nd, ll pr) {

useful.clear();

ll cv = 0, ctm = a[nd];

iloop(0, g) {cv = cv*2 + (ctm%2); ctm >>= 1;}

root->trav(cv, 0);

//for (auto it : useful) cout << nd << ":" << it << endl;

for (auto it : useful) ae.push_back({a[it]^a[nd], {it, nd}});

root->add(cv, nd);

for (auto it : adj[nd]) if (it != pr) {

a[it] ^= a[nd];

dfs(it, nd);

}

root->rm(cv);

}

This runs in $$$O(N\log{W}\log{N\log{w}})$$$.