Hello there, welcome to my first Codeforces blog post! Today I’d like to share an educational — and frankly a fun observation — on optimizing the process of finding the square root of large integers using binary search. This may not be something you’d use in your everyday code (after all the built-in sqrtll() is hard to beat in speed), but it’s a neat mathematical trick that can literally save you seconds when processing many numbers.

Before we dive in, a huge thanks to chromate00. An answer of mine getting hacked in one of his contests sparked this discovery.

Aim

In a typical binary search algorithm for computing the integer square root of a number S, we initialize the search range with:

low = 0high = S

This approach finds the square root in O(log(S)) iterations. But if we have to compute square roots for too many numbers (like $$$10^8$$$), it can become laggy. However, if we can choose low and high more intelligently based on the structure of S, we can reduce the search range even further, which can be a significant improvement for very large numbers.

Code

Here is the optimized version of finding the integer square root for some integer val:

Concepts Needed (the Geeky Part)

Exponential Representation:

Any numberxcan be written as:

x = $$$e^{\ln(x)}$$$ (Or, equivalently, x = $$$2^{\log_2(x)}$$$)Floor and Ceiling:

We have:

$$$\text{floor}(x)$$$ $$$\leq$$$ $$$x$$$ $$$\leq$$$ $$$\text{ceil}(x)$$$Bit Shifting and Powers of 2:

For valid integers, $$$2^x$$$ is equivalent to1 << x.Integer and Fractional Parts:

Every real number on the number linexcan be decomposed as:

x=[x]+{x}where[x]is the integer part and{x}is the positive fractional part.Scaling the Fraction: Dividing the fractional part by a constant

k(greater than or equal to 1) is equivalent to{x}/k={x/k}

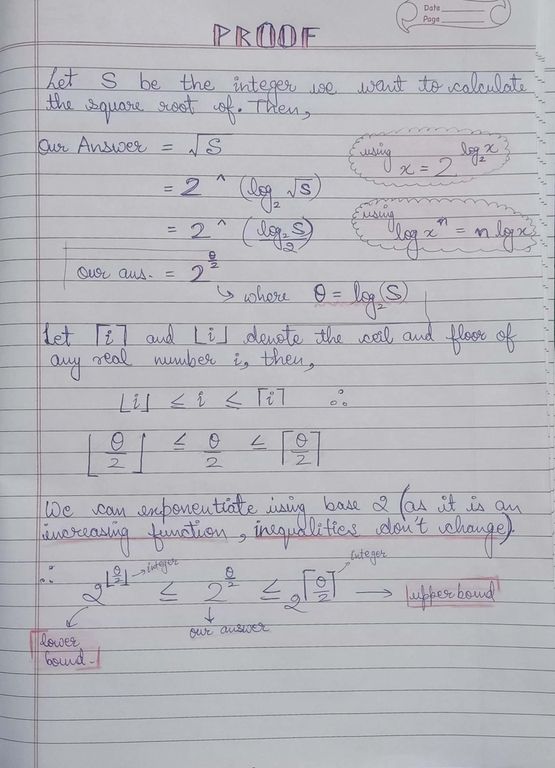

The Proof

Let’s denote: - $$$\text{S}$$$ as our input number, - $$$\text{A}$$$ as its square root, so $$$\text{A}$$$ = $$$\sqrt{S}$$$

Since any number can be expressed using logarithms, we have:

$$$\text{A}$$$ = $$$\sqrt{S}$$$ = $$$2^{\log_2(\sqrt{S})}$$$

Because we are interested in the integer part of $$$\text{A}$$$ (let’s call it $$$\text{P}$$$), by applying floor/ceiling properties we get:

$$$2^{\text{floor}(\log_2(\sqrt{S}))}$$$ $$$\leq$$$ $$$\text{P}$$$ $$$\leq$$$ $$$2^{\text{ceil}(\log_2(\sqrt{S}))}$$$

Using the equivalence with bit shifting, we can rewrite these bounds as:

$$$\text{(1}$$$ << $$$\text{floor}(\log_2(\sqrt{S}))$$$) $$$\leq$$$ $$$\text{P}$$$ $$$\leq$$$ $$$\text{(1}$$$ << $$$\text{ceil}(\log_2(\sqrt{S})))$$$

Now, here’s the key insight:

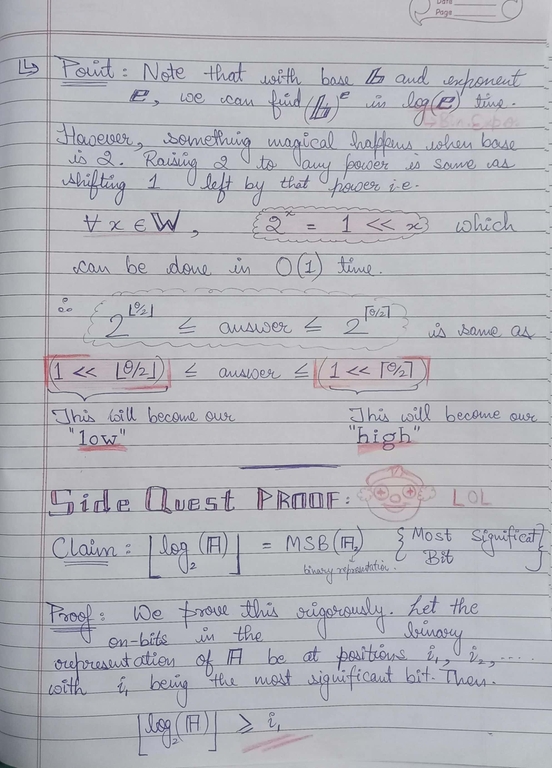

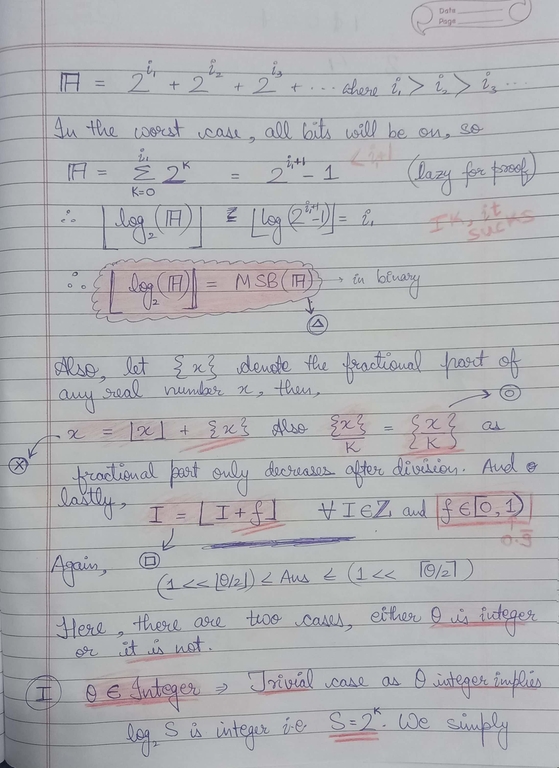

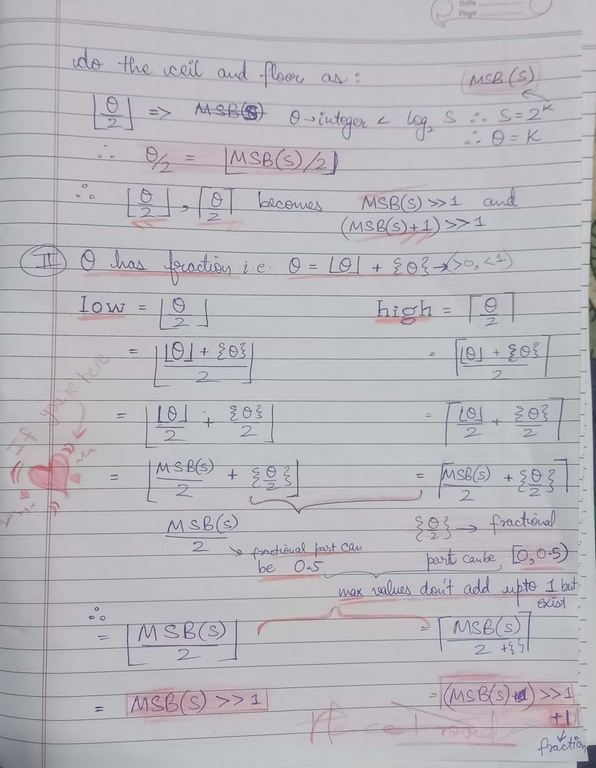

When $$$\text{S}$$$ is represented in binary, the floor of $$$\log_2(S)$$$ corresponds to the index of the most significant set bit $$$\text{(MSB)}$$$. Now, $$$\text{MSB}(x)$$$ can be found as follows:

$$$\text{MSB}(S)$$$ = $$$63 -$$$ __builtin_clzll(S)

then we can estimate:

- Lower bound:

$$$\text{P}$$$ $$$\geq$$$ $$$\text{1}$$$ << $$$floor(\left(\text{MSB}(S) \, \text{divided by } 2\right))$$$

(Using bit-shift: $$$\text{1}$$$ << $$$(\text{MSB}(S)$$$ >> $$$\text{1})$$$

- Upper bound:

$$$\text{P}$$$ $$$\leq$$$ $$$\text{1}$$$ << $$$floor(\left(\text{MSB}(S) \, \text{divided by } 2\right)) + 1$$$

(Using bit-shift: $$$\text{1}$$$ << $$$((\text{MSB}(S)$$$ >> $$$\text{1}) + 1)$$$

These bounds are computed in O(1) time and reduce the range for binary search from $$$[0, S]$$$ to a much smaller interval around the actual square root.

Results

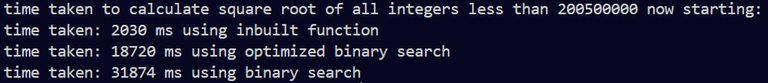

I benchmarked the square root calculation for all integers less than $$$2 \times 10^8$$$:

Standard binary search (with range 0 to S): 29 seconds

Optimized binary search (with our computed bounds): 12 seconds

Built-in function (

sqrtll()): 5 seconds

While the built-in function remains the fastest (thanks to hardware acceleration), the optimized binary search is significantly faster than the standard approach. Here is the time duration:

Hell, you run this on your machine and see the difference:

P.S.

I realize that the proof might be a bit dense at first glance — so I’ve attached a detailed and easy-to-understand handwritten version of proof that for sure WILL help clarify the details further. If you find any flaw in this observation, please do let me know. Up to $$$2 \times 10^8$$$, there is no noticeable precision error—even when testing edge cases like Int64_max.

Final Thoughts

While this optimized approach isn’t likely to replace the built-in functions in production code, it’s a fun and educational exercise that showcases how a deep understanding of binary representations and bitwise operations can lead to practical performance improvements. If you’re interested in algorithm optimization or just love geeky math, I hope you found this exploration as exciting as I did.

Happy coding and keep optimizing!

Feel free to leave your comments, suggestions, or critiques below. I’d love to hear your thoughts and any further optimizations you might suggest!