Preface

Recently, numerous methods, techniques, and suggestions have been proposed to combat cheaters, providing short-term positive results. However, in the long run, cheaters become smarter and inevitably find ways to circumvent protections and continue their deceptive activities on the platform.

Why Common Methods Fail in the Long Term

Anti-Plagiarism and Timings

Code rapidly generated by Large Language Models (LLMs) often appears human-like due to standardized coding styles, predefined function templates, abbreviated variable names, etc. Additionally, appropriate delays between submissions can be easily simulated by monitoring the timing of other participants.

SMS Verification

Online services offering disposable phone numbers or additional virtual numbers provided by telecom operators render SMS verification largely ineffective. Anyone active on platforms like CodeForces or having even a minimal interest in bypassing verification knows about inexpensive online services providing temporary SMS activation or voice verification. Such services cost mere pennies, allowing number rentals for specific periods or the issuance of additional numbers linked to a primary SIM card (e.g., as offered by some Russian operators like MegaFon for a negligible daily fee). Thus, telephone-based verification is currently insufficient to deter determined cheaters.

Strict Bans

A user losing rating early on can simply create a new account, resetting the cycle. Those with established high ratings, familiar with key detection metrics, will easily evade bans by staying cautious.

Main Idea – Don't Ban, Conceal!

The idea of introducing a reporting system has been suggested previously, but I want to emphasize it further. Let's implement a delayed reporting system and likes from trusted users (elo rating >2000), influencing a suspect’s trust factor. Rather than banning a user for suspicious activity during a round—thus indicating exactly where and how they slipped—we should let them believe they've successfully evaded detection again. Meanwhile, their account receives a hidden mark, and all subsequent competitions involving that user are segregated from the main participant pool. The cheater continues to see their supposed rating and rank, although their scores are excluded from the main leaderboard calculations affecting honest competitors. Suspect participants are shifted into a "hidden pool," competing solely against each other.

Trusted Reporting System

Who can report?

- Participants with a rating above 2000

- Users significantly contributing to the platform’s community

- Trusted participants and coordinators

Report Form:

- Suspicion of AI usage? [Yes/No]

- Key anomalies (timings, coding style, atypical solutions)

- Brief comment

Weighting of Reports:

Reporters are assigned a "weight" based on their current rating, registration date, community contribution, and past report accuracy. The higher these metrics, the greater the credibility assigned. Consequently, reports against highly trusted users don't significantly impact their reputation unless they are filed or corroborated by equally trusted members.
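As a rough illustration, the weighting could be sketched as a weighted average of normalized metrics. The field names, the caps, and the coefficients below are illustrative assumptions, not part of the proposal.

```python
from dataclasses import dataclass


@dataclass
class Reporter:
    rating: int              # current rating
    account_age_days: int    # days since registration
    contribution: int        # community contribution score
    reports_total: int       # reports filed so far
    reports_confirmed: int   # reports later confirmed by moderators


def report_weight(r: Reporter) -> float:
    """Return a credibility weight in [0, 1]; every coefficient is an assumption."""
    rating_part = min(max(r.rating - 2000, 0) / 1000, 1.0)
    age_part = min(r.account_age_days / 1095, 1.0)  # capped at about three years
    contrib_part = min(max(r.contribution, 0) / 100, 1.0)
    # Laplace-smoothed accuracy, so a reporter with no history starts at 0.5
    accuracy_part = (r.reports_confirmed + 1) / (r.reports_total + 2)
    return (0.3 * rating_part + 0.2 * age_part
            + 0.1 * contrib_part + 0.4 * accuracy_part)
```

Giving past accuracy the largest coefficient means that a reporter who files many false reports quickly loses influence, regardless of rating.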

Likes and Reputation System

- After a round is complete, likes can be given by any participant who has been registered for at least seven days and has a valid rating.

- Code evaluation: readability, clarity, presence, and comprehensiveness of comments.

Hidden Pool Mechanics

flowchart LR

A[Participant] --> B[Competition]

B --> C{Data Collection}

C --> D[Trusted Reports]

C --> E[Likes/Dislikes]

C --> F[Telemetry]

D & E & F --> G[Bayesian Model]

G --> H{TF ≥ 0.5?}

H -- Yes --> I[Hidden Pool]

H -- No --> J[Main Pool]

I --> K[Visualization (visible only to cheater)]

J --> L[Real Results]

- Reports and historical data collected before the contest.

- Telemetry gathered during the contest: coding time, submission frequency, page navigation.

- Post-contest Bayesian probabilistic model makes the final decision regarding pool assignment.
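One way to picture the post-contest decision is a naive Bayes update in log-odds form. This is only a sketch: the prior, the likelihood ratios, and the function names are hypothetical, and it assumes the evidence sources are independent. Following the flowchart, TF is read here as the posterior probability that the account is cheating, so TF ≥ 0.5 sends the account to the hidden pool.

```python
import math
from typing import Iterable


def posterior_suspicion(prior: float, likelihood_ratios: Iterable[float]) -> float:
    """Combine independent evidence with Bayes' rule in log-odds form.

    Each ratio is P(evidence | cheater) / P(evidence | honest) and is an
    assumed input; the proposal does not say how it would be estimated.
    """
    log_odds = math.log(prior / (1.0 - prior))
    for ratio in likelihood_ratios:
        log_odds += math.log(ratio)
    return 1.0 / (1.0 + math.exp(-log_odds))


def assign_pool(tf: float, threshold: float = 0.5) -> str:
    """Apply the flowchart's TF >= 0.5 rule."""
    return "hidden" if tf >= threshold else "main"
```

With a 5% prior, three moderately suspicious signals (ratios 4, 3, and 2) push the posterior to roughly 0.56, which crosses the threshold. A single strong signal offset by favorable likes (ratios 4 and 0.5) leaves it near 0.10.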

UI/UX for "Hidden" vs. "Honest" Participants

Honest participants see their usual rankings and are unaware that any participants carry hidden attributes.

Hidden participants see fake ratings and rankings in the general leaderboard.

Moderators have access to dynamic trust-factor graphs, detailed telemetry logs and suspect profiles.
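A minimal sketch of the two leaderboard views, assuming each contest result carries a boolean hidden mark. The `Result` type, the function names, and the choice to show a hidden viewer the honest standings plus only their own row are illustrative assumptions; the proposal does not specify how the fake standings are built.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Result:
    handle: str
    score: int
    hidden: bool  # True if the account carries the hidden mark


def main_leaderboard(results: List[Result]) -> List[str]:
    """Standings shown to honest participants: hidden accounts are excluded."""
    visible = [r for r in results if not r.hidden]
    return [r.handle for r in sorted(visible, key=lambda r: -r.score)]


def view_for(handle: str, results: List[Result]) -> List[str]:
    """Standings as seen by the participant `handle`."""
    viewer = next(r for r in results if r.handle == handle)
    if not viewer.hidden:
        return main_leaderboard(results)
    # Assumption: a hidden viewer sees the honest standings plus their own row
    shown = [r for r in results if not r.hidden or r.handle == handle]
    return [r.handle for r in sorted(shown, key=lambda r: -r.score)]
```

Because honest standings are computed only from unmarked accounts, a hidden account's score never shifts anyone else's rank.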

Automated Trust Factor Calculation

The trust factor increases or decreases after each contest based on reputation over a specified interval or is adjusted following manual moderator review.

What are your thoughts on this idea? Share your comments below.

Don't take this comment the wrong way, I think that your ideas are really creative and have great potential. But I do want to share some of my thoughts on improving this.

Firstly, if you conceal who the cheaters are, there will also be no way for people to tell who is a cheater. Many cheaters cheat to get job offers or bragging rights, and this doesn't prevent that.

Secondly, "readability, clarity, presence, and comprehensiveness of comments" are things that AI does very well while humans don't (especially when trying to speedrun rounds), so they shouldn't be used in a likes and reputation system. Codeforces already has a reputation system in contribution.

Contribution is not the same as reputation: contribution only goes up or down when you write a blog post or reply to comments, nothing else. Rating, on the other hand, would reflect honesty in solving contests, which would be regulated by reports from other members.

bro what the hell are you talking about. How hypocrite can you become. Firstly take a look at your own skipped solution before giving knowledge to others. Talking about so much take a look at your own stuff and don't speak nonsense cheater

never cook again (aka stfu)

you know what buddy i can also abuse like you but i rather not because if i did things will escalate real quick

learn english ;-;

Don't worry, I speak facts in every language — even the ones your brain can't process :)

skipped solution isn't always because of cheating dumbass

Check this comment

You are the one who is speaking nonsense here. His submissions are skipped not because he cheated, but because he has a more recent Accepted submission in contest.

If exposing double standards sounds like 'nonsense' to you, maybe the only thing grand about you is your insecurity.

Sir you are not really getting the point. If you cheat in a contest, all your submissions from that contest get skipped. Also, if u submit >= two AC codes to the same problem during the contest, all except the last submission to that particular problem get skipped.

Thus getting skipped on a problem doesn't necessarily imply cheating, I hope u get it.

If you submit another AC solution to a problem you have already passed in a contest, your previous AC submission is skipped during system testing to avoid wasting judge time.

Next time learn more about codeforces before you comment.

Which submission are you talking about?

Why you mad, bro? How come you used Python for all the tasks, but solved the last one in C++? Talk about hypocrisy :)

The submission is skipped because i submitted another solution. Maybe study up on how Codeforces works first before making accusations

Can everyone just ignore this cheater instead of wasting your time arguing with someone who doesn't know English or coding?

Funny how you're so desperate for attention that you keep replying to someone you claim to ignore. If I don’t know English or coding, what does that say about you wasting time arguing with me? Maybe focus less on labels and more on logic; it might help your next contest

maybe try not cheating, it might help your next contest

yeah i did and i solved 3 in the next contest

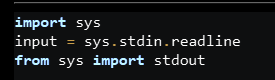

python for the first 2 problems and C++ for the last is weird. Also, using stdin for A then input for B is another sign that u could be cheating

which idiot would use this abomination of 3 lines(that was completely unnecessary):

just because i wrote first 2 in python and other in cpp means i am a cheater great. Good job

Why is the second to last bullet point in Russian?

Translation: A hidden participant unsuspectingly sees his rating and place in the overall top (google translate said "illusionically" but that isn't even a word)

Thank you, I've already edited it

That's genius, and it solves the problem for Codeforces' purposes at least... Also, don't you think some cheater would get access to reporting somehow and report innocent people? What I mean is that the number of people with access to reporting should be minimal to avoid such scenarios... which your condition of a 2000+ rating and some contribution doesn't guarantee. Or open it to everybody (or at least pupil and above), as the idea was, recording the reported username to avoid repeated reports on the same user... (a set, haha) and get a huge no-life mod team to inspect all of them :)

In my post, I mentioned a criterion called the "weight" of a report, which also depends on the number of false reports made recently. Besides, how many cheaters do you know who've reached and maintained a rating of 2000+? This minimum rating threshold is just an example and could be set even higher. In any case, rating isn't the only factor determining the "weight" of a report.

There are actually more 2000+ cheaters than you would expect because LLMs are so powerful now

I don't even know what to say. I mostly only see gray, green, and turquoise nicknames getting banned right in the round, plus those who manage to get away with it

Are you talking about yourself?

I just got an additional idea!

Make a system that tracks unusual rating growth for a user. It could be +300 or more in 3 contests, or idk (I'm not familiar with what counts as unusual)
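A quick sketch of what such a check might look like, assuming a sliding window over per-contest rating deltas. The function name and the defaults (a window of 3 contests and a threshold of 300) are hypothetical and only mirror the rough numbers in this comment.

```python
from typing import Sequence


def has_unusual_growth(deltas: Sequence[int], window: int = 3,
                       threshold: int = 300) -> bool:
    """Return True if any `window` consecutive rating deltas sum to at least `threshold`."""
    return any(
        sum(deltas[i:i + window]) >= threshold
        for i in range(len(deltas) - window + 1)
    )
```

A check like this would also flag people who simply improve fast, so it could only serve as one signal among several.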

Unusual growth is pretty hard to define. I had been practicing USACO for several months before I started seriously doing CF. As a result my initial growth was really fast (several ~200 delta contests in a row), though I can assure you I didn't cheat

(at this point I'm putting all my suggestions here ;-;)

So another idea is making the problem statements pdf... because pdf kinda messes up the text format and as a comment i saw before said "even AI can be confused"... I personally experienced the horrific text format on copy-paste of PDF files.

But still these are hopeless suggestions i thought of at 3:00AM :)

You can take screenshots and feed it to the AI. The AI is even better now at reading text from screenshots.

Why can't the reputation-based system be built as an external website?

Most cheaters cheat to get job offers and/or flex, both of which are destroyed the moment evidence of cheating is made public and easily accessible to recruiters and their peers.

If enough good people endorse it then companies too will start taking cheating allegations posted there seriously before hiring.

Because we're now talking about a localized issue specific to this platform, where introducing an internal rating and trust-factor system would dramatically impact the cheating situation overall. Besides, what kind of companies hire employees based solely on their CodeForces account without conducting their own interviews or coding tests? If a candidate demonstrates incompetence or performs at a lower level compared to their CodeForces rating when solving tasks provided by the recruiter, it would simply prove that their rating was obtained dishonestly.

Probably the best solution I've seen on this platform. It's like a "second sort". I think trusted users should be people who actively wrote rounds before the advent of AI, and showed good results(Not newbies, for example)

Did I solve the problem?

I think this is a great solution. If you give feedback that is too high signal, cheating will never become detectable the same way spraying too much antibacterial just leaves the bacteria growing stronger and stronger.

Instead, you can assign each participant a "honesty" value -- crucially, though, rating changes should reflect this. Participants with low honesty values should see smaller gains and bigger losses.

Also, the systems should be largely automatic. I assume the reason many ideas have been floated and none have been implemented by Mike (he really could implement these quickly, if he thought they were big problems) is because they take work over time to sustain. So however you design your anti cheat method, it should be largely automated (otherwise it will never happen.)

Yes! Your ideas will work...!

Interesting and original idea! The problem I see here is that a cheater can create a second (honest) account and, after logging into it (or even without logging in at all), see that "Oops, my other account is not in the general leaderboard where it should be; I must have been detected".

There are however other ways that such reputation system could make life worse for those with low score, like longer queue times. Unfortunately, such annoyances wouldn't take the main incentive, aka rating increase, away.

I understand what you mean. However, it largely depends on how far he has advanced in the ratings. If his rating is high, the cheater will probably be puzzled and stay on his account since switching to another account and starting from scratch would become problematic for him, proportionally significant to the amount of time he has already invested into boosting his account. He would still be unaware of the specific reason why he ended up in the "hidden pool." Nevertheless, moderators on the platform will likely catch him cheating faster and eventually ban him. Again, if he creates a new account and becomes fully convinced that his participation is not helping him rise in the rankings, he will either leave the platform altogether—assuming his goal was purely achieving a high rating and dominating honest players—or simply won't pay much attention to it. Either way, his former motivation will simply disappear; that's exactly the intention.

I still believe that right now there is a significant lack of a system to control multi-accounting. Although such a rule apparently exists, it's currently not enforced at all, and users can register even ten accounts from a single IP address without any repercussions, despite MikeMirzayanov explicitly stating in one of his posts that having multiple accounts violates the platform’s policies. Then why haven't they implemented adequate countermeasures against those who violate this rule?

https://mirror.codeforces.com/blog/entry/124418#:~:text=Creating%20and%20using%20additional%20accounts,a%20company%2C%20etc.).

Since you deleted your other topic to hide my comment, I'll just repeat everything here: You're a cheater yourself, so what is your motive here for posting this?

Evidence: 311116185

Dehashed code by ChatGPT: https://chatgpt.com/share/6810bde2-3cf8-8006-958b-7eddf77ca7ce

Don't bother deleting this one, I'll just create a topic in that case.

yeah, even just after looking at his profile I was damn sure that he is a pro cheater doing some nice try diddy thing !

xd, cool evidence :)

Can I ask how hashing code is useful?

Not really. When I first registered on the platform, I didn't know all its rules at the time, including the fact that you can't send encrypted code. My motives at the time were to test the platform, I was interested to see how the coordinators and other participants responded. But on the other hand, I don't see this violation as an advantage over other participants. Besides, it happened only once in my very first contest and never happened again.

tibinyte2006 this profile is so suspicious, check it

first of all it looks like you're also one of them who're using AI during contest. The code template is different for you in every contest. And in 1 month you became Master. Haha

You're getting compiler error-

Reason- Why I'm pretty sure? you copied from gpt, add your template try to edit code with macros-> hit submit gets compile error.

nice try Diddy!

On the hidden/honest sorting: a cheater will try to view his ranking from an alt account, and he can find out that his account is hidden in the rankings.

My idea is to give rated contest access only to trusted accounts. Now the question is: how do you verify trustworthiness? Appoint some trust checkers who will check each account's trustworthiness over a period of $$$X$$$ contests and grant rated contest access accordingly; $$$X$$$ will increase or decrease over time. For example:

For the first $$$X$$$ contests, no rated contest access

If anything suspicious is found for the first time, no access is given

After $$$X$$$ more contests, trustworthiness is rechecked and a new access decision is made

Note: a second instance of suspicious activity leads to a permanent ban

Rules for changing $$$X$$$: there are 4 states. The bit on the left is the rated access state in the previous stage and the bit on the right is the new state, where 0 means no access and 1 means access is given:

0 0 : X+=Z (if not banned for 2nd time suspicion)

0 1 : X=A(where A $$$\le$$$ X)

1 0 : X+=Z

1 1 : X=min(X+B,H)
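The four rules above can be sketched as a small update function. This is only an illustration: the function and parameter names are hypothetical, the constants Z, A, B, and H are left to the caller, and the "0 1" case uses `min(a, x)` to enforce the stated constraint A ≤ X. The permanent ban on a second suspicion is not modeled.

```python
def next_review_period(x: int, prev_access: bool, new_access: bool,
                       z: int, a: int, b: int, h: int) -> int:
    """Return the updated X (contests until the next trust check)."""
    if not new_access:
        # States "0 0" and "1 0": access denied, so the period grows by Z
        return x + z
    if not prev_access:
        # State "0 1": access newly granted, X is reset to A (with A <= X)
        return min(a, x)
    # State "1 1": access kept, X grows by B but is capped at H
    return min(x + b, h)
```

For example, with X = 10, Z = 5, A = 4, B = 2, and H = 12, the four states yield 15, 4, 15, and 12 respectively.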

No need for these things. Codeforces just needs to block text copying and screenshots. It will probably drastically reduce cheating

That is not possible within a browser, it would require a lockdown app

Most of the discussion regarding AI cheating revolves around direct code generation, but what about asking for the idea and implementing it yourself? The actual challenge competitive programming imposes is not about code implementation, it's about the underlying, abstract ideas behind it.

How can you prevent users from asking an LLM for an idea or an approach? How can you detect such form of cheating? I don't see how it's possible without utilizing very invasive methods such as webcam monitoring which are obviously out of the question.

Personally I no longer feel it is reasonable to dedicate the time and effort involved in competing and trying to climb up the ladder while the solution for a problem could be one simple prompt away from me and the thousands of random people I compete against. This might render online competitive programming simply a thing of the past.

I still enjoy solving the problems in an offline setting and think the platform still holds great value even when stripping away the rating and competitions system completely. However, to be frank and respectfully, posts like that strike me as a bit of a cope and failing to acknowledge how the death of free and publicly open online competitive programming platforms is imminent.

Assuming online competitive programming will simply disappear is such unbelievable pessimism imo. First you are assuming the amount of cheaters will be overwhelming to the point of TRULY making it pointless to take part in online competitions.

And, the most absurd to me, you are assuming that a community of 15+ years full of brilliant people will not be able to find good enough methods to stop cheaters.

I don't know, comments like these just sound extremely alarmist to me.

It might not completely disappear but unless very drastic measures are taken and fundamental changes are made to the platforms it will certainly suffer from major loss of quality and trust.

It's not really about the actual amount of cheaters. It's about the mere fact that the actual amount of cheaters remains a mystery. In order for a participant to take their rating or placing within a certain contest seriously and ascribe value and meaning to it (which is what makes competitive systems worthy in the first place), in any competitive platform, they have to have some sort of assurance that cheating opponents are at the very least a rare phenomenon even if they exist. When cheating behavior is obvious we can at least find peace in the fact that it is detectable and thus punishable. However, I can't wholeheartedly compete knowing that I am actually competing against thousands of random people around the globe whom I cannot trust not to cheat when proper cheating is basically undetectable and also so easily accessible.

This could be really helpful. Furthermore, for those who want to get a job through their rating, I have an idea: the company could get a hidden permission to see one's true "ranking", so they could know whether the candidate was cheating or not

Where is chapter 1 btw?

https://mirror.codeforces.com/blog/entry/140786