We apologize for the late editorial.

2090A - Treasure Hunt

Idea: _Lucien

Let's calculate how many operations we need. The number of pairs of operations is $$$\left\lfloor\frac{a}{x + y}\right\rfloor$$$. Additionally, we add $$$+1$$$ if $$$(a \bmod (x + y)) \ge x$$$, because in this case, the first player will be able to make one more move. Note that the first part is always even, which means the answer depends only on whether $$$(a \bmod (x + y)) \ge x$$$, and in this case, the answer is YES.

#include <bits/stdc++.h>

using namespace std;

void solve() {

int x, y, a;

cin >> x >> y >> a;

if (a % (x + y) < x) {

cout << "NO\n";

} else {

cout << "YES\n";

}

}

int main() {

ios_base::sync_with_stdio(0);

cin.tie(0);

int t;

cin >> t;

while (t--)

solve();

}

2090B - Pushing Balls

Idea: Ecrade_

We can transform the problem into: select a row / column and replace the leftmost / topmost $$$0$$$ with $$$1$$$. Then the solution is clear: if, for each $$$1$$$ in the grid, there does not exist any $$$0$$$ on its top, or does not exist any $$$0$$$ on its left, then the answer is YES; otherwise NO.

But how to prove that if the condition is satisfied, we can always construct a valid series of ball-pushing operations? We can consider the problem in reverse order: given the final state, if, for an $$$1$$$ in the grid, there does not exist any $$$0$$$ on its top, or does not exist any $$$0$$$ on its left, then we can turn it into $$$0$$$, and our goal is to turn all $$$1$$$ s into $$$0$$$ s. We can always reach the goal if we choose $$$1$$$ s in descending order of $$$i+j$$$, where $$$(i,j)$$$ is their coordinates in the grid.

#include<bits/stdc++.h>

using namespace std;

typedef long long ll;

ll t,n,m,a[59][59],vis[59][59];

char s[59];

inline ll read(){

ll s = 0,w = 1;

char ch = getchar();

while (ch > '9' || ch < '0'){ if (ch == '-') w = -1; ch = getchar();}

while (ch <= '9' && ch >= '0') s = (s << 1) + (s << 3) + (ch ^ 48),ch = getchar();

return s * w;

}

int main(){

t = read();

while (t --){

n = read(),m = read();

for (ll i = 1;i <= n;i += 1){

scanf("%s",s + 1);

for (ll j = 1;j <= m;j += 1){

a[i][j] = s[j] - '0';

vis[i][j] = 0;

}

}

for (ll i = 1;i <= n;i += 1){

for (ll j = 1;j <= m;j += 1){

if (!a[i][j]) break;

vis[i][j] = 1;

}

}

for (ll j = 1;j <= m;j += 1){

for (ll i = 1;i <= n;i += 1){

if (!a[i][j]) break;

vis[i][j] = 1;

}

}

bool fl = 1;

for (ll i = 1;i <= n && fl;i += 1){

for (ll j = 1;j <= m;j += 1){

if (a[i][j] && !vis[i][j]){

fl = 0;

break;

}

}

}

puts(fl ? "YES" : "NO");

}

return 0;

}

2090C - Dining Hall

Idea: myee

Original idea from myee has $$$4$$$ cases, which is a medium difficulty problem using deletable heap. You may find the original statement in zh-cn on GitHub later.

The Codeforces version by FairyWinx has only $$$2$$$ cases, in order to meet the difficulty as Div2C.

The En/Ru statement changes for times after checking. Sorry for the inconvenience.

Firstly we can get the distance of each cell by BFS or Math Way: $$$d(x,y)=x+y+2[x\bmod3=y\bmod3=2]$$$.

The proof is trivial.

Then we can sort out the first $$$4n$$$ table cell with the lowest distance in $$$O(n)$$$ time: by BFS or enumerating possible $$$d(x,y)=k$$$.

Suppose we record whether a table cell is occupied or not by a bool array. Then we can check whether a cell can be allowed to go by someone $$$o=0/1$$$ in $$$O(1)$$$ time.

Suppose the shortest table cell we can choose is $$$x_0$$$ -th and $$$x_1$$$ -th ones, then $$$x_1\le x_0\le4n$$$. We can keep trying the smallest $$$x_o$$$ until it's okay.

The time complexity is $$$O(n)$$$.

#include <bits/stdc++.h>

using uint = unsigned;

using bol = bool;

const uint T=2000;

const uint R=200000;

uint Ord[R+5];

uint Nxt(uint p,uint o)

{

uint x=p/T,y=p%T;

if(o&1)y=y/3*3+3-y%3;

if(o&2)x=x/3*3+3-x%3;

return x*T+y;

}

bol G[2][T*T];uint Ans[2];

int main()

{

#ifdef MYEE

freopen("QAQ.in","r",stdin);

freopen("QAQ.out","w",stdout);

#endif

for(uint i=2,tp=0;i<T&&tp<R;i++)if(i%3==2)

{

for(uint x=1;x<i&&tp<R;x+=3)Ord[tp++]=x*T+i-x;

}

else if(!(i%3))

{

for(uint x=1;x<=i-2&&tp<R;x++)

if(x%3==1)Ord[tp++]=x*T+i-x;

else if(x%3==2)Ord[tp++]=x*T+i-x-2,Ord[tp++]=x*T+i-x;

Ord[tp++]=(i-1)*T+1;

}

uint t;scanf("%u",&t);

while(t--)

{

uint q;scanf("%u",&q);

for(uint i=0;i<q*4;i++)G[0][Ord[i]]=G[1][Ord[i]]=0;

Ans[0]=Ans[1]=0;

while(q--)

{

uint t;scanf("%u",&t);while(G[t][Ord[Ans[t]]])Ans[t]++;

t=Ord[Ans[t]];printf("%u %u\n",t/T,t%T);

G[1][t]=1;for(uint o=0;o<4;o++)G[0][Nxt(t,o)]=1;

}

}

return 0;

}

2089A - Simple Permutation

Idea: QuietBeautifulThoughts

Lemma (Bertrand's postulate): For each positive integer $$$x$$$, there is a prime $$$p$$$ inside the interval $$$[x,2x]$$$.

Find a prime number $$$p$$$ between $$$\lfloor \frac{n}{3} \rfloor$$$ and $$$\lceil \frac{2n}{3} \rceil$$$, and construct the permutation in an alternating way: $$$p, p-1, p+1, p-2, p+2, \dots$$$. Place the remaining numbers arbitrarily.

In this construction, we have $$$c_1 = c_3 = c_5 = \dots = c_{2 \lfloor \frac{n}{3} \rfloor - 1} = p$$$, which meets the requirement with at least $$$\lfloor \frac{n}{3} \rfloor$$$ numbers being prime.

#include<bits/stdc++.h>

using namespace std;

bool isPrime(int x){

if(x <= 1)

return 0;

for(int i = 2 ; i * i <= x ; ++i)

if(x % i == 0)

return 0;

return 1;

}

vector<int> generateSol(int n, int p){

vector<int> ans;

ans.push_back(p);

for(int i = 1 ; i <= n ; ++i){

if(p - i > 0)

ans.push_back(p - i);

if(p + i <= n)

ans.push_back(p + i);

}

return ans;

}

int main(){

int t;

cin >> t;

for(int i = 1 ; i <= t ; ++i){

int n;

cin >> n;

vector < int > ans;

for(int x = 0 ; ; ++x){

if(isPrime(n / 2 - x)){

ans = generateSol(n , n / 2 - x);

break;

}

if(isPrime(n / 2 + x)){

ans = generateSol(n , n / 2 + x);

break;

}

}

for(int i = 0 ; i < n ; ++i)

cout << ans[i] << " \n"[i == n - 1];

}

return 0;

}

2089B1 - Canteen (Easy Version)

Idea: Ecrade_

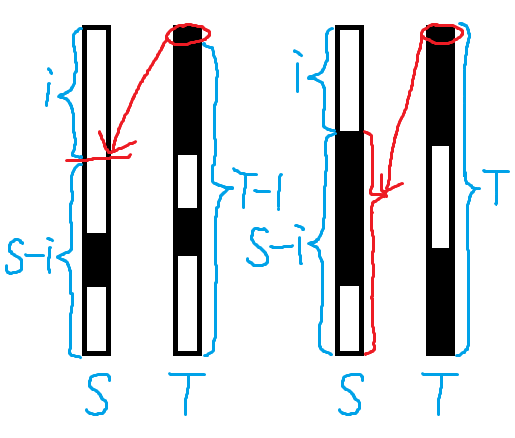

For each $$$ a_i $$$, calculate the minimum number of cyclic right shifts required to reduce it to zero, denoted as $$$ d_i $$$. The answer is the maximum $$$ d_i $$$ across all $$$ i $$$.

For cyclic problems, we can consider breaking the cycle into a chain (i.e., let $$$ a_{i+n} = a_i $$$, $$$ b_{i+n} = b_i $$$).

Consider constructing a parenthesis sequence $$$ s $$$ by concatenating $$$ a_0 $$$ left parentheses, $$$ b_0 $$$ right parentheses, $$$ a_1 $$$ left parentheses, $$$ b_1 $$$ right parentheses, ... ,$$$ a_{2n-1} $$$ left parentheses and $$$ b_{2n-1} $$$ right parentheses. The operation in the original problem corresponds to the process of matching parentheses in $$$ s $$$:

- "Set the smaller of $$$(a_i, b_i)$$$ to zero and the larger to their difference" $$$\Rightarrow$$$ "Match the parentheses corresponding to $$$(a_i, b_i)$$$".

- "Cyclically right-shift $$$ a $$$" $$$\Rightarrow$$$ "Unmatched left parentheses continue to find matching right parentheses".

The first time $$$ a_i $$$ is reduced to zero depends on which $$$ b_j $$$ ’s right parenthesis matches the leftmost parenthesis corresponding to $$$ a_i $$$.

We can use prefix sums to find matchings of the parenthesis: Let $$$ c_i = \sum\limits_{j=0}^i (a_j - b_j) $$$. Define $$$ p_i $$$ as the smallest index satisfying $$$ p_i \gt i $$$ and $$$ c_{p_i} \leq c_i $$$, then $$$ d_i = p_i - i $$$. We can compute all $$$ p_i $$$ with a monotonic stack.

It should be pointed out that matching parentheses is just an intuitive way of understanding the solution. You can, of course, also draw the above conclusion by direct observation.

The time complexity is $$$ O(\sum n) $$$.

#include<bits/stdc++.h>

using namespace std;

typedef long long ll;

ll t,n,k,a[400009],stk[400009];

inline ll read(){

ll s = 0,w = 1;

char ch = getchar();

while (ch > '9' || ch < '0'){ if (ch == '-') w = -1; ch = getchar();}

while (ch <= '9' && ch >= '0') s = (s << 1) + (s << 3) + (ch ^ 48),ch = getchar();

return s * w;

}

int main(){

t = read();

while (t --){

n = read(),k = read();

for (ll i = 1;i <= n;i += 1) a[i] = read();

for (ll i = 1;i <= n;i += 1) a[i] = a[i + n] = a[i] - read();

for (ll i = 1;i <= 2 * n;i += 1) a[i] += a[i - 1];

ll tp = 0,ans = 0;

for (ll i = 2 * n;i >= 1;i -= 1){

while (tp && a[stk[tp]] > a[i]) tp -= 1;

if (i <= n) ans = max(ans,stk[tp] - i);

stk[++ tp] = i;

}

printf("%lld\n",ans);

}

return 0;

}

2089B2 - Canteen (Hard Version)

Idea: Ecrade_

We can use binary search on the answer $$$ x $$$, and calculate the minimum number of changes needed to make $$$a$$$ zero within $$$ x $$$ rounds.

However, direct computation on the cyclic chain of $$$ 2n $$$ elements is troublesome (due to overlapping contributions). Instead, we can cyclically shift $$$ a $$$ and $$$ b $$$ such that $$$ c_{n-1} = \min\limits_{i=0}^{n-1} c_i$$$, which ensures that no $$$ a_{n-1} \gt 0 $$$ will be cyclically shifted to $$$a_0$$$, enabling us to only consider the first $$$n$$$ elements of the chain.

For the adjusted $$$ a $$$ and $$$ b $$$, we can greedily process $$$ i $$$ from $$$ n - x - 1 $$$ to $$$ -1 $$$: If $$$ \min\limits_{j=i+1}^{i+x}c_j \gt c_i $$$, then we should apply $$$ \min\limits_{j=i+1}^{i+x}c_j - c_i $$$ changes to $$$ a_{i+1} $$$ (assume that $$$c_{-1}=0$$$). We can also use a monotonic stack to maintain the whole process.

Note that if $$$\sum\limits_{i=0}^{n-1} a_i=k$$$, we should output $$$0$$$.

The time complexity is $$$ O(\sum n\log k) $$$.

Bonus: Find an $$$O(\sum n)$$$ solution.

#include<bits/stdc++.h>

using namespace std;

typedef long long ll;

ll t,n,k,pos,a[400009],stk[400009];

inline ll read(){

ll s = 0,w = 1;

char ch = getchar();

while (ch > '9' || ch < '0'){ if (ch == '-') w = -1; ch = getchar();}

while (ch <= '9' && ch >= '0') s = (s << 1) + (s << 3) + (ch ^ 48),ch = getchar();

return s * w;

}

bool judge(ll x){

ll l = 1,r = 0,res = 0;

for (ll i = pos;i >= pos - n;i -= 1){

if (l <= r && stk[l] - i > x) l += 1;

if (pos - i >= x) res += max(0ll,a[stk[l]] - a[i]);

if (res > k) return 0;

while (l <= r && a[stk[r]] > a[i]) r -= 1;

stk[++ r] = i;

}

return 1;

}

int main(){

t = read();

while (t --){

n = read(),k = read();

ll suma = 0;

for (ll i = 1;i <= n;i += 1) a[i] = read(),suma += a[i];

for (ll i = 1;i <= n;i += 1) a[i] -= read(),a[i + n] = a[i];

if (suma == k){puts("0"); continue;}

for (ll i = 1;i <= 2 * n;i += 1) a[i] += a[i - 1];

pos = n;

for (ll i = n + 1;i <= 2 * n;i += 1) if (a[i] < a[pos]) pos = i;

ll l = 1,r = n,ans = n;

while (l <= r){

ll mid = (l + r) >> 1;

if (judge(mid)) ans = mid,r = mid - 1;

else l = mid + 1;

}

printf("%lld\n",ans);

}

return 0;

}

2089C2 - Key of Like (Hard Version)

Idea: SpiritualKhorosho

Background

This problem is inspired by a minigame from a recently released party video game, but the story was modified to comply with the title. We tried to isolate the artistic part and the theoretical part, and I apologize if you still feel like working on reading comprehension exercises.

Solution

Noticing that each member's strategy is not maximizing the total outcome (which is the expected number of successful matches between keys and locks), but only maximizing the outcome in her current turn, and equally likely to adopt each of optimal (pure) strategies, it will be ideal if there is some simple form of the strategy that only depends on $$$L$$$ and $$$K$$$.

Analyzing the Strategy

At the very beginning, when there is no information about the relationship between keys and locks, the first member in her first turn must randomly choose a key and a lock.

What about the second member, if the first one is not lucky enough? Since she will not choose the same pair of keys and locks, there are three different types of (pure) strategies:

Choose a different key to open the same lock;

Choose the same key to open a different lock;

Choose a different key and a different lock.

For the first type of strategy, since there are $$$(L+K-1)$$$ keys remaining (after the very first attempt), and among them is only one correct key, the probability of opening the same lock is $$$1/(L+K-1)$$$.

For the second type, it can be proven that the probability is also $$$1/(L+K-1)$$$. Another way to think of this is, add $$$K$$$ imaginary locks and match each of them with a counterfeit key. Then, it is apparent that a new lock matches the selected key with the given probability.

The key selected by the first member can either be a real key or a counterfeit one. Denote the event of the key being authentic as $$$A$$$, and that of it failing to open the lock at the first turn as $$$B$$$. Then, according to the Bayes' theorem along with the law of total probability,

\begin{equation} \begin{split} P(A|B) &= \frac{P(B|A)P(A)}{P(B)} = \frac{P(B|A)P(A)}{P(B|A)P(A) + P\left(B\left|\bar{A}\right.\right)P\left(\bar{A}\right)} \\ &= \frac{((L-1)/L)\cdot (L/(L+K))}{((L-1)/L)\cdot (L/(L+K)) + 1\cdot(K/(L+K))}\\ &= \frac{L-1}{L+K-1},\\ P\left(\left.\bar{A}\right|B\right) &= 1 — P(A|B) = \frac{K}{L+K-1}. \end{split} \end{equation}

Thus, the probability that the same key selected by the first member can open another lock randomly chosen by the second member (denoted as $$$C$$$) is

\begin{equation} \begin{split} P(C|B) &= P(A|B)\cdot P(C|AB) + P\left(\left.\bar{A}\right|B\right)\cdot P\left(C\left|\bar{A}B\right.\right)\\ &=\frac{L-1}{L+K-1}\cdot\frac{1}{L-1} + \frac{K}{L+K-1}\cdot 0\\ &= \frac{1}{L+K-1}. \end{split} \end{equation}

For the third type of strategy, its probability can be computed from either the first type or the second type, as $$$(L+K-1)$$$ different outcomes are equally likely to happen:

\begin{equation} \frac{1-1/(L+K-1)}{L+K-1}=\frac{L+K-2}{(L+K-1)^2}<\frac{1}{L+K-1}. \end{equation}

In conclusion, the second member will either choose the same key or the same lock. Because there are $$$(L - 1)$$$ pairs of the same key and other locks, and $$$(L + K - 1)$$$ pairs of other keys and the same lock, the second member will use the same key with probability $$$(L - 1)/(2L + K - 2)$$$, or check the same lock with probability $$$(L + K - 1)/(2L + K - 2)$$$.

As for all the following attempts, a similar Proof shows that, everyone will imitate the strategy adopted by the second member. That is to say, if the second member decides to continue with the same key, everyone will keep using this same key, until a lock is opened or all the locks are checked; if the second member picks a different key for the same lock, everyone will challenge this same lock, until it is eventually settled (and obviously it will).

Furthermore, whenever a key is identified as counterfeit, or a lock is opened, the information 'collapses', which turns the original problem into a subproblem with either one less counterfeit key (corresponding to $$$K' = K - 1$$$), or both one less valid key and one less lock ($$$L' = L - 1$$$), respectively.

To sum up, the process of the game is:

- Whenever a lock is opened, the original problem is reduced to a subproblem with $$$L' = L - 1$$$;

- Whenever a key is proven counterfeit, the original problem is reduced to a subproblem where $$$K' = K - 1$$$; -The first member randomly chooses a key and a lock to check if they match;

- If the first member fails, the second member adopts the same key with probability $$$(L - 1)/(2L + K - 2)$$$, or investigates the same lock with probability $$$(L + K - 1)/(2L + K - 2)$$$;

- If the second member fails as well, all the following attempts will replicate the choice of whether to employ the same key or the same lock.

Design the Algorithm with Strategies Solved

Knowing the concrete strategies, the task now becomes how to compute the expected values efficiently. As you might have imagined, it can be achieved using dynamic programming due to the existence of overlapping subproblems. But let's examine another approach which might be easier to understand: compute $$$p_i (l, k)$$$, the total probability that the $$$i$$$-th member begins a subproblem with $$$l$$$ locks and $$$k$$$ counterfeit keys, and accumulate $$$e_i$$$'s during the computation.

Transitions for $$$p$$$ itself include (and those from $$$p$$$ to $$$e$$$ will be similar to):

- The first member manages to open the lock without any prior information;

- The second member makes a successful guess;

- Any of the succeeding attempts succeeds;

- Everyone uses the same key but it fails on all the locks.

For each $$$p_i(l, k)$$$, all the transition can be easily processed in $$$\Theta(1)$$$ time, except the third one. A verbatim implementation requires $$$O(L + K)$$$ time per state, which is apparently not efficient enough. Since $$$N \ll L$$$, a possible work-around is to compute the transition coefficient for each member, instead of each attempt. Intuitively, all attempts should share the same probability of success, regardless of the order. A simplified model is to draw all balls sequentially without replacement from a box of $$$1$$$ red ball and $$$(x-1)$$$ white balls, in which the red ball appears at the $$$i$$$-th attempt with an equal probability of $$$1/x$$$. Of course, the observation for our problem can also be proven mathematically, but let's omit it for simplicity.

This important observation reduces the time complexity of the third type of transition to $$$O(N)$$$, as we can first compute the maximum number of attempts for each member, and then multiply it with the common probability of a single attempt. However, this is still not enough for passing the problem (and we adjusted the constraints to prevent so).

The final trick to pass all testcases requires revisiting the transition coefficients we just computed. Notice that, if all the members have $$$a$$$ attempts in total (but for the third type of transition only, which excludes the first two), then:

If $$$a$$$ is a multiple of $$$N$$$, then every member can have at most $$$a/N$$$ attempts;

Otherwise, the first $$$a \bmod N$$$ members (right after the second member, who decides to advance with the same key or the same lock) can have at most $$$\lceil a/N\rceil$$$ attempts, while all the others can have at most $$$\lfloor a/N\rfloor$$$ attempts.

This implies that the transition coefficients consists of at most $$$3$$$ maximal contiguous subsequences of the same coefficients (in fact, if the coefficients are treated as a circular sequence, then there will be exactly $$$1$$$ or $$$2$$$ for the two cases respectively). Using techniques such as prefix sums helps further decrease the complexity of the computation to $$$\Theta(1)$$$ per state.

The total time complexity is $$$O(NLK)$$$.

#include <cstdio>

#include <cstring>

using namespace std;

#define MOD 1000000007

#define MAXN 108

#define MAXL 5004

#define MAXK 27

int e[MAXN], p[2][MAXK][MAXN], inv[2 * MAXL + MAXK];

inline void _update(int * const a, const int lbd, const int rbd, const int base, const int diff) {

(a[0] += base) %= MOD;

(a[lbd] += diff) %= MOD;

(a[rbd] += MOD - diff) %= MOD;

return ;

}

#define Hill(_a, _lbd, _rbd, _base, _diff) _update(_a, _lbd, _rbd, _base, _diff)

#define Valley(_a, _lbd, _rbd, _base, _diff) _update(_a, _rbd, _lbd, ((_base) + (_diff)) % MOD, MOD - (_diff))

#define Update(_a, _lbd, _rbd, _base, _diff) { if (_lbd < _rbd) Hill(_a, _lbd, _rbd, _base, _diff); else Valley(_a, _lbd, _rbd, _base, _diff); }

int like() {

int n, l, k, i, j, a, b, dh, now, nxt, totk, ntry, prob, pd, pb, lbd, rbd;

scanf("%d %d %d", &n, &l, &k);

if (n == 1) {

printf("%d\n", l);

return 0;

}

inv[1] = 1;

j = 2 * l + k;

for (i = 2; i <= j; ++i) inv[i] = 1ll * (MOD - MOD / i) * inv[MOD % i] % MOD;

memset(p, 0, sizeof(p));

memset(e, 0, sizeof(e));

p[0][0][0] = 1;

p[0][0][1] = -1;

now = 0; nxt = 1;

for (i = 0; i < l; ++i) {

for (j = 0; j <= k; ++j) {

totk = l + k - i - j;

for (a = 1; a < n; ++a) (p[now][j][a] += p[now][j][a - 1]) %= MOD;

for (a = 0; a < n; ++a) {

// Match at the first try

prob = 1ll * p[now][j][a] * inv[totk] % MOD;

(e[a] += prob) %= MOD;

if (a != n - 1) (e[a + 1] += MOD - prob) %= MOD;

(p[nxt][j][(a + 1) % n] += prob) %= MOD;

if (a != n - 2) (p[nxt][j][(a + 2) % n] += MOD - prob) %= MOD;

prob = 1ll * prob * inv[totk + l - i - 2] % MOD;

// Same lock

pd = 1ll * prob * (ntry = totk - 1) % MOD;

pb = 1ll * (ntry / n) * pd % MOD;

if (dh = ntry % n) {

lbd = (a + 1) % n;

rbd = (a + dh) % n + 1;

Update(e, lbd, rbd, pb, pd);

(++lbd) %= n;

++(rbd %= n);

Update(p[nxt][j], lbd, rbd, pb, pd);

}

else {

(e[0] += pb) %= MOD;

(p[nxt][j][0] += pb) %= MOD;

}

// Same key

pd = 1ll * prob * (ntry = l - i - 1) % MOD;

if (j < k) {

pb = 1ll * pd * (k - j) % MOD;

b = (a + l - i) % n;

(p[now][j + 1][b] += pb) %= MOD;

if (b != n - 1) (p[now][j + 1][b + 1] += MOD - pb) %= MOD;

}

pb = 1ll * (ntry / n) * pd % MOD;

if (dh = ntry % n) {

lbd = (a + 1) % n;

rbd = (a + dh) % n + 1;

Update(e, lbd, rbd, pb, pd);

(++lbd) %= n;

++(rbd %= n);

Update(p[nxt][j], lbd, rbd, pb, pd);

}

else {

(e[0] += pb) %= MOD;

(p[nxt][j][0] += pb) %= MOD;

}

}

}

now ^= 1;

nxt ^= 1;

memset(p[nxt], 0, sizeof(int) * MAXK * MAXN);

}

for (i = 1; i < n; ++i) (e[i] += e[i - 1]) %= MOD;

for (i = 0; i < n; ++i) printf("%d%c", e[i], " \n"[i == n - 1]);

return 0;

}

int main() {

int t;

scanf("%d", &t);

while (t--) {

like();

}

return 0;

}

2089D - Conditional Operators

Idea: E.Space

Each operation transform three adjacent characters into one. If the string starts with 11, the answer should be yes since we can first transform the remaining part into a single 0 or 1 and then the value of 11? is always $$$1$$$.

If the string ends with 1, only 101 has no solution, otherwise it starts with 11 or 0, or we can transform 10? in the front of the string into a 0. Then transform the rest part of the string into a single character to make the last operation 0?(anything):1 makes 1.

If an operation transformed a string ending with 0 into one ending with 1, it must be 1?1:0 makes 1.

Consider the case where the strings contains 11 as a substring. After transforming the remaining part, ?11?? or ??11? will be derived. The cases are 01100, 00110, 01110, 10110. All other cases start with 11 or end with 1.

All cases of ??11? have a solution:

(0?0:1)?1:0 makes 1.

(0?1:1)?1:0 makes 1.

1?(0?1:1):0 makes 1.

However, 01100 has no solution.

Since 0?0:1 equals 1, before the last 1, any two adjacent 0's with no 1 in between can be eliminated. Thus, if there are two 1's with an even number of 0's in between, and there are an even number of characters in front the former 1, i.e., (..)*1(00)*1(..)*0 in regular expression, the answer should be yes, since after eliminating the 0's in between, the 1's become adjacent. The parity of the characters before the former 1 guarantees that it will not become 01100.

Another case is that the string ends with 0 and every pair of adjacent 1's has an odd number of 0's in between. Since 0(1?0:1)0 makes 000, 1(0?1:0)1 makes 101, 10(1?0:0)01 makes 10001, there is no way to change the parity of the consecutive 0's to make 11 to change the ending 0 into 1.

In the remaining case, there are even 0's between some pair of adjacent 1's, but the number of characters before the first 1 is odd, there must be only one pair. Suppose the string is 1-indexed. It is because the indices of such pair of 1's must be [odd, even]; while the indices in the previous case must be [even, odd]. Thus, if there are two such pairs of this case [odd, even], in the parity sequence [odd, even, ..., odd, even] there must be an [even, odd] as a substring.

Therefore, any other pair of adjacent 1's must have odd 0's in between, i.e., $$$0(00)^*\color{blue}{(10(00)^*)^*}\color{red}1\color{black}(00)^*\color{red}1\color{blue}{((00)^*01)^*}\color{black}(00)^+$$$ in regular expression. Any operation remains the string in this case, except that the string is $$$0(00)^*\color{blue}{(10(00)^*)^*}\color{red}1\color{red}1\color{black}(00)^+$$$ and the operation is $$$\color{red}1\color{black}?\color{red}1\color{black}:0$$$ makes 1. Since it must ends with 00, it becomes a string ending with 0 and every pair of adjacent 1's has odd 0's in between, which is a case mentioned before that has no solution.

In conclusion, it has a solution if and only of at least one of the two following conditions is met:

- It ends with

1but it is not101. - It has two adjacent 1's such that there are even 0's in between and the number of characters before the former

1is even.

#include<bits/stdc++.h>

char s[333333];

char val(char x,char y,char z){

return x=='1'?y:z;

}

std::pair<std::string, char> fold(int l,int r){

std::string str;

char v=s[r];

for(int i=l;i<r;i+=2){

str+=s[i];

str+='?';

str+=s[i+1];

str+=':';

}

str+=s[r];

for(int i=r-2;i>=l;i-=2){

v=val(s[i],s[i+1],v);

}

return std::make_pair(str,v);

}

void solve(){

int n;

scanf("%d",&n);

n=2*n+1;

scanf("%s",s+1);

int last=0;

for(int i=1;i<=n;++i){

if(s[i]=='1'&&last>0){

if(i%2==0&&last&1){

puts("Yes");

std::string s4=fold(last+1,i).first;

auto tmp=fold(i+1,n);

std::string s5=tmp.first;

char v5=tmp.second;

if(last==1){

printf("1?(%s):(%s)\n",s4.c_str(),s5.c_str());

return;

}

tmp=fold(1,last-2);

std::string s1=tmp.first;

char v1=tmp.second;

if(v1=='1'){

printf("(%s)?(%c?1:(%s)):(%s)\n",s1.c_str(),s[last-1],s4.c_str(),s5.c_str());

}

else{

printf("((%s)?%c:1)?(%s):(%s)\n",s1.c_str(),s[last-1],s4.c_str(),s5.c_str());

}

return;

}

}

if(s[i]=='1'){

last=i;

}

}

if(s[n]=='1'&&std::string(s+1)!="101"){

puts("Yes");

auto left=s[1]=='0'?fold(1,1):fold(1,3);

auto mid=s[1]=='0'?fold(2,n-1):fold(4,n-1);

printf("(%s)?(%s):1\n",left.first.c_str(),mid.first.c_str());

return;

}

puts("No");

return;

}

int main(){

int T;

scanf("%d",&T);

while(T--){

solve();

}

return 0;

}

2089E - Black Cat Collapse

Idea: abruce

Firstly, consider a classical dp to calculate $$$dp_{u,k}$$$ as the number of delete $$$k$$$ vertices in subtree $$$u$$$ without considering deleting vertex $$$n-i+1$$$ everyday. This is trivial.

Then, due to the existence of an “automatic deletion” process, we cannot view the selected points within the subtree of $$$u$$$ as operations with only relative order, because they may be illegal. However, we consider the final operation sequence, putting the vertex chosen on the $$$i$$$ th day in the position $$$n-i+1$$$. That is, if we firstly choose $$$4$$$, then $$$3$$$, then $$$1$$$, it will be $$$0431$$$. Then, one vertex $$$u$$$ must be put in position $$$u$$$ or later.

Then, we find that for the subtree of vertex $$$u$$$ with DFS order interval $$$[l,r]$$$, all vertices can't be put in position before $$$l$$$, and it is always legal to put in position after $$$r$$$ if parent-child relations are satisfied.

So we consider a dp $$$f_{u,i,j,k}$$$ which represents that the subtree of $$$u$$$ leaves position $$$i$$$ for ancestors (which means nothing can be placed at position $$$i$$$ or before, and if $$$i=0$$$, then nothing is left for ancestors), there are $$$j$$$ empty spaces (not including the one left for ancestors), and $$$k$$$ vertices need to be put into positions after the subtree of $$$u$$$. In the $$$i=0$$$ case, we merge subtrees, and the subtree with smaller DFS order can put some backward operations into the space of the subtree with bigger DFS order.

Specifically, consider the sons of $$$u$$$ enumerated in reverse order of DFS, and the son is $$$v$$$. Then enumerate $$$w$$$ as how many backward operations of $$$v$$$ will fill in space of $$$u$$$. The transition is $$$f_{u,0,j+j1-w,k+k1-w}\leftarrow f_{u,0,j+j1-w,k+k1-w}+f_{u,0,j,k}\times f_{v,0,j1,k1}\times \binom{j}{w}\times\binom{k+k1-w}{k}$$$. That is, after putting $$$w$$$ operations from backward operations of $$$v$$$ into spaces of $$$u$$$, we merge the spaces of $$$u$$$ and $$$v$$$, then the backward operations of $$$u$$$ and $$$v$$$.

For $$$f_{v,i,j,k}(i\neq 0)$$$, it won't interfere with the transition with its following siblings. And for $$$f_{u,i,j,k}$$$,any operations from $$$v$$$ can't put into the subtree of $$$u$$$ and $$$v$$$, so we only need to merge backward operations of $$$u$$$ and $$$v$$$.

After finishing the transition of subtrees, we consider where to put $$$u$$$.

- If we don't put $$$u$$$, there will be one more space, and we can consider whether to leave it for ancestors if $$$i=0$$$.That is $$$f_{u,i,j,k}\rightarrow f_{u,i,j+1,k},f_{u,0,j,k}\rightarrow f_{u,u,j,k}$$$.

- We put $$$u$$$ into the position left for ancestors, that is $$$f_{u,i,j,k}\rightarrow f_{u,0,j+1,k}$$$. And we can leave another position for ancestors of $$$u$$$, that is $$$f_{u,i,j,k}\rightarrow f_{u,i1,j+1,k}(i1 \lt i)$$$.

- We put $$$u$$$ into position $$$u$$$, in that case, we can't leave position for ancestors of $$$u$$$, that is $$$f_{u,0,j,k}\rightarrow f_{u,0,j,k}$$$.

- We put $$$u$$$ backward, then we can't put anything in the subtree of $$$u$$$, but we can leave position for ancestors of $$$u$$$, that is $$$f_{u,i,siz_u-2,k}\rightarrow f_{u,i,siz_u-1,k+1},f_{u,0,siz_u-1,k}\rightarrow f_{u,0,siz_u,k+1}$$$.

The answer for $$$i$$$ operations is just we leave position $$$n-i+1$$$ for $$$1$$$, and there are $$$n-i$$$ spaces (therefore the spaces are before $$$n-i+1$$$) and no backward operations.

The time complexity is $$$O(\sum n^5)$$$.

#include<bits/stdc++.h>

using namespace std;

typedef long long ll;

int read() {

int x=0,f=1;

char c=getchar();

while(c<'0'||c>'9') {

if(c=='-')f=-1;

c=getchar();

}

while(c>='0'&&c<='9')x=x*10+c-'0',c=getchar();

return x*f;

}

namespace tokido_saya {

const int maxn=81,mod=998244353;

typedef vector<int>::iterator iter;

int n,lp[maxn],rp[maxn],siz[maxn],t;

vector<int> v[maxn];

ll f[maxn][maxn][maxn][maxn],dp[maxn][maxn],fac[maxn],inv[maxn];

int zc[maxn][maxn][maxn][maxn];

ll qpow(ll x,int y)

{

ll w=1;

while(y)

{

if(y&1)w=w*x%mod;

x=x*x%mod,y>>=1;

}

return w;

}

ll C(int x,int y)

{

if(y<0||y>x)return 0;

return fac[x]*inv[x-y]%mod*inv[y]%mod;

}

void dfs1(int u)

{

lp[u]=rp[u]=u;

for(iter it=v[u].begin();it!=v[u].end();it++)dfs1(*it),rp[u]=max(rp[u],rp[*it]);

}

void dfs2(int u)

{

f[u][0][0][0]=1,dp[u][0]=1;

for(iter it=v[u].begin();it!=v[u].end();it++)

{

int v=*it;

dfs2(v);

for(int i=siz[u];i>=0;i--)

for(int j=siz[v];j;j--)dp[u][i+j]=(dp[u][i+j]+dp[u][i]*dp[v][j]%mod*C(i+j,j))%mod;

for(int j1=0;j1<=siz[v];j1++)

for(int k1=0;k1<=min(j1+1,siz[v]);k1++)

{

memset(zc[j1][k1],0,sizeof(zc[j1][k1]));

for(int j=0;j<=siz[u];j++)

for(int k=0;k<=j+1;k++)

for(int w=0;w<=min(k1,j);w++)zc[j1][k1][j+j1-w][k+k1-w]=(zc[j1][k1][j+j1-w][k+k1-w]+f[u][0][j][k]*C(j,w)%mod*C(k+k1-w,k))%mod;

}

memset(f[u][0],0,sizeof(f[u][0]));

for(int j=0;j<=siz[v];j++)

for(int k=0;k<=j+1;k++)

for(int j1=0;j1<=siz[u]+siz[v];j1++)

for(int k1=0;k1<=min(siz[u]+siz[v],j1+1);k1++)

if(zc[j][k][j1][k1])

{

const int w=zc[j][k][j1][k1];

f[u][0][j1][k1]=(f[u][0][j1][k1]+f[v][0][j][k]*w)%mod;

for(int i=lp[v];i<=rp[v];i++)f[u][i][j1][k1]=(f[u][i][j1][k1]+f[v][i][j][k]*w)%mod;

}

for(int i=rp[v]+1;i<=rp[u];i++)

for(int j=siz[u]-1;j>=i-rp[v]-1;j--)

for(int k=j+1;k>=0;k--)

{

const int val=f[u][i][j][k];

f[u][i][j][k]=0;

for(int p=0;p<=siz[v];p++)

for(int w=0;w<=min(p,j-(i-rp[v]-1));w++)f[u][i][j+siz[v]-w][k+p-w]=(f[u][i][j+siz[v]-w][k+p-w]+dp[v][p]*val%mod*C(j-(i-rp[v]-1),w)%mod*C(k+p-w,k))%mod;

}

siz[u]+=siz[v];

}

for(int i=siz[u];i>=0;i--)dp[u][i+1]=(dp[u][i+1]+dp[u][i])%mod;

if(u!=1)for(int j=siz[u];j>=0;j--)

for(int k=min(j+1,siz[u]);k>=0;k--)

{

ll sum=0;

for(int i=rp[u];i>=lp[u];i--)

{

const int w=f[u][i][j][k];

f[u][i][j+1][k]=(f[u][i][j+1][k]+w)%mod;

if(j==siz[u]-1)f[u][i][j+1][k+1]=(f[u][i][j+1][k+1]+w)%mod;

f[u][i][j][k]=sum,sum=(sum+w)%mod;

}

const int w=f[u][0][j][k];

f[u][lp[u]][j][k]=(f[u][lp[u]][j][k]+w)%mod,f[u][0][j+1][k]=(f[u][0][j+1][k]+w+sum)%mod;

if(j==siz[u])f[u][0][j+1][k+1]=(f[u][0][j+1][k+1]+w)%mod,f[u][lp[u]][j][k+1]=(f[u][lp[u]][j][k+1]+w)%mod;

}

siz[u]++;

}

int main() {

int x,y;

t=read(),fac[0]=1;

for(int i=1;i<=80;i++)fac[i]=fac[i-1]*i%mod;

inv[80]=qpow(fac[80],mod-2);

for(int i=80;i;i--)inv[i-1]=inv[i]*i%mod;

while(t--)

{

n=read(),memset(f,0,sizeof(f)),memset(dp,0,sizeof(dp)),memset(siz,0,sizeof(siz));

for(int i=1;i<=n;i++)v[i].clear();

for(int i=1;i<n;i++)

{

x=read(),y=read();

if(x>y)swap(x,y);

v[x].push_back(y);

}

for(int i=1;i<n;i++)sort(v[i].begin(),v[i].end()),reverse(v[i].begin(),v[i].end());

dfs1(1),dfs2(1);

for(int i=n;i>1;i--)printf("%lld ",f[1][i][i-2][0]);

puts("1");

}

return 0;

}

}

int main() {

return tokido_saya::main();

}