Introduction

Most of DP algorithms I studied online, there are some subtleties that I didn't find any proof of. May be they are found somewhere already, but not to my knowledge.

I think most people find the things that I'm going to prove here intuitive and need no proof, but for if you're really picky about being uncertain of something if you didn't see a proof (like me), it can bother you. Also, knowing the proofs can sometimes better help the thinking process.

The purpose of this blog is to provide examples of proofs of famous DP algorithms to, first show them, and second serve as a general pattern of techniques on how would one prove a DP algorithm.

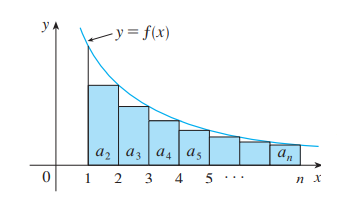

Now, most DP problem involve a set of states $$$V$$$, and a set of transitions $$$E$$$ such that $$$(V, E)$$$ constitutes a directed acyclic graph or DAG (sometimes weighted).

Thus, we will continue discussing DP calculations on a DAG.

Conventions

A graph $$$G = (V, E)$$$ is a pair of sets $$$V$$$ and $$$E$$$ where $$$E \subseteq V^2$$$. In this blog, we will assume $$$(u, u) \notin E$$$ for all $$$u \in V$$$.

For $$$u \in V$$$, we define $$$N(u) = \{ v : (u, v) \in E \}$$$

A path is a sequence $$$(a_1, a_2, \dots, a_k)$$$ of pairwise distinct vertices where $$$(a_i, a_{i + 1}) \in E$$$ for all $$$1 \leq i \lt k$$$.

For a path $$$p = (a_1, a_2, \dots, a_k)$$$, we say $$$v \in p$$$ if $$$a_i = v$$$ for some $$$1 \leq i \leq k$$$, and we say that an edge $$$(u, v) \in p$$$ if $$$a_i = u$$$ and $$$a_{i + 1} = v$$$ for some $$$1 \leq i \lt k$$$.

The notation $$$\mathbf{1}[\text{condition}]$$$ denotes a predicate that is $$$1$$$ when the condition is true and $$$0$$$ otherwise.

Warmup: Counting DP

Fix $$$S \subseteq V$$$, and let $$$P_u$$$ be the set of all paths starting at $$$u$$$ and ending at $$$s \in S$$$.

Define $$$D_u = |P_u|$$$. We want to show the following recursive formula for all $$$u \in V$$$

if there doesn't exist a path from $$$u$$$ to $$$s \in S$$$, then $$$D_u = 0$$$, (which is trivially correct since $$$P_u = \emptyset$$$)

otherwise $$$\displaystyle D_u = \sum_{v \in N(u)} D_v + \mathbf{1}[u \in S]$$$.

If so, we can compute $$$D$$$ using the following algorithm (or you can also use recursion)

- Initialize $$$D$$$ to $$$0$$$ s.

- Initialize $$$D_s = 1$$$ for all $$$s \in S$$$.

- Let $$$L$$$ be a topological order of $$$G$$$ in reverse.

- For each $$$u \in L$$$,

- For each $$$v \in N(u)$$$:

- $$$D_u := D_u +D_v$$$.

The correctness of such an algorithm can be just the recursive formula an induction on the order in the topological sorting.

Define $$$P_{u, v}$$$ for $$$(u, v) \in E$$$ denote the set of all paths from $$$u$$$ to $$$s \in S$$$ that starts with $$$u$$$ and $$$v$$$, and define

Then, clearly

Moreover, when $$$v, v' \in N(u)$$$ have $$$v \neq v'$$$, $$$P_{u, v}$$$ and $$$P_{u, v'}$$$ are disjoint, and clearly $$$S_u$$$ and $$$P_{u, v}$$$ are disjoint.

Thus,

It remains to see that $$$|P_{u, v}| = |P_v|$$$, by showing that the map $$$(u, v, \dots, s) \mapsto (v, \dots, s)$$$ is a bijection between $$$P_{u, v}$$$ and $$$P_v$$$ (which is left as an exercise), and the conclusion follows.

This can serve as a template for most DP counting problems.

More advanced counting

Weigh the edges of $$$G$$$ with a weight function $$$w : E \to \mathbb{R}$$$, and define $$$D_u$$$ and $$$S$$$ as before.

For a path $$$p = (a_1, a_2, \dots, a_k)$$$, define its weight as

Also, define

That is, $$$F_u$$$ sums the weights of edges over all paths from $$$u$$$ to an $$$s \in S$$$ (convention is that empty sum is $$$0$$$ and empty product is $$$1$$$).

We want to show that we can calculate $$$F$$$ this way:

If there doesn't exist a path of at least one edge from $$$u$$$ to an $$$s \in S$$$, then $$$F_u = 0$$$.

Otherwise,

Thus, we can compute it this way

- Initialize $$$F$$$ to $$$0$$$ s.

- Let $$$L$$$ be a topological order of $$$G$$$ in reverse.

- For each $$$u \in L$$$,

- For each $$$v \in N(u)$$$:

- $$$F_u := F_u + w(u, v) D_v + F_v$$$.

Assume otherwise. Then,

as desired.

Knapsack DP

Redefine $$$F$$$ as follows: for any $$$u$$$ where $$$P_u \neq \emptyset$$$ (if $$$P_u = \emptyset$$$, define $$$F_u = -\infty$$$),

In other words, $$$F_u$$$ is the maximum sum of weights over all paths from $$$u$$$ to $$$s$$$.

(Note that the empty path from $$$s$$$ to $$$s$$$ has weight $$$0$$$, and so $$$F_s = 0$$$).

We want to show that

Thus, we compute $$$F$$$ this way

- Initialize $$$F_u$$$ to $$$-\infty$$$ for all $$$u \in V$$$

- Set $$$F_s = 0$$$ for all $$$s \in S$$$.

- Let $$$L$$$ be a topological order of $$$G$$$ in reverse.

- For each $$$u \in L$$$,

- For each $$$v \in N(u)$$$:

- $$$F_u := \max(F_u, w(u, v) + F_v)$$$.

We can show it this way:

Everything else is the same when we're doing $$$\min$$$ instead of $$$\max$$$ (switching $$$-\infty$$$ to $$$\infty$$$).

Exercise. build the implicit DAG for the actual knapsack problem. Show that the maximum weight of a path on such a DAG is actually what the problem requires.

Expected Value DP

Keep the definitions as they are, and keep the weight function $$$w$$$, however, here, for simplicity, we consider $$$S = \{ s \in V : N(s) = \emptyset \}$$$.

Define a probability distribution $$$\Pr$$$ on each neighbourhood of a vertex.

In other words, $$$\Pr : E \to \mathbb{R}_{\geq 0}$$$ is a function that satisfies the following constraint

- for any $$$u \in V$$$, where $$$N(u) \neq \emptyset$$$,

We want to solve the following classical problem:

- Start at a node $$$u$$$

- Choose $$$v \in N(u)$$$ with probability $$$w(u, v)$$$.

- Walk through the edge $$$(u, v)$$$, i.e. set $$$u := v$$$.

- Terminate if $$$u \in S$$$, otherwise go to step 2.

We want the expected sum of weights of edges.

To state that problem formally, we can define for any path $$$p$$$

In other words, if we let $$$F_u = 0$$$ when $$$u \in S$$$, and when $$$u \notin S$$$, we have

we want to calculate $$$F$$$. We can do that by showing that for all $$$u \in V$$$,

So, that we can compute $$$F$$$ as follows

- Initialize $$$F_u$$$ to $$$0$$$ for all $$$u \in V$$$

- Let $$$L$$$ be a topological order of $$$G$$$ in reverse.

- For each $$$u \in L$$$, if $$$u \notin S$$$:

- For each $$$v \in N(u)$$$:

- $$$F_u := F_u + \Pr[u, v] (w(u, v) + F_v)$$$.

To proceed with the proof, we need to prove the following lemma.

Lemma 1. For any $$$u \in V$$$,

Proof. We can define $$$G_u = 1$$$ when $$$N(u) = \emptyset$$$, and otherwise

We have

Thus, the above equations represent a system of equations that has a unique solution $$$G_u = 1$$$ for all $$$u \in V$$$ (the rank of the matrix is clearly $$$|V|$$$ if we order the nodes by topological sorting).

Now, I think you know where I'm going with this:

(Note: if we want the expected sum of values of an array $$$a_u$$$, we can define $$$F_u = a_u$$$ when $$$N(u) = \emptyset$$$ and replace $$$w(u, v)$$$ with $$$a_u$$$).

Abstract Operations

We can replicate the knapsack DP arguments for abstract operations.

Let $$$\otimes$$$ and $$$\odot$$$ be commutative and associative operations over a set $$$R$$$ where for any $$$a, b, c \in R$$$, we have

You can think of $$$\otimes$$$ as the multiplication $$$\times$$$, and $$$\odot$$$ as addition $$$+$$$.

Moreover, let $$$0_\odot \in R$$$ be the identity of the operation $$$\odot$$$ (similar for $$$0$$$ for addition) and $$$1_\otimes$$$ is the identity of the operation $$$\otimes$$$ (similar for $$$1$$$ for multiplication).

In other words, for all $$$a \in R$$$

$$$0_\odot \odot a = a$$$, and

$$$1_\otimes \otimes a = a$$$.

We also require the additional assumption that for all $$$a \in R$$$,

(In formal terms, $$$(R, \odot, \otimes)$$$ is a commutative semiring).

Now, suppose we redefine the weight function $$$w$$$ so that $$$w : E \to R$$$. Moreover, for any path $$$p$$$, we can define

Fix $$$S \subseteq V$$$ (as usual). Let $$$P_u$$$ denote all paths starting from $$$u$$$ to a node $$$s \in S$$$. We want to find for each $$$u \in V$$$

Let $$$T_u = 1_\otimes$$$ if $$$u \in S$$$ and $$$0_\odot$$$ otherwise.

We claim that

and so we can use the following algorithm to compute $$$F$$$:

- Initialize $$$F$$$ to $$$0_\odot$$$.

- For each $$$s \in S$$$, set $$$F_s := 1_\otimes$$$.

- Let $$$L$$$ be a topological sorting of $$$V$$$ in reverse order.

- For each $$$u \in L$$$:

- For each $$$v \in N(u)$$$:

- $$$F_u = F_u \odot (w(u,v) \otimes F_v)$$$.

- For each $$$v \in N(u)$$$:

We can prove the recursive formula in exactly the same way: for $$$u \notin S$$$,

Exercies:

Problem 1. given a weighted graph with $$$n \leq 20$$$ vertices, and a source node $$$s$$$, with weights $$$0 \leq w(u, v) \lt 10^9 + 7$$$ for $$$(u, v) \in E$$$.

Let $$$P$$$ be the set of all hamiltonian paths starting from $$$s$$$, and let $$$w(p)$$$ be the product of weights over all edges on the path $$$p$$$.

Find

modulo $$$10^9 + 7$$$.

Problem 2. given positive integers $$$N, M \leq 10^3$$$, and an array of positive integers $$$a_1, a_2, \dots, a_N \leq 10^9$$$.

Let $$$\mathcal{S}$$$ be the set of all subsets of $$$S \subseteq \{ 1, 2, \dots, N \}$$$ where

is divisible by $$$M$$$.

Moreover, for any subset $$$S \in \mathcal{S}$$$, define $$$A(S)$$$ to be the bitwise AND of all $$$a_i$$$ for $$$i \in S$$$.

Find