Do you know the monotonic stack? You’ll learn something easier to understand with shorter code!

We’re going to discuss a common fundamental problem that you may face as part of other problems. The Next Smaller Element (NSE) problem.

Problem Statement

Given an array $$$a$$$, for each index $$$i$$$, find $$$next_i$$$, which is the first index to the right of $$$i$$$ that is smaller than $$$a_i$$$.

Example

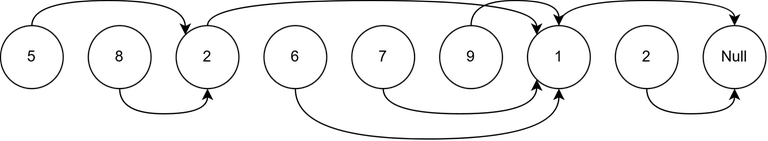

Let's say $$$a = [5, 8, 2, 6, 7, 9, 1, 2]$$$. The output (zero-based) is $$$next = [2, 2, 6, 6, 6, 6, 7, 7]$$$.

NSJ Algorithm

Let’s begin with a simple, non-optimal solution.

for (int i = n - 1; i >= 0; --i) {

next[i] = i + 1;

while (next[i] != n && a[i] <= a[next[i]])

++next[i];

}

The time complexity of the above code is $$$\mathcal{O}(n^2)$$$. What if I reduce it to $$$\mathcal{O}(n)$$$ by changing only one line?

Think before you continue...

For a better understanding, check out the GIF below:

Trying to generate counterexamples? I did a lot at the beginning, too. Let’s prove it.

Why the next values are correct

We know that $$$next_i$$$ is either $$$n$$$ or $$$a_{next_i} \lt a_i$$$. So we need to prove that it really is the first smaller index—i.e., no $$$j$$$ with $$$i \lt j \lt \text{next}_i$$$ has $$$a_j \lt a_i$$$. Let’s define $$$nextChain_i$$$ to be a sequence of indices we see in our while loop to finally find the $$$next_i$$$. Formally, $$$nextChain_{i, 0} = i + 1$$$, $$$nextChain_{i, k} = next_{nextChain_{i, k - 1}}$$$. The sequence continues until $$$a_i \gt a_{nextChain_{i, k}}$$$.

Consider there exists a $$$j$$$ s.t. $$$i \lt j \lt next_i$$$ where $$$a_j \lt a_i$$$. One can simply observe that $$$j$$$ can not be contained in the $$$nextChain$$$, because the algorithm will stop at that. So there exists a $$$k$$$ where $$$nextChain_{i, k - 1} \lt j \lt nextChain_{i, k}$$$. As we know $$$a_j \lt a_i \lt a_{nextChain_{i, k - 1}}$$$, it follows that $$$next_{nextChain_{i, k - 1}} = j$$$. This is a contradiction, so no such $$$j$$$ exists—and our argument is complete.

Why this is O(n)

Lemma

Consider the line next[i] = next[next[i]]. Let k = next[i] (before the update). The pointer next[i] effectively skips over k to point to next[k]. An index k can be skipped over in this manner at most once.

Proof of lemma

Consider two indices $$$i \lt i'$$$ that skip over $$$k$$$ while finding their $$$next$$$. We know $$$a_{i'} \lt a_k$$$ and $$$i' \lt k$$$, so when we were finding $$$next_i$$$, we should reach $$$i'$$$ before we reach $$$k$$$, and then we never meet $$$k$$$. Formally, there is integer $$$l$$$ where $$$nextChain_{i, l} \lt i' \lt nextChain_{i, l + 1}$$$. Given $$$a_{i'} \lt a_k \lt nextChain_{i, l + 1}$$$, $$$next_{nextChain_{i, l}}$$$ is not $$$nextChain_{i, l + 1}$$$ because there is at least an element ($$$a_{i'}$$$) which is smaller than $$$a_{nextChain_{i, l}}$$$ and it's nearer to $$$nextChain_{i, l}$$$ than $$$nextChain_{i, l + 1}$$$. This is a contradiction, and our argument is proven.

Now, with the lemma, we know the number of times next[i] = next[next[i]] happens is $$$\mathcal{O}(n)$$$, so the total time complexity is $$$\mathcal{O}(n)$$$.

Shorter proofs are welcome!

Why This Was Undiscovered?

The NSE problem is very popular and fundamental, I think I faced it 50 times over the past 13 years since I started CP. But I never saw anyone know NSJ. I checked dozens of submissions on Codeforces and LeetCode—none used the NSJ trick. I also queried several AI tools to see whether this algorithm had been used or discovered anywhere and found out it is used in this paper to solve another problem. However, it hasn’t become popular enough in the community to replace the monotonic stack. Why do you think that is?

I think the reason is that people try to learn from others instead of discovering things on their own. If you tried finding a solution for the NSE problem, what was the probability that you ended up with monotonic stack? How about NSJ?

This again confirms my teaching method slogan: "Be a fellow traveller, not a travel leader" (see my profile picture, and check out more here).

Problems for practicing:

- CSES — Nearest Smaller Values Python Solution C++ Solution

- HackerRank — The Next Greater Element

- LeetCode — Final Prices With a Special Discount in a Shop

- Codeforces — 547B

- CodeChef — COCU3

- Codeforces — 675E

Update (June 10): I know monotonic stack has other applications. But NSJ seems like a magic that you think gets TLE, but it works, and it's worth learning.

What you have described is just monotonic stack without the stack.

$$$\text{next[i]}$$$ is just what is on top of the stack right before you push $$$i$$$ to it, the repeated assignment $$$\text{next}[i] = \text{next[next[i]]}$$$ is equivalent to poping the stack to find the correct elements.

They are solving the same problem, they are both trying to find next[i]. The difference is the way they find next[i]. A person who doesn't even know stack can understand NSJ, but they can't understand the monotonic stack. That's the difference.

I think neither methods are particularly hard to understand, in fact you kind of need to use the definition to prove the correctness monotonic stack. If you have the monotonic stack knowledge, proving this is trivia. In fact, when I first learned monotonic stack, I was taught this method and the proof is just that it's equivalent to monotonic stack.

As for why this is not popular, I think it has to do with monotonic stack being more diverse while not really harder to implement. For some problem, $$$\text{next[i]}$$$ is needed in multiple places, so this might be better than implementing a stack just to extract $$$\text{next[i]}$$$. However, if you only need the value as you are iterating, then monotonic stack save memory. If you want to, you can also perform binary search on the stack to obtain result like smallest value in a range $$$[l, r]$$$, as you are iterating $$$l$$$ or $$$r$$$.

not exactly for the NSE problem, but i have used a similar technique before on this problem

Code: 260593506

The proof of why this works is basically the monotonic stack technique itself. It is much more worth it to learn monotonic stack than this specific trick; it is still more general, and proving its correctness and time complexity is much simpler for beginners.

iasmimqf this is exactly the technique you used to solve the nearest smaller value problem when I showed it to you. I'll still call it "Iasmim technique" :)

I am sure it is little-known but definitely not new. I am personally using this for years instead of stack version and do not even remember how I know about it.

I learned this from tmw's code 306423247.

Egocentric?

It's a nice way to look on it, but "monotonic stack is useless" is a bit of an overstatement. Sometimes you need to do binary search on intermediate states of the stack, and also monotonic stack is still a good stepping stone and foundation for developing monotonic queue.

I agree with you.

The $$$next[i] = next[ next[i] ]$$$ trick and proof can be used to solve JOI Long Mansion

Once you have the

nextarray, you can calculateprev[i]= closest guy beforeiand smaller-equal toa[i]like:Then

a[i]is the min value in subarray[prev[i]+1, next[i]-1]Also you can calculate Cartesian tree like

me like stacks