Hello old friends!

I recently decided to run an experiment: could I build an entire programming challenges platform using nothing but an AI assistant?

This post covers how that went, what I ended up with, and a few “you can’t make this up” moments along the way. You can check it out here (and solve problems in the ongoing contest) — feedback is welcome.

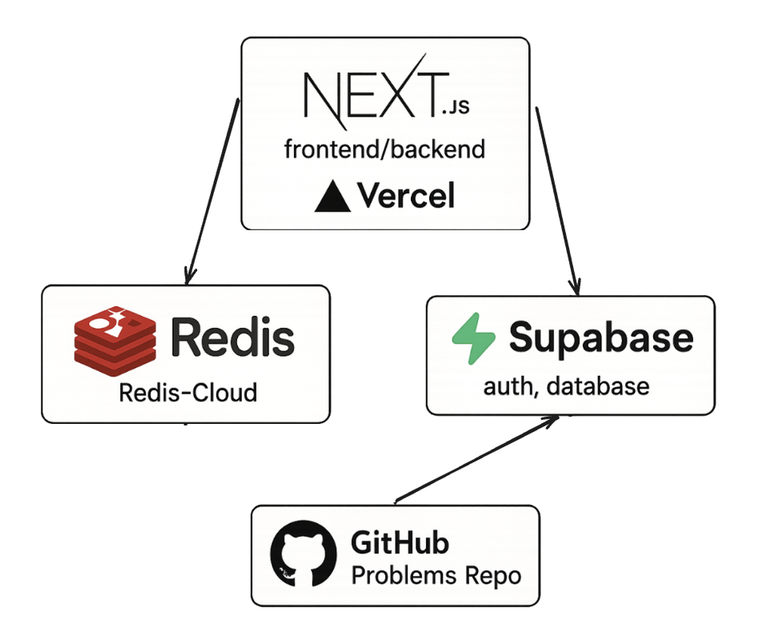

Final architecture

- Next.js frontend + backend on Vercel

- Supabase Postgres for Users, Contests, Problems (including input/output files), and Submissions

- Private GitHub repo for problem development, plus a script to upsert them into the DB

- Redis cluster on Redis-Cloud for leaderboards and caching

Development anecdotes

Day 1: instant success → instant disaster

July 28. I open Cursor IDE, set it to claude-4-sonnet, and say: “Make me a programming contest site with multiple contests, output-based submissions (no code execution), scalable to hundreds of people.”

Minutes later, it spits out a seemingly working site: contest list, problem pages with download/upload buttons, and a leaderboard. I’m amazed.

Then I ask it to “clean something up” before I commit to Git. It deletes the entire source code. Not kidding — I even saw it panic in its “thoughts” log when I asked why. Too late.

Rebuild: the honeymoon’s over

I rerun the same prompt… and the site looks worse. A lot worse. Leaderboards are just static pages. Output checkers are on the client side. “Login” is literally a toggle button. To be fair, I have no idea if everything worked on the old page either. We'll never know.

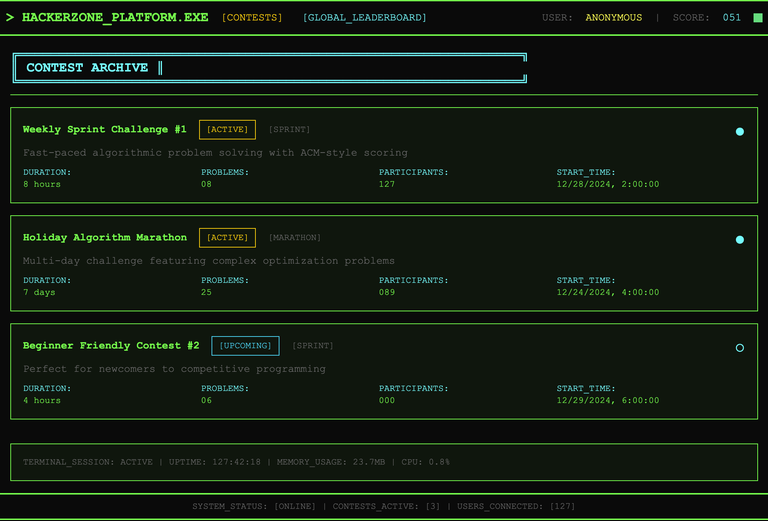

This is a snapshot from the first GitHub commit:

The styling was equally cursed. It made my leaderboard name orange, then picked an almost identical color for the highlight — so my name disappeared entirely. Basically, anything it couldn’t verify with a test was suspect.

Leaderboards from hell

Cursor swore its DB was optimized for hundreds of users. Turns out its “optimization” was: whenever someone submits, delete all leaderboard data, rescore every submission, rebuild the ranking. I moved leaderboards to Redis before it could melt.

I briefly considered async updates via a queue (leaderboard can be eventually consistent), but the added complexity didn’t feel worth it. Redis updates happen inline now.

Getting Cursor to actually implement leaderboard logic was painful. Wrong-attempt counting, penalty times, optimization problem scoring — it kept getting the rules wrong. I ended up with a giant suite of unit tests that ran on every small change just to keep it from breaking things.
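To give a sense of the kind of rule that kept breaking, here is a minimal sketch of ICPC-style scoring packed into a single sortable number, the sort of value one might store as a Redis sorted-set score. The 20-minute penalty, the `ProblemResult` shape, and the function names are all assumptions for illustration; the platform's actual rules and code aren't shown in this post.

```typescript
// Hypothetical constants: the post does not state the real penalty rules.
const PENALTY_PER_WRONG_ATTEMPT_MIN = 20;
// Must be larger than any total penalty a user could ever accumulate.
const MAX_PENALTY_MIN = 1_000_000;

interface ProblemResult {
  solved: boolean;
  solvedAtMinute: number; // minutes since contest start; ignored if unsolved
  wrongAttemptsBeforeSolve: number;
}

// Penalty counts only for solved problems: solve time plus a fixed
// charge for each wrong attempt made before the accepted one.
function totalPenalty(results: ProblemResult[]): number {
  return results
    .filter((r) => r.solved)
    .reduce(
      (sum, r) =>
        sum +
        r.solvedAtMinute +
        r.wrongAttemptsBeforeSolve * PENALTY_PER_WRONG_ATTEMPT_MIN,
      0
    );
}

// Higher is better: more solved problems always wins, and among users
// with the same solved count, the smaller penalty gives the larger score.
function leaderboardScore(results: ProblemResult[]): number {
  const solvedCount = results.filter((r) => r.solved).length;
  return solvedCount * MAX_PENALTY_MIN - totalPenalty(results);
}
```

With this encoding, a wrong attempt on a problem that is never solved leaves the score unchanged, and a wrong attempt before an accepted one can only lower it. Pinning both properties down in unit tests is exactly the kind of check that kept regressions out.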

The Upstash facepalm

Cursor suggested Upstash (free 100K requests/month). I hooked it up… then realized:

- No persistent connections — every Redis call is a REST API call with extra latency.

- One leaderboard page load makes dozens of calls.

Yeah, no. Switched to Redis-Cloud. Still no clue why it thought Upstash was a good fit.
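A back-of-the-envelope sketch of why this hurts, assuming the calls are issued one after another. The call count and round-trip times below are placeholders I picked for illustration, not measurements from the platform.

```typescript
// Time spent just waiting on the network when calls run sequentially.
function sequentialWaitMs(calls: number, roundTripMs: number): number {
  return calls * roundTripMs;
}

// Placeholder values, not measured: "dozens" of calls per page load,
// a slow-ish HTTPS round trip vs. a fast persistent connection.
const CALLS_PER_PAGE = 36;
const REST_ROUND_TRIP_MS = 50;
const PERSISTENT_ROUND_TRIP_MS = 1;

const restWaitMs = sequentialWaitMs(CALLS_PER_PAGE, REST_ROUND_TRIP_MS);
const persistentWaitMs = sequentialWaitMs(CALLS_PER_PAGE, PERSISTENT_ROUND_TRIP_MS);

console.log(`REST: ${restWaitMs} ms, persistent: ${persistentWaitMs} ms`);
```

Whatever the real numbers are, the per-call overhead gets multiplied by the number of calls, so it dominates the page load long before Redis itself becomes the bottleneck.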

Security fun

Supabase’s anonymous key has to be public for client access. But the production dashboard immediately warned me: without Row Level Security (RLS), this means anyone could run:

```js
// SUPABASE_URL and ANON_KEY are both visible to any client of the site.
fetch(`${SUPABASE_URL}/rest/v1/submissions?id=neq.fake`, {
  method: 'DELETE',
  headers: {
    'apikey': ANON_KEY,
    'Authorization': `Bearer ${ANON_KEY}`
  }
})
```

...and nuke all submissions. Guess who had no RLS? :D

Final thoughts

~55 hours of Cursor time, ~$30 in Enterprise fees, and zero frontend experience later, I’ve got a working (and maybe scalable) contest platform.

In terms of cost, the current architecture can be free, but not for long:

- Vercel allows 1M "function invocations" per month on the free tier. Any API call or SSR page load is a function invocation, and I can reasonably expect a user to make hundreds of these during a contest. To scale beyond 1M calls, you gotta pay $20 per month + $0.60 per additional 1M calls.

- Redis-Cloud offers a tiny instance (30MB RAM/dataset size) for free; a 1GB RAM instance costs something like $5/month

- Supabase offers 500MB database for free; for $25/month you get 8GB and other perks

So running this architecture at scale would cost over $50/month (and if it really took off, it would be prohibitively expensive because of all these pay-for-what-you-use providers).
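For the curious, here is the arithmetic as a small sketch, using only the prices quoted in the list above. It assumes all three paid tiers are needed at once and that the $0.60 applies per million invocations beyond the first million; the constant and function names are mine, and the Redis-Cloud figure is the "something like $5" estimate.

```typescript
// All amounts are in US cents to avoid floating-point drift.
const VERCEL_FREE_INVOCATIONS_M = 1; // millions of invocations before overage
const VERCEL_BASE_CENTS = 2000; // $20/month
const VERCEL_PER_EXTRA_MILLION_CENTS = 60; // $0.60 per additional 1M calls
const REDIS_1GB_CENTS = 500; // "something like $5/month"
const SUPABASE_PAID_CENTS = 2500; // $25/month

// Monthly cost once every provider is on its paid tier.
function paidMonthlyCostCents(invocationsMillions: number): number {
  const extraMillions = Math.max(0, invocationsMillions - VERCEL_FREE_INVOCATIONS_M);
  const vercel =
    VERCEL_BASE_CENTS + Math.round(extraMillions * VERCEL_PER_EXTRA_MILLION_CENTS);
  return vercel + REDIS_1GB_CENTS + SUPABASE_PAID_CENTS;
}

function formatDollars(cents: number): string {
  return `$${(cents / 100).toFixed(2)}`;
}

console.log(formatDollars(paidMonthlyCostCents(1))); // $50.00
console.log(formatDollars(paidMonthlyCostCents(3))); // $51.20
```

The fixed fees dominate at this scale: $50/month is the floor, and each extra million invocations adds only $0.60 on top.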

Future

Assuming there is interest, I plan to host some contests on the platform — e.g., replay Google Code Jam rounds, bring problems from some old obscure contests most of you have never seen, and maybe even host an original round. Surely it can't get too popular :P

Downvoted

Upvoted (blog not the comment)

me too (your comment)

This is pretty amazing to see, great job!! Write another blog when you host an original contest, I'd love to try it out :)

Thank you!

There is actually an active contest running with some mildly interesting puzzles (I was hoping to get some testing out of this announcement!). I can announce when I conduct another one, with more interesting challenges.

cool!

This is amazing! Can you share the repository?

So there are two reasons why I can't share it:

Sorry!

I also created a code submission and evaluation system, but by myself, not with AI. You can check it out here: https://github.com/MrXaid/OnlineJudge. It currently has no contest feature, because I couldn't think of an efficient way to do the leaderboard and stuff. Maybe I will do it using some temporary database.

The first contest on the platform has concluded! Thank you to the few Codeforces users who submitted solutions; it even helped find yet another Redis leaderboard bug. (If you're curious: every WA was removing penalty time from the ZSET score, pulling the user to the top of the list of people who had the same number of problems.)

Next, I wanna run a daily contest — similar to AoC, it will run for several days, and a couple new problems will be revealed every day. Something around September 3-10. Could y'all help me choose the time of day by upvoting comments in this thread?

Edit: when you downvote this comment, don't forget to upvote your preferred time ;(

America morning, Europe evening

America evening, Europe morning

Downvote this for karmic balance