UPD: The ratings have finally been rolled back. Cheers!

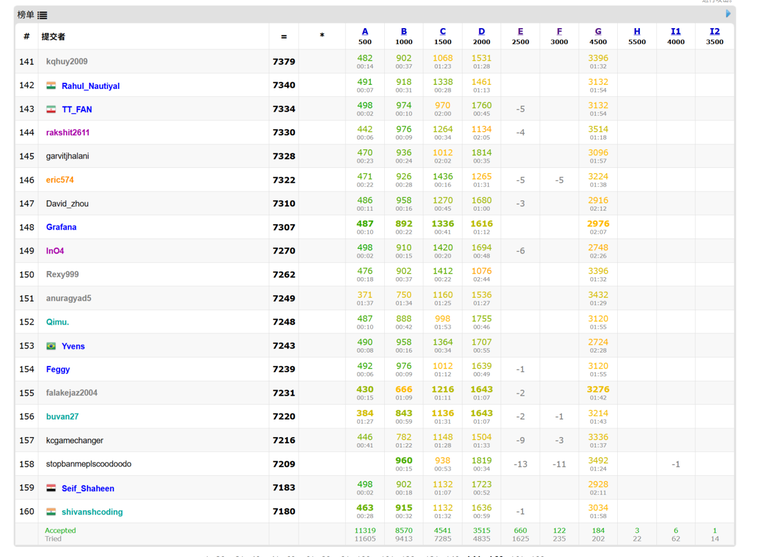

Today’s Global Round 29 felt fundamentally broken. Problem G was tailor-made for language models: a math task where a fluent explanation and a few lines of code could be produced on demand. The result was a scoreboard flooded with sudden G solves from accounts that otherwise struggled, while many honest Div.1 regulars—who actually reasoned through the problem—were pushed down. That isn’t competition; it’s a prompt-engineering race.

The damage is real: unfair rating shifts, polluted editorials, and a growing sense that integrity checks aren’t keeping up—especially when AI can generate proof-like text and lightly paraphrased code that slips past plagiarism filters. If Codeforces wants to protect the competitive spirit, we need tougher post-contest verification (e.g., short solution justifications for late problems), targeted rejudges with hidden variants, stricter account/device controls, clearer and harsher penalties for AI-assisted cheating, and more problems emphasizing interaction, constructions, or rigid invariants that resist autocompletion.

Practice rooms can embrace tools; contests cannot.