A. Find Min Operations

By nishkarsh

How many operations will use $$$x = 0$$$ (that is, subtract $$$k^0 = 1$$$)?

Try $$$k = 2$$$.

For $$$k = 2$$$, the answer is the number of ones in the binary representation of $$$n$$$.

If $$$k$$$ is 1, we can only subtract 1 in each operation, so the answer is $$$n$$$.

Otherwise, first note that we must apply at least $$$n \bmod k$$$ operations that subtract $$$k^0$$$, because every other operation leaves $$$n \bmod k$$$ unchanged. Once $$$n$$$ is divisible by $$$k$$$, solving the problem for $$$n$$$ is equivalent to solving it for $$$\frac{n}{k}$$$: subtracting $$$k^0$$$ again is useless, because $$$n \bmod k$$$ then becomes $$$k-1$$$ and we need $$$k-1$$$ more such operations to make $$$n$$$ divisible by $$$k$$$ again (the final result, 0, is divisible by $$$k$$$). Those $$$k$$$ operations of subtracting $$$k^0$$$ can be replaced by a single operation subtracting $$$k^1$$$.

So, our final answer becomes the sum of the digits of $$$n$$$ in base $$$k$$$.

The complexity of the solution is $$$O(\log_{k}{n})$$$ per testcase.

```cpp
#include <bits/stdc++.h>
using namespace std;

// Minimum number of operations = sum of the digits of n in base k.
int find_min_oper(int n, int k){
    if(k == 1) return n; // only 1 can be subtracted
    int ans = 0;
    while(n){
        ans += n%k;
        n /= k;
    }
    return ans;
}

int main()
{
    int t;
    cin >> t;
    while(t--){
        int n,k;
        cin >> n >> k;
        cout << find_min_oper(n,k) << "\n";
    }
    return 0;
}
```
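The digit-sum claim can be sanity-checked on small inputs. The sketch below is not part of the official solution: `brute_force_answer` and `methods_agree` are hypothetical helper names, and the brute force is a simple dynamic program that is only practical for small $$$n$$$.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Digit sum of n in base k (k >= 2); for k == 1 the answer is n itself.
long long digit_sum_answer(long long n, long long k) {
    assert(n >= 0 && k >= 1);
    if (k == 1) return n;
    long long ans = 0;
    while (n > 0) {
        ans += n % k;
        n /= k;
    }
    return ans;
}

// Reference answer: best[v] = fewest subtractions of powers of k that
// bring v down to 0. Only practical for small n.
long long brute_force_answer(int n, int k) {
    assert(n >= 0 && k >= 1);
    std::vector<long long> best(n + 1, 0);
    for (int v = 1; v <= n; v++) {
        best[v] = best[v - 1] + 1; // subtract k^0 = 1
        if (k > 1) {
            for (long long p = k; p <= v; p *= k)
                best[v] = std::min(best[v], best[v - p] + 1);
        }
    }
    return best[n];
}

// True if both methods agree for all 1 <= n <= max_n and 1 <= k <= max_k.
bool methods_agree(int max_n, int max_k) {
    for (int k = 1; k <= max_k; k++)
        for (int n = 1; n <= max_n; n++)
            if (digit_sum_answer(n, k) != brute_force_answer(n, k)) return false;
    return true;
}
```

For example, $$$100 = 10201_3$$$, so `digit_sum_answer(100, 3)` is $$$1+0+2+0+1 = 4$$$.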

B. Brightness Begins

By nishkarsh

The final state of the $$$i$$$-th bulb (on or off) is independent of $$$n$$$.

The final state of the $$$i$$$-th bulb tells us about the parity of the number of divisors of $$$i$$$.

For any bulb $$$i$$$, its final state depends on the parity of the number of divisors of $$$i$$$. If $$$i$$$ has an even number of divisors, then bulb $$$i$$$ will be on; otherwise it will be off. In other words, bulb $$$i$$$ is on exactly when $$$i$$$ is not a perfect square. So now the problem is to find the $$$k$$$-th number which is not a perfect square. This can be done by binary searching for the smallest $$$n$$$ such that $$$n - \lfloor \sqrt{n} \rfloor = k$$$, or by the direct formula $$$n = \lfloor k + \sqrt{k} + 0.5 \rfloor$$$.

For a proof of the direct formula, you can refer to this book, page 141, E18.

```cpp
// Binary search on n.
#include <bits/stdc++.h>
using namespace std;

int main(){
    int t;
    cin >> t;
    while(t--){
        long long k, l = 1, r = 2e18;
        cin >> k;
        while(r-l > 1){
            long long mid = (l+r)>>1;
            long long n = mid - int(sqrtl(mid));
            if(n >= k) r = mid;
            else l = mid;
        }
        cout << r << "\n";
    }
    return 0;
}
```

```cpp
// Direct formula.
#include <bits/stdc++.h>
using namespace std;

int main(){
    int t;
    cin >> t;
    while(t--){
        long long k;
        cin >> k;
        cout << k + int(sqrtl(k) + 0.5) << "\n";
    }
    return 0;
}
```
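Since floating-point square roots are a common source of wrong answers in this problem, here is a minimal sketch of the direct formula that uses only an integer-corrected square root. The helper names `isqrt`, `kth_non_square` and `kth_non_square_slow` are assumptions made for illustration; they do not appear in the official solutions.

```cpp
#include <cassert>
#include <cmath>

// Floor of the square root, corrected with integer arithmetic so that
// floating-point rounding cannot leave the result off by one.
long long isqrt(long long x) {
    assert(x >= 0);
    long long r = (long long)std::sqrt((long double)x);
    while (r * r > x) r--;
    while ((r + 1) * (r + 1) <= x) r++;
    return r;
}

// The k-th positive integer that is not a perfect square,
// i.e. k + floor(sqrt(k) + 0.5) evaluated without floating-point rounding.
long long kth_non_square(long long k) {
    assert(k >= 1);
    long long m = isqrt(k);
    // sqrt(k) rounds up to m + 1 exactly when sqrt(k) > m + 0.5,
    // which for integer k means k > m^2 + m.
    if (k > m * m + m) m++;
    return k + m;
}

// Reference: walk through the integers and skip perfect squares.
long long kth_non_square_slow(long long k) {
    long long n = 0, seen = 0;
    while (seen < k) {
        n++;
        long long r = isqrt(n);
        if (r * r != n) seen++;
    }
    return n;
}
```

The comparison `k > m * m + m` replaces the `+ 0.5` rounding step, so the result does not depend on how the square root is rounded.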

C. Bitwise Balancing

By P.V.Sekhar

Try to find some independent operations/combinations.

The expression is independent for each digit in binary representation

The first observation is that the expression $$$a$$$|$$$b$$$ - $$$a$$$&$$$c = d$$$ is bitwise independent. That is, the combination of a tuple of bits of $$$a, b, and\ c$$$ corresponding to the same power of 2 will only affect the bit value of $$$d$$$ at that power of 2 only.

This is because:

We are performing subtraction, so extra carry won't be generated to the next power of 2.

Any tuple of bits of $$$a$$$, $$$b$$$, and $$$c$$$ corresponding to the same power of 2 won't result in -1 for that power of 2. As that would require $$$a|b$$$ to be zero and $$$a$$$&$$$c$$$ to be one. The former condition requires the bit of $$$a$$$ to be zero, while the latter requires it to be one, which is contradicting.

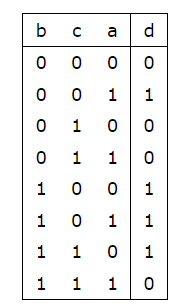

Now, let us consider all 8 cases of bits of a, b, c and the corresponding bit value of d in the following table:

So, clearly, for different bits of $$$b, c,$$$ and $$$d$$$, we can find the value of the corresponding bit in $$$a$$$, provided it is not the case when bit values of $$$b, c,$$$ and $$$d$$$ are 1,0,0 or 0,1,1, which are not possible; so, in that case, the answer is -1.
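The per-bit case analysis can be reproduced by brute force. The sketch below is an illustration rather than the official solution: `solve_bit` and `truth_table` are hypothetical helper names, and when both values of the bit of $$$a$$$ work, `solve_bit` simply returns the smaller one.

```cpp
#include <cassert>
#include <string>

// For single bits b, c, d (each 0 or 1), returns a bit a with
// (a | b) - (a & c) == d, or -1 if neither value of a works.
int solve_bit(int b, int c, int d) {
    assert(b >= 0 && c >= 0 && d >= 0 && (b | c | d) <= 1);
    for (int a = 0; a <= 1; a++)
        if ((a | b) - (a & c) == d) return a;
    return -1;
}

// Builds the 8-row table as text, one "b c d -> a" line per combination.
std::string truth_table() {
    std::string out;
    for (int b = 0; b <= 1; b++)
        for (int c = 0; c <= 1; c++)
            for (int d = 0; d <= 1; d++) {
                int a = solve_bit(b, c, d);
                out += std::to_string(b) + " " + std::to_string(c) + " " +
                       std::to_string(d) + " -> " +
                       (a < 0 ? std::string("impossible") : std::to_string(a)) + "\n";
            }
    return out;
}
```

Only the combinations $$$(b, c, d) = (1, 0, 0)$$$ and $$$(0, 1, 1)$$$ come out as impossible, matching the statement above.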

```cpp
#include <bits/stdc++.h>
#define ll long long
using namespace std;

void solve() {
    ll a = 0, b, c, d, pos = 1, bit_b, bit_c, bit_d, mask = 1;
    cin >> b >> c >> d;
    for (ll i = 0; i < 62; i++) {
        if (b&mask) bit_b = 1;
        else bit_b = 0;
        if (c&mask) bit_c = 1;
        else bit_c = 0;
        if (d&mask) bit_d = 1;
        else bit_d = 0;
        // The two impossible combinations of (b, c, d) bits: 1,0,0 and 0,1,1.
        if ((bit_b && (!bit_c) && (!bit_d)) || ((!bit_b) && bit_c && bit_d)) {
            pos = 0;
            break;
        }
        if (bit_b && bit_c) {
            a += (1ll-bit_d)*mask;
        } else {
            a += bit_d*mask;
        }
        mask<<=1;
    }
    if (pos) {
        cout << a << "\n";
    } else {
        cout << -1 << "\n";
    }
}

int main() {
    ll t;
    cin >> t;
    while (t--) {
        solve();
    }
}
```

D. Connect the Dots

By P.V.Sekhar

The value of $$$d$$$ is very small.

Try using Disjoint Set Union (DSU).

The main idea is to take advantage of the low upper bound of $$$d_i$$$ and apply the Disjoint Set Union.

We will consider $$$dp[j][w]$$$, the number of ranges (triplets with $$$d_i = w$$$) that contain node $$$j$$$ and for which $$$j$$$ is not the last point $$$a_i + k_i \cdot d_i$$$, and $$$id[j][w]$$$, the node that currently represents the connected component containing node $$$j$$$.

The size of both $$$dp$$$ and $$$id$$$ is $$$\max_j \cdot \max_w = n \cdot 10 = 10n$$$.

We will maintain two other arrays, $$$Start\_cnt[j][w]$$$ and $$$End\_cnt[j][w]$$$, which store the number of triplets with $$$d_i = w$$$ that have $$$j$$$ as $$$a_i$$$ and as $$$a_i + k_i \cdot d_i$$$, respectively. They help us track where ranges begin and end.

We will now apply Disjoint Set Union. For the $$$i$$$-th triplet, we assume $$$a_i$$$ will be the parent node of the set created by $$$a_i$$$, $$$a_i + d_i$$$, ..., $$$a_i + k_i \cdot d_i$$$.

The transitions of $$$dp$$$ are as follows:

1) if $$$j \gt 10$$$ (max of $$$d_i$$$): for all $$$w$$$, $$$dp[j][w]$$$ is the same as $$$dp[j-w][w]$$$, up to some possible changes. These changes are due to $$$j$$$ being the start or the end of some triplet with $$$d_i = w$$$. So, let us start with $$$dp[j][w] = Start\_cnt[j][w] - End\_cnt[j][w]$$$. If $$$dp[j-w][w]$$$ is non-zero, then perform a union operation (of DSU) between node $$$j$$$ and $$$id[j-w][w]$$$, increment $$$dp[j][w]$$$ by $$$dp[j-w][w]$$$, and assign $$$id[j][w] = id[j-w][w]$$$. This unites the ranges over node $$$j$$$.

2) if $$$j \le 10$$$ (max of $$$d_i$$$): we do the same as above, but instead of all $$$w$$$ we restrict ourselves to $$$w$$$ from $$$1$$$ to $$$j-1$$$, so that $$$j-w$$$ is a valid node.

The net time complexity = updating $$$dp[j][w]$$$ from $$$Start\_cnt$$$ and $$$End\_cnt$$$ + union operations between $$$id[j-w][w]$$$ and $$$j$$$ over all $$$w$$$ + incrementing $$$dp$$$ values (by $$$dp[j-w][w]$$$) + copying $$$id$$$ values = $$$10n + 10n \log n + 10n + 10n = 10n \log n + 30n$$$ (in the worst case) = $$$O(\max_d \cdot n \log n)$$$.

```cpp
#include <bits/stdc++.h>
#define ll long long
using namespace std;

const ll N = 2e5+2;
const ll C = 10 + 1;

vector<ll> par(N), sz(N, 0);
vector<vector<ll>> dp(N, vector<ll> (C, 0)), ind(N, vector<ll> (C, 0)), start_cnt(N, vector<ll> (C, 0)), end_cnt(N, vector<ll> (C,0));

ll find_par(ll a) {
    if (par[a] == a) return a;
    return par[a] = find_par(par[a]);
}

void unite(ll a, ll b){
    a = find_par(a), b = find_par(b);
    if (a == b) return;
    if (sz[b] > sz[a]) swap(a, b);
    sz[a] += sz[b];
    par[b] = a;
}

void reset(ll n) {
    for (ll i = 1; i <= n; i++) {
        par[i] = i;
        sz[i] = 1;
        for (ll j = 1; j < C; j++) {
            dp[i][j] = start_cnt[i][j] = end_cnt[i][j] = 0;
            ind[i][j] = i;
        }
    }
}

void solve() {
    ll n, m, a, d, k;
    cin >> n >> m;
    reset(n);
    for (ll i = 0; i < m; i++) {
        cin >> a >> d >> k;
        start_cnt[a][d]++;
        end_cnt[a + k * d][d]++;
    }
    for (ll i = 1; i <= n; i++) {
        for (ll j = 1; j < C; j++) {
            dp[i][j] = start_cnt[i][j] - end_cnt[i][j];
            if (i-j < 1) continue;
            // A range with step j passes through node i-j and continues to i.
            if (dp[i-j][j]) {
                unite(ind[i-j][j], i);
                ind[i][j] = ind[i-j][j];
                dp[i][j] += dp[i-j][j];
            }
        }
    }
    ll ans = 0;
    for (ll i = 1; i <= n; i++) {
        if (find_par(i) == i) ans++;
    }
    cout << ans << "\n";
}

int main() {
    ll t;
    cin >> t;
    while (t--) {
        solve();
    }
}
```

E. Expected Power

By nishkarsh

Try to find the expected value of $$$f(S)$$$ rather than $$$(f(S))^2$$$.

Write the binary representation of $$$f(S)$$$ and find $$$(f(S))^2$$$.

Let the binary representation of $$$Power = f(S)$$$ be $$$b_{20}b_{19} \ldots b_{0}$$$. Then $$$Power^2 = \sum_{i=0}^{20} \sum_{j=0}^{20} b_i b_j \cdot 2^{i+j}$$$. By linearity of expectation, it suffices to compute the expected value of $$$b_i b_j$$$ for every pair $$$(i,j)$$$.

We can achieve this by dynamic programming. For each pair $$$(i,j)$$$, there are only 4 possible values of $$$(b_i, b_j)$$$. For each of them we maintain the probability of reaching it while processing the elements one by one; the expected value of $$$b_i b_j$$$ is then the probability of the state $$$(1,1)$$$.

The complexity of the solution is $$$O(n \log^2(\max a_i))$$$.

```cpp
#include <bits/stdc++.h>
using namespace std;

int fast_exp(int b, int e, int mod){
    int ans = 1;
    while(e){
        if(e&1) ans = (1ll*ans*b) % mod;
        b = (1ll*b*b) % mod;
        e >>= 1;
    }
    return ans;
}

const int mod = 1e9+7;
const int bits = 11;

int inv(int n){
    return fast_exp(n,mod-2,mod);
}

const int inverse_1e4 = inv(10000);

// dp[i][j][x][y] = probability that bit i of the xor is x and bit j is y.
int dp[bits][bits][2][2];

void transition(int a, int p){
    p = (1ll*p*inverse_1e4) % mod;
    int negp = (mod+1-p) % mod;
    int bin[bits];
    for(int i = 0; i < bits; i++){
        bin[i] = a&1;
        a >>= 1;
    }
    for(int i = 0; i < bits; i++){
        for(int j = 0; j < bits; j++){
            int temp[2][2];
            for(int k : {0,1}) for(int l : {0,1}) temp[k][l] = (1ll*dp[i][j][k][l]*negp + 1ll*dp[i][j][k^bin[i]][l^bin[j]]*p) % mod;
            for(int k : {0,1}) for(int l : {0,1}) dp[i][j][k][l] = temp[k][l];
        }
    }
}

int main() {
    int t;
    cin >> t;
    while(t--){
        int n;
        cin >> n;
        // std::vector instead of a variable-length array, which is not standard C++.
        vector<int> a(n), p(n);
        for(int i = 0; i < n; i++) cin >> a[i];
        for(int i = 0; i < n; i++) cin >> p[i];
        for(int i = 0; i < bits; i++) for(int j = 0; j < bits; j++) dp[i][j][0][0] = 1;
        for(int i = 0; i < n; i++) transition(a[i],p[i]);
        int ans = 0;
        for(int i = 0; i < bits; i++){
            for(int j = 0; j < bits; j++){
                int pw2 = (1ll<<(i+j)) % mod;
                ans += (1ll*pw2*dp[i][j][1][1]) % mod;
                ans %= mod;
                for(int k : {0,1}) for(int l : {0,1}) dp[i][j][k][l] = 0;
            }
        }
        cout << ans << "\n";
    }
    return 0;
}
```
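To see the pairwise-bit idea without modular arithmetic, here is a minimal sketch that works with plain `double` probabilities and compares the result with direct enumeration of all subsets. The function names and the use of floating point are assumptions made for illustration; the actual problem asks for the answer modulo $$$10^9+7$$$.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Expected value of (xor of chosen elements)^2, where a[t] is chosen
// independently with probability p[t]. Uses the pairwise-bit DP.
double expected_square_pairwise(const std::vector<int>& a,
                                const std::vector<double>& p, int bits) {
    assert(a.size() == p.size());
    double ans = 0;
    for (int i = 0; i < bits; i++)
        for (int j = 0; j < bits; j++) {
            // prob[x][y] = probability that bit i of the xor is x and bit j is y.
            double prob[2][2] = {{1, 0}, {0, 0}};
            for (std::size_t t = 0; t < a.size(); t++) {
                int bi = (a[t] >> i) & 1, bj = (a[t] >> j) & 1;
                double next[2][2];
                for (int x = 0; x < 2; x++)
                    for (int y = 0; y < 2; y++)
                        next[x][y] = prob[x][y] * (1 - p[t]) + prob[x ^ bi][y ^ bj] * p[t];
                for (int x = 0; x < 2; x++)
                    for (int y = 0; y < 2; y++) prob[x][y] = next[x][y];
            }
            ans += prob[1][1] * std::ldexp(1.0, i + j); // E[b_i b_j] * 2^(i+j)
        }
    return ans;
}

// Reference: enumerate all 2^n subsets (small n only).
double expected_square_bruteforce(const std::vector<int>& a,
                                  const std::vector<double>& p) {
    int n = (int)a.size();
    double ans = 0;
    for (int mask = 0; mask < (1 << n); mask++) {
        double pr = 1;
        int x = 0;
        for (int t = 0; t < n; t++) {
            if ((mask >> t) & 1) { pr *= p[t]; x ^= a[t]; }
            else pr *= 1 - p[t];
        }
        ans += pr * x * x;
    }
    return ans;
}
```

For $$$a = [1, 2]$$$ with both probabilities $$$0.5$$$, the four subsets give squares $$$0, 1, 4, 9$$$ with probability $$$0.25$$$ each, so both functions return $$$3.5$$$.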

F. Count Leaves

By nishkarsh

$$$f$$$ satisfies a special property for fixed $$$d$$$.

$$$f$$$ is multiplicative, i.e. $$$f(x \cdot y) = f(x) \cdot f(y)$$$ if $$$x$$$ and $$$y$$$ are coprime, for fixed $$$d$$$.

It can be observed that the number of leaves is equal to the number of ways of choosing integers $$$a_0, a_1, a_2, \ldots, a_d$$$ with $$$a_d = n$$$ and $$$a_i \mid a_{i+1}$$$ for all $$$0 \le i \le d-1$$$.

Let's define $$$g(n) = f(n,d)$$$ for the given $$$d$$$. It can be seen that the function $$$g$$$ is multiplicative, i.e. $$$g(p \cdot q) = g(p) \cdot g(q)$$$ if $$$p$$$ and $$$q$$$ are coprime.

Now, let's try to calculate $$$g(n)$$$ when $$$n$$$ is of the form $$$p^x$$$, where $$$p$$$ is a prime number and $$$x$$$ is any non-negative integer. From the first observation, all the $$$a_i$$$ will be powers of $$$p$$$. Therefore $$$a_0, a_1, a_2, \ldots, a_d$$$ can be written as $$$p^{b_0}, p^{b_1}, p^{b_2}, \ldots, p^{b_d}$$$. Now we just have to ensure that $$$0 \le b_0 \le b_1 \le b_2 \le \ldots \le b_d = x$$$. The number of ways of doing so is $$$\binom{x+d}{d}$$$.

Now, let's make a dp (inspired by the idea of the dp used in fast prime counting) where $$$dp(n,x) = \sum_{i=1,\ spf(i) \ge x}^{n} g(i)$$$. Here $$$spf(i)$$$ means the smallest prime factor of $$$i$$$.
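The divisor-chain description, the multiplicativity of $$$g$$$ and the binomial formula can all be checked on small values. The following sketch is only an illustration: `count_leaves` is a hypothetical brute-force helper that recurses over divisors, far too slow for the real constraints.

```cpp
#include <cassert>

// Number of leaves f(n, d): chains a_0 | a_1 | ... | a_d = n.
// Plain recursion over divisors; intended only for small n and d.
long long count_leaves(long long n, int d) {
    assert(n >= 1 && d >= 0);
    if (d == 0) return 1; // the root itself is the only leaf
    long long total = 0;
    for (long long x = 1; x <= n; x++)
        if (n % x == 0) total += count_leaves(x, d - 1);
    return total;
}

// Binomial coefficient C(n, r), exact for small arguments.
long long binom(int n, int r) {
    if (r < 0 || r > n) return 0;
    long long res = 1;
    for (int i = 1; i <= r; i++) res = res * (n - r + i) / i;
    return res;
}
```

For instance, `count_leaves(8, 2)` equals $$$\binom{3+2}{2} = 10$$$, and `count_leaves(12, 2)` equals `count_leaves(4, 2) * count_leaves(3, 2)` $$$= 6 \cdot 3 = 18$$$.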

Our required answer is $$$dp(n,2)$$$.

The overall complexity of the solution is $$$O(n^{\frac{2}{3}})$$$.

```cpp
#include <bits/stdc++.h>
#define ll long long
#define pb push_back
#define mp make_pair
#define F first
#define S second
#define pii pair<int,int>
#define pll pair<ll,ll>
#define pcc pair<char,char>
#define vi vector <int>
#define vl vector <ll>
#define sd(x) scanf("%d",&x)
#define slld(x) scanf("%lld",&x)
#define pd(x) printf("%d",x)
#define plld(x) printf("%lld",x)
#define pds(x) printf("%d ",x)
#define pllds(x) printf("%lld ",x)
#define pdn(x) printf("%d\n",x)
#define plldn(x) printf("%lld\n",x)
using namespace std;

ll powmod(ll base,ll exponent,ll mod){
    ll ans=1;
    if(base<0) base+=mod;
    while(exponent){
        if(exponent&1)ans=(ans*base)%mod;
        base=(base*base)%mod;
        exponent/=2;
    }
    return ans;
}

ll gcd(ll a, ll b){
    if(b==0) return a;
    else return gcd(b,a%b);
}

const int INF = 2e9;
const ll INFLL = 4e18;
const int small_lim = 1e6+1;
const int mod = 1e9+7;
const int big_lim = 1e3+1;

ll primes_till_i[small_lim];
ll primes_till_bigger_i[big_lim];
vl sieved_primes[small_lim];
vl sieved_primes_big[big_lim];
vi prime;
int N,k,d;

void sieve(){
    vi lpf(small_lim);
    ll pw;
    for(int i = 2; i < small_lim; i++){
        if(! lpf[i]){
            prime.pb(i);
            lpf[i] = i;
        }
        for(int j : prime){
            if((j > lpf[i]) || (j*i >= small_lim)) break;
            lpf[j*i] = j;
        }
    }
    for(int i = 2; i < small_lim; i++){
        primes_till_i[i] = primes_till_i[i-1] + (lpf[i] == i);
    }
}

ll count_primes(ll n, int ind){
    if(ind < 0) return n-1;
    if(1ll*prime[ind]*prime[ind] > n){
        if(n < small_lim) return primes_till_i[n];
        if(primes_till_bigger_i[N/n]) return primes_till_bigger_i[N/n];
        int l = -1, r = ind;
        while(r-l > 1){
            int mid = (l+r)>>1;
            if(1ll*prime[mid]*prime[mid] > n) r = mid;
            else l = mid;
        }
        return primes_till_bigger_i[N/n] = count_primes(n,l);
    }
    int sz;
    if(n < small_lim) sz = sieved_primes[n].size();
    else sz = sieved_primes_big[N/n].size();
    ll ans;
    if(sz <= ind){
        ans = count_primes(n,ind-1);
        ans -= count_primes(n/prime[ind],ind-1);
        ans += ind;
        if(n < small_lim) sieved_primes[n].pb(ans);
        else sieved_primes_big[N/n].pb(ans);
    }
    if(n < small_lim) return sieved_primes[n][ind];
    else return sieved_primes_big[N/n][ind];
}

ll count_primes(ll n){
    if(n < small_lim) return primes_till_i[n];
    if(primes_till_bigger_i[N/n]) return primes_till_bigger_i[N/n];
    return count_primes(n,prime.size()-1);
}

const int ncrlim = 3.5e6;
int fact[ncrlim];
int invfact[ncrlim];

void init_fact(){
    fact[0] = 1;
    for(int i = 1; i < ncrlim; i++) fact[i] = (1ll*fact[i-1]*i)%mod;
    invfact[ncrlim-1] = powmod(fact[ncrlim-1], mod-2, mod);
    for(int i = ncrlim-1; i > 0; i--) invfact[i-1] = (1ll*invfact[i]*i)%mod;
}

int ncr(int n, int r){
    if(r > n || r < 0) return 0;
    int ans = fact[n];
    ans = (1ll*ans*invfact[n-r]) % mod;
    ans = (1ll*ans*invfact[r]) % mod;
    return ans;
}

ll calculate_dp(ll n, int ind){
    if(n == 0) return 0;
    if(prime[ind] > n) return 1;
    ll ans = 1,temp;
    if(1ll*prime[ind]*prime[ind] > n){
        temp = ncr(k+d,d);
        temp *= count_primes(n)-ind;
        ans+=temp; ans %= mod;
        return ans;
    }
    ans = 0;
    ll gg = 1;
    ll mult = d;
    while(gg <= n){
        temp = calculate_dp(n/gg,ind+1);
        temp *= ncr(mult,d);
        ans += temp;
        ans %= mod;
        mult += k;
        gg *= prime[ind];
    }
    return ans;
}

int main(){
    sieve();
    init_fact();
    int t;
    sd(t);
    while(t--){
        sd(N);sd(k);sd(d);
        plldn(calculate_dp(N,0));
        for(int i = 1; i < big_lim; i++){
            primes_till_bigger_i[i] = 0;
            sieved_primes_big[i].clear();
        }
    }
    return 0;
}
```

whats the point of allowing $$$O(n \cdot 1024)$$$ solutions in E

well, we reduced the bound on A to make sure nlog^2A passes.

We didn't knew that these solutions existed.

know*

I think $$$a_i \lt 2^{14}$$$ or something would've been the optimal choice if i am not mistaken $$$O(n \log^2 A)$$$ should pass as long as there is no bad constant factor and the more naive solution wouldn't have passed but i am sure it was hard to predict that such solutions would pop up + thanks for the amazing contest.

It's not hard to expect that the dp 1024n is too easy and obvious to anyone who has solved dp+ev before

Yes it is straight forward but $$$n$$$ is still upto $$$2 \cdot 10^5$$$ so passing shouldn't seem smooth, some people even got TLE.

What is amazing about this contest?

then you just killed the problem

my O(n * 1024) solution passed (4000ms), so I optimized to O(min(n, 1024) * 1024) and it passed 2500ms.

This also didn't seem intended but yeah this solution also exists and it runs in $$$O(\text{max } a_i^2)$$$.

can you please explain your optimized solution for problem E?

Sure. Here’s how I came up with the optimization:

n > 2^10, we have duplicates.

2^10): pdp[i][0] — the probability of getting value i an even number of times. pdp[i][1] — the probability of getting it an odd number of times. Dp base for each number = {1, 0} (100% chance of getting zero times).

i (with prob pi), we have two transitions: pdp[i] = { pdp[i][0] * (1-pi) + pdp[i][1] * pi, pdp[i][0] * pi + pdp[i][1] * (1-pi) }. I hope these transitions are clear to understand.

O(n * 1024), but instead of iterating over all a[i], we will iterate only over unique a[i]. Instead of p[i], use previously calculated probability dp prob_dp[a[i]] for calculating the new dp. Two transitions: if we take an even number of a[i] or an odd number of a[i].

My solution (rust): https://mirror.codeforces.com/contest/2020/submission/283652607

In my case cnt array is pdp precalculations.

Understood, thanks!

Getting tle with the same Approach :((

Can you check it once link : https://mirror.codeforces.com/contest/2020/submission/283786350

Thanks

Doesn't that give TLE? As you mentioned 2 * 1e5 * 1024 > 1e8. I implemented it 330934118. It doesn't work.

Idea is to have a bunch of test cases, all with n < 1024, but the sum of n over all test cases becomes 2e5.

This way, even if we consider unique values only, the worst case is 2e5 * 1023. Which is giving TLE.

it is still O(n*1024) ?

I came up with that solution at my first look at E after solving C, but $$$O(n⋅1024)$$$ would definitely TLE, and I couldn't improve my approach. It kinda makes me feel sad to know that such were ACed now :)

283614074 Brute force solution runs 0.9s. Much less than Time Limit 4s, and quite close to official solution which runs 0.7s. When I come to the problem, I get the idea same as the tutorial. But later I see $$$a_i \lt 1024$$$, so I decide to submit $$$O(1024 \min{\{n, 1024\}})$$$ solution which saves lines of codes.

say n = 1 across test cases , then u can have 2e5 test cases in each loop of 1024 makes the complexity O(1024*t) ryght ?

No. The statement says $$$t \le 10^4$$$, and the worst case is $$$2 \times 10^5 \times 1024$$$, nothing to do with $$$t$$$.

crrct , my point is that O(1024min{n,1024}) solution is not the case ,

Doesn't that give TLE? As you mentioned 2 * 1e5 * 1024 > 1e8.

I implemented it 330934118. It doesn't work.

Idea is to have a bunch of test cases, all with n < 1024, but the sum of n over all test cases becomes 2e5.

This way, even if we consider unique values only, the worst case is 2e5 * 1023. Which is giving TLE.

Maybe you've encountered a compiler bug. My idea is to stick with modern compilers like GCC-14 and don't use GCC-7.

330966332 Actually I submitted your code and it passed

Bruh. Will have to check this out then. Thanks. Can I fix this by just changing my compiler type while submitting codes? I don't want to mess with my local system setup right now. I'm asking this so that I can use better compilers if I'm ever getting TLE because of something like this, while not having to change my local setup right now.

I replaced 4 modulo operation with 1 in my solution. And it got passed

yeah, my O(n*1024) passed in 765ms

Can anyone explain how did we get the direct formula in B??

Every number has an even number of factors, except square numbers.

We can prove the first fact by finding a factor a of number n. Then, if n is not a square n/a is another factor of n.

If n is a square, then we double count sqrt(n), as we count n/sqrt(n) and sqrt(n) which are the same value. This leads to an odd number of factors(every other factor + 1).

Using this, if a switch is flipped an even number of times, it returns back to 1. If it flipped an odd number of times, it returns to 0.

Check the solution discution on YT.

This does not answer the question which was about how to get the direct formula: $$$n=⌊k+\sqrt{k}+0.5⌋$$$

This was a good round, unless I FST ofc, then it was a horrible round. I think that there should be more problems like this — problems that don't require so much IQ, but $$$do$$$ require coding. These problems are what might be called chill problems.

is 123gjweq2 a social experiment?

Now after seeing the solution of B problem.... I just want to cry ):

I was able to figure out that number of ON bulbs after n operations is related to sqrt(n) but was going in opposite track. Here only perfect squares are off and other are ON.

I understood the binary search solution for problem B. But, I am not able to figure out how direct formula $$$n = \lfloor k + \sqrt{k} +0.5 \rfloor$$$ came. Can anyone please explain it?

Don't think about that formula. Take the number of perfect squares <= k, i.e. (int)sqrt(k), and add it to k. Now we can cross at most one more square in [k, k+(int)sqrt(k)], which is ((int)sqrt(k)+1)^2; check for that and add 1. The given formula checks this directly, mathematically.

I did a similar approach but got wrong answer. Mind explaining why? This

I think the problem is with the sqrt function: sometimes it gives sqrt(4) = 1.999, which becomes 1 after truncation, so it is good to check: if ((r+1)*(r+1) == k) r++;

I am trying to submit binary search solution but I am receiving WA verdict. Even though it is giving correct answer for that test case in my machine. Can you please check what is the problem? https://mirror.codeforces.com/contest/2020/submission/283696903

Thanks

I think you have the same problem: the sqrt function sometimes gives an error at integers.

Suppose $$$\lfloor \sqrt n \rfloor = m$$$, $$$m^2 \lt n \lt (m+1)^2$$$ (note that n wouldn't be a square, as the square lightbulb itself is closed)

So, $$$m^2-m \lt k \lt m^2+m+1, m-0.5 \lt \sqrt k \lt m+0.5$$$ (inequality is true as k is an integer)

$$$\lfloor \sqrt k +0.5 \rfloor = m = \lfloor \sqrt n \rfloor$$$, $$$ n = k + \lfloor \sqrt k +0.5 \rfloor$$$

In problem B, how do we derive the formula $$$( n = \left\lfloor k + \sqrt{k} + 0.5 \right\rfloor )$$$ from the equation $$$( n - \left\lfloor \sqrt{n} \right\rfloor = k )$$$?

Derivation:

Suppose $$$\lfloor \sqrt n \rfloor = m$$$, $$$m^2 \lt n \lt (m+1)^2$$$ (note that n wouldn't be a square, as the square lightbulb itself is closed)

So, $$$m^2-m \lt k \lt m^2+m+1, m-0.5 \lt \sqrt k \lt m+0.5$$$ (inequality is true as k is an integer)

$$$\lfloor \sqrt k +0.5 \rfloor = m = \lfloor \sqrt n \rfloor$$$, $$$ n = k + \lfloor \sqrt k +0.5 \rfloor$$$

Intuition:

Suppose $$$l^2 \leq k \lt (l+1)^2$$$, we have $$$n = k+l$$$, if $$$n \geq (l+1)^2$$$, we have to add 1 to compensate for that new square. So $$$n=k+l$$$ or $$$n=k+l+1$$$

$$$n \geq (l+1)^2 , k \geq l^2+l+1 \gt l^2+l+0.25, \sqrt k \gt l+0.5$$$

The formula follows.

I understand the derivation, but I am unsure how $$$m - 0.5 \lt \sqrt{k} \lt m + 0.5$$$ follows from the condition $$$m^2 - m \lt k \lt m^2 + m + 1$$$.

$$$m^2-m \lt (m^2-m+1/4) = (m-0.5)^2 \lt k \lt (m+0.5)^2 = m^2+m+1/4 \lt m^2+m+1$$$

As you can see, 1/4 is added for lower bound, 3/4 is removed for upper bound, so the inequality only works because k is a positive integer.

If maxa is sth like 2^20, E will be a good problem. What a pity.

An O(1) solution for C exists: if some solution exists, both b ^ d and c ^ d will be solutions. This comes from simplifying the truth table.

O(1) solution for B exists too

cout << k + (int)sqrtl(k + (int)sqrtl(k)) << endl;

I don't think this is O(1) since sqrt is log(n) time complexity

It's considered to be constant in bit complexity, just as how we consider other arithmetic operations to have constant complexity.

Amazing

In problem C simply set a to b xor d and check if it works

I think it should be c xor d.

My submission uses b xor d, not c xor d. I believe both work.
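The suggestion from this thread can be sketched as follows, assuming non-negative inputs as in the problem. Here `solve_by_xor` and `exists_solution` are hypothetical names; the second function is only a brute-force reference for small values. Returning -1 when the candidate fails relies on the per-bit table from the editorial: at every feasible bit position the bit of $$$b \oplus d$$$ is a valid choice.

```cpp
#include <cassert>

// Candidate from the thread: try a = b xor d and verify it directly.
// Returns a valid a, or -1 when the candidate does not satisfy the equation.
long long solve_by_xor(long long b, long long c, long long d) {
    assert(b >= 0 && c >= 0 && d >= 0);
    long long a = b ^ d;
    return ((a | b) - (a & c) == d) ? a : -1;
}

// Brute-force reference: is there any a in [0, limit) that works?
bool exists_solution(long long b, long long c, long long d, long long limit) {
    for (long long a = 0; a < limit; a++)
        if ((a | b) - (a & c) == d) return true;
    return false;
}
```

For $$$b = 10$$$, $$$c = 2$$$, $$$d = 14$$$ this returns $$$a = 4$$$, since $$$(4 \mid 10) - (4 \,\&\, 2) = 14 - 0 = 14$$$.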

You put image of editorial C to editorial D. By accident I suppose

I got the idea of problem B fast because of 1909E - Multiple Lamps.

Doing what problem B does solves this when $$$n \ge 20$$$.

its an extremely well known riddle, here is a youtube link for n = 100

https://www.youtube.com/watch?v=xwWdBj5WfCo

How was I supposed to solve that B problem, I literally had 0 idea that I will have to build a formulae for that, plus the observation required for the problem isn't normal at all.

Here is how I approached it. First I assumed it to be a dumb problem. And from my past experience and noticing that n and k are not far apart in the samples, and by thinking about prime factorization and number of factors (however I didn't dive into that because I was lazy) I suspect maybe only the first few bulbs are not on. And I printed a table of the first 100 n and k, and quickly realized that k=n-(int)sqrtl(n). Obviously then you may construct some weird formula to do it backwards, but I suddenly came up with binary search and solved it.

I didn't think of establishing a formula when solving this problem, but I found the following pattern by observing the case of n=24: 0110111101111110111111110 Using this rule, the problem is transformed into how many zeros need to be inserted into k ones

contest is too math

In B no need for a formula, you can just binary search for the answer, it only needs that observation

That observation is too much for me, tell me how much more I need to practice in order to get that B always right.

I saw that observation before in blogs but usually you just need practice to get observations fast, if you aren't sure how to practice correctly I think there's lots of cf blogs on it

Can someone help me with my code for E. I am finding probability for each bit to be active or not then for all number adding it's contribution to expectation.

z[i][0][j] represent prob in first i element our subset's xor will have j'th bit set

z[i][1][j] represent prob in first i element our subset's xor will not have j'th bit set

then for each number(0-1023) i add contribution number 5's contribution would be simply (z[n-1][0][0]*z[n-1][1][1]*z[n-1][2][0])*5*5

Squaring is not linear so you can't handle bit by bit without doing the editorial's trick, what works is z[i][j] represents probability in first i element that the xor is equal to j

i do understand editorial's way but can't find mistake in my own approch. can you may be give me little example

Oh I didn't understand your solution, it looks like it should work, there might be an implementation mistake somewhere

At first I thought of something similar for this problem. The issue is that the probability of getting a 1 on the ith bit is not independent to the probability of getting a 1 on the jth bit. An example of this may be that given the case:

1

3

5000

I can either take the 2^0 bit and the 2^1 bit or I can take neither, yet your implementation may give some probability to 2's contribution.

Makes sense I think that's the problem with the solution

Ahhh!! you are absolutely right i see problem now.

I had the same idea when implementing at first, but the bit-by-bit approach doesn't work because you're trying to use linearity of expectation on a non-linear operation (squaring). If you're given $$$A=[101_2]$$$ and $$$P=[0.5]$$$, then you should have a 50% chance of obtaining $$$0^2=0$$$ and a 50% chance of obtaining $$$(101_2)^2 = 25$$$. However, if you solve bit-by-bit, your solution will conclude that you have a 25% chance of obtaining $$$(000_2)^2=0$$$, a 25% for $$$(001_2)^2=1$$$, 25% for $$$(100_2)^2=16$$$, and 25% for $$$(101_2)^2=25$$$, which should be impossible since you can only xor by full numbers, not just their individual bits.

Let's say you only have one element in the array, 3. I believe your solution produces a non-zero expected value for the XOR to be 1, because the 0th bit is set in 3. But a XOR value of 1 cannot be achieved.

The editorial solution for E is too complicated. There is a much easier O(1024 * n) dynamic programming solution which comfortably fits within time limit.

Probably it was not intended. But I don't know why they even allowed O(1024n) to pass. Could've had a[i]<10^9 and time limit 4 seconds or something like that

They said they didn't know such solution exists: https://mirror.codeforces.com/blog/entry/134516?#comment-1203470

Why is Carrot not working !?

E can (accidentally) be solved with FWT: https://mirror.codeforces.com/contest/2020/submission/283601247

What is fwt?

fast wavelet transform

https://mirror.codeforces.com/blog/entry/71899 fast walsh hadamard transform

This works, but you can do it more efficiently in $$$O(N + max(A) \cdot log^2(max(A)))$$$ with a divide & conquer approach. Unfortunately it doesn't pass TLs because of high number of test cases: 283681726

Seems like you didnt consider two much easier solutions in D and E

I have solved D using different approach.I won't say it is easy but if someone wants to see here it it 283680740

I solved it with approach that if we fix d and a_i % d, the request is just to merge some vertices inside segment, so its just +1 on segment offline

Problem D has a much easier $$$O(nd^2 + m)$$$ solution.

I solved Problem d using 10 dsu (one for each d).283663343

Can anyone tell me what I am doing wrong looking at my profile, I am unable to reach pupil

solve more problems and implement fast!

$$$O(nd+m)$$$ solution to D: 283633809 We can brute force all edges and run dfs for connected components, since there are at most $$$2*d$$$ edges per node.

Can anyone help me debug my code, It failed test case 8. I tried so hard today but not enough :(

Submission:283668245

use sqrtl, because sqrt looses precision with big numbers (i didn't solve B because of that)

I struggled with this as well, until I finally decided to google for "c++ square root of int64" and was able to solve the problem just in time. Then I looked at the tutorial and discovered sqrtl.

For C, can someone explain why it is bit independent? I am unable to wrap my head around it

Assume it is not bit independent. Then there must be some i, for which (a|b)'s i-th bit is not set and (a&c)'s i-th bit is set. If (a&c)'s i-th bit is set, then a's i-th bit must also be set. But if a's i-th bit is set, then (a|b)'s i-th set must also be set, which is a contradiction. Therefore it is bit independent.

Subtraction in kth bit place in p - q = r affect other places only if pk is 0 and qk is 1.

0 - 0 = 0

1 - 0 = 1

1 - 1 = 0

For this case to happen, (ak | bk) = 0 which means ak = 0

but (ak & ck) = 1 which means ak = 1 which is a contradiction

Hope the explanation is clear!

An alternative solution to the problem D in $$$O(max_d * n + m)$$$

Consider the point $$$x$$$, note that it can only be connected to the $$$max_d$$$ points that come before it. We will maintain $$$dp[x][d] = k$$$, where $$$x$$$ is the coordinate of a point on the number line, $$$d$$$ is the step length, and $$$k$$$ is the maximum number of steps that can still be taken from point $$$x$$$ with step $$$d$$$. Then note that we can move from point $$$x - d$$$ to point $$$x$$$ only if $$$dp[x - d][d] \gt 0$$$. In this case, we draw an undirected edge between the points $$$x - d$$$ and $$$x$$$, and recalculate the dp for point $$$x$$$ as follows: $$$dp[x][d] = max(dp[x][d], dp[x - d][d] - 1)$$$

Base of the dp: initially $$$dp[x][d] = 0$$$ for all $$$x$$$ and $$$d$$$. Then for all $$$m$$$ triples $$$a_i, d_i, k_i$$$ we set $$$dp[a_i][d_i] = max(dp[a_i][d_i], k_i)$$$

At the end, using dfs, we will find the number of connectivity components

Code of this solution: 283668708
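A minimal sketch of this alternative, under a few assumptions: the `Op` struct and `count_components` are hypothetical names, points are 1-indexed, $$$d \le 10$$$, and components are counted with a small union-find instead of the dfs mentioned above (a swapped-in technique chosen to keep the example short).

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <numeric>
#include <vector>

struct Op { int a, d, k; }; // connects a, a+d, ..., a+k*d

int count_components(int n, const std::vector<Op>& ops) {
    const int MAXD = 10;
    // steps[x][d] = how many more jumps of length d are still allowed from x.
    std::vector<std::array<int, MAXD + 1>> steps(n + 1);
    for (auto& row : steps) row.fill(0);
    for (const Op& op : ops) {
        assert(op.a >= 1 && op.d >= 1 && op.d <= MAXD && op.k >= 0);
        assert(op.a + (long long)op.k * op.d <= n);
        steps[op.a][op.d] = std::max(steps[op.a][op.d], op.k);
    }
    std::vector<int> parent(n + 1);
    std::iota(parent.begin(), parent.end(), 0);
    auto find = [&](int v) {
        while (parent[v] != v) {
            parent[v] = parent[parent[v]]; // path halving
            v = parent[v];
        }
        return v;
    };
    int components = n;
    for (int x = 1; x <= n; x++)
        for (int d = 1; d <= MAXD && d < x; d++)
            if (steps[x - d][d] > 0) {
                // A jump from x-d reaches x: propagate the remaining budget
                // and join the two points.
                steps[x][d] = std::max(steps[x][d], steps[x - d][d] - 1);
                int u = find(x - d), v = find(x);
                if (u != v) { parent[u] = v; components--; }
            }
    return components;
}
```

For $$$n = 10$$$ and the operations $$$(1, 2, 4)$$$ and $$$(2, 2, 4)$$$, the odd and the even points form one component each, so the function returns 2.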

In the editorial of F, I think it should be $$$f(p^{ik},d)$$$ instead of $$$f(p^{i}k,d)$$$

https://mirror.codeforces.com/contest/2020/submission/283572788

can anyone help me why above is wrong

might be a rounding issue. generally a good idea to avoid non-integer types unless absolutely necessary

Where's the originality?

Problem Codeforces 976 Div2 $$$F$$$ be directly copied from AtCoder Beginner Contest 370 G link: https://atcoder.jp/contests/abc370/editorial/10906

The core idea of both problems is absolutely identical, including the approach of solving them with a convolution technique. The only noticeable difference between the two problems lies in how the function $$$f(prime)$$$ and $$$f(prime^{ki}, b)$$$ is computed. Other than that, the rest of the structure, including the logic and solution techniques, are the same. This raises concerns about originality and fair practice in problem setting across competitive programming platforms.

Problem $$$E$$$ of Codeforces 976 Div2 has a solution using the most basic DP idea in $$$O(n \cdot 1024)$$$.

As someone who officially placed 12th in Div 2, I’m absolutely disappointed with how Codeforces 976 Div2 F turned out.

An alternate solution to D which doesn't use DP:

Similar to the solution in the editorial I used a DSU to keep track of components, but to check whether elements $$$i$$$ and $$$i+d_i$$$ are connected, I simply need to check among operations $$$j$$$ where $$$d_j = d_i$$$, and $$$a_j \% d_j = i\%d_i$$$. I used a map to store sets of operations together based on $$$d_j$$$ and $$$a_j\%d_j$$$, and I stored each operation as a pair $$$[ a_j, a_j + k_j \cdot d_j ]$$$.

Each set of operations can now simply be represented as a set of intervals. I used a data structure which I called an IntervalSet, which internally uses an std::set to insert intervals in amortized $$$O(\text{log n})$$$, store them efficiently by combining overlapping intervals, and query whether an interval is completely included in the set in $$$O(\text{log n})$$$, where $$$n$$$ is the number of intervals in the set. This allows me to simply query whether $$$[i,i+d_i]$$$ is included among the operations with $$$d_j = d_i$$$ and $$$a_j \% d_j = i\%d_i$$$, which makes the code very simple.

My submission: 283669482
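For readers curious what such a structure could look like, here is a minimal sketch. It is not the code from the submission above: it swaps in a `std::map` from interval start to interval end instead of an `std::set`, and the member names `insert`, `contains` and `size` are assumptions chosen for the example. Intervals are closed and are merged only when they share at least one point.

```cpp
#include <algorithm>
#include <cstddef>
#include <iterator>
#include <map>

// Sketch of an interval set holding disjoint closed intervals [l, r].
class IntervalSet {
    std::map<long long, long long> iv_;  // start -> end

public:
    // Insert [l, r], merging it with every stored interval it overlaps.
    void insert(long long l, long long r) {
        auto it = iv_.upper_bound(l);  // first interval starting after l
        if (it != iv_.begin()) {
            auto prev = std::prev(it);
            if (prev->second >= l) {  // overlaps on the left side
                l = prev->first;
                r = std::max(r, prev->second);
                it = iv_.erase(prev);
            }
        }
        while (it != iv_.end() && it->first <= r) {  // swallow overlaps on the right
            r = std::max(r, it->second);
            it = iv_.erase(it);
        }
        iv_[l] = r;
    }

    // True if [l, r] lies completely inside one stored interval.
    bool contains(long long l, long long r) const {
        auto it = iv_.upper_bound(l);
        if (it == iv_.begin()) return false;
        --it;
        return it->second >= r;
    }

    std::size_t size() const { return iv_.size(); }
};
```

Every stored interval is erased at most once after being inserted, which is where the amortized logarithmic insert cost comes from.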

PS: I used ChatGPT to help in implementing the IntervalSet class so its not in a great state rn;) and I haven't seen any implementations of such an IntervalSet class, so I would love to learn about any other implementations you guys know about.

is that a percy jackson profile picture?

Yes it is!!

problem B solution without Binary search : solution

Can problem A be solved by taking logs?

I also have the same question!

Yep it can be.

I tried your solution but it got TLE on test case 3.

hmm really?

check out this submission, my submission didn't get tle:

283695273

I found out my code got TLE because I missed the "#define int long long" line. Thank you for the solution! It's very helpful!

Although I know $$$O(1024\cdot n)$$$ is not the standard solution to E, I have encountered a strange TLE in this solution just by moving $$$M = 10^9 + 7$$$ inside $$$solve()$$$. 283686661 283686119 Could anyone tell me what's going on?

if you put it outside pypy compiles it as constant, which is way faster.

For E I used the dumb O(1024*n) solution but idk why I'm TLEing. This submission 283708821 gets TLE on test 12 since I did dp%MOD. But the previous submission 283708742 passes, and all I did was change the MOD so that it MODs the whole thing instead of the dp itself. How in the world is this not the same thing???

Pls help if anyone know the issue thx <3

change to C++23, there will be probably some magic coming out...

damn do you know why this is?

F can also be solved using standard min_25 sieve trick: https://mirror.codeforces.com/contest/2020/submission/283749226

My O(N * D) solution for D

For problem C why is it giving WA on test-11?

283777193

Hello, did you find what the error is? I have a similar problem in WA 11.

j * (1 << i) is computed as an int, changing it to j * (1LL << i) should do!

Thanks a lot, I had the same stupid mistake.
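A tiny illustration of that fix, assuming `j` is an `int` and `i` can reach values around 60 as in problem C (the function name `scale_by_power_of_two` is made up for the example): with the literal `1` the shift is done in 32-bit `int`, while `1LL` promotes the whole product to 64 bits.

```cpp
// Hypothetical helper showing the 64-bit version of the expression.
long long scale_by_power_of_two(int j, int i) {
    // 1LL forces the shift, and then the multiplication, into long long.
    return j * (1LL << i);
}
```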

In problem D, can't we just build a graph with a DSU from the nodes we receive in the input and then count the number of connected components?

My solution: https://mirror.codeforces.com/contest/2020/submission/283746432

I did the same. I expected TLE but got WA, can somebody explain?

Looking at the tutorial of problem E, it isn't clear why the contribution of pairs of bits from both operands can be added together. When we think about the multiplication, we observe that multiple pairs $$$(i,j)$$$ can affect the same bit of the result and yield a carry over. Thus, we don't know a priori if that bit will be set (with some probability) or not, but the contribution should only count when the bit is set.

However, after some consideration, I've concluded that it doesn't matter whether the bit will be set. What matters is that the contribution of all pairs will add up (in terms of expected value), just as it would in the multiplication. Please correct me if I'm wrong in this reasoning.

$$$f(S)^2 = \left(\sum_{i=0}^{B}{b_i \cdot 2^i}\right) \cdot \left(\sum_{j=0}^{B}{b_j \cdot 2^j}\right)$$$

$$$f(S)^2 = \sum_{k=0}^{2B}{\left(2^k \cdot \sum_{i+j=k}{b_ib_j}\right)}$$$

Yes, it doesn't really matter whether there is carry over. It's just convenient to look at the number in base 2, that's all.
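The identity discussed above can be checked numerically with a small sketch (the name `square_via_bit_pairs` is hypothetical): summing $$$2^{i+j}$$$ over all pairs of set bits gives the square exactly, carries included, which is why the per-pair contributions can simply be added in expectation.

```cpp
#include <cstdint>

// Sum of 2^(i+j) over all ordered pairs (i, j) of set bits of x.
std::uint64_t square_via_bit_pairs(std::uint32_t x) {
    std::uint64_t total = 0;
    for (int i = 0; i < 32; ++i) {
        for (int j = 0; j < 32; ++j) {
            bool bi = (x >> i) & 1u;
            bool bj = (x >> j) & 1u;
            if (bi && bj) {
                total += std::uint64_t{1} << (i + j);  // i + j <= 62, no overflow
            }
        }
    }
    return total;
}
```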

bro $$$E$$$ is so easy and yet it has so few accepted submissions. I missed out too. It's the easiest problem in the contest if you know how to do memory optimisation in DP.

The same solution to problem B does not work in Java.

In problem E, I tried O(n * 1024) and got TLE, so I spent a lot of time optimizing it and did not have enough time to solve D.

After the contest finished, I learned that I just needed to add ios::sync_with_stdio(false) to get AC.

Can someone tell me how I can solve B in java? As far as I know there's no equivalent of sqrtl() in java and Math.sqrt() introduces precision errors for larger inputs.

Thanks for the editorial

The binary search in problem B is still a bit difficult to understand. For example, when k=6, both n=8 and n=9 give diff = n - sqrt(n) = 6 = k when computed with the square root rounded down. So how can this issue be avoided?

You have to find the smallest n satisfying n - sqrt(n) = k. So in the code, when mid - sqrt(mid) >= k we set r = mid, and otherwise l = mid. This ensures that l ends up as the largest number such that l - sqrt(l) < k and r as the smallest such that r - sqrt(r) >= k.
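Here is a rough sketch of that binary search, with the square root corrected by integer comparisons so that floating-point rounding cannot change the result. The names `isqrt_floor` and `kth_non_square` and the upper bound `2 * k + 2` are assumptions for the example, and it expects $$$1 \le k \le 10^{18}$$$. The same correction loop should carry over to languages without `sqrtl`.

```cpp
#include <cmath>
#include <cstdint>

// floor(sqrt(n)): floating-point guess, then fixed up with exact integer checks.
std::uint64_t isqrt_floor(std::uint64_t n) {
    std::uint64_t r = static_cast<std::uint64_t>(std::sqrt(static_cast<long double>(n)));
    while (r * r > n) --r;
    while ((r + 1) * (r + 1) <= n) ++r;
    return r;
}

// Smallest n with n - floor(sqrt(n)) >= k, i.e. the k-th non-square.
std::uint64_t kth_non_square(std::uint64_t k) {
    std::uint64_t l = 0, r = 2 * k + 2;  // invariant: f(l) < k <= f(r)
    while (r - l > 1) {
        std::uint64_t mid = l + (r - l) / 2;
        if (mid - isqrt_floor(mid) >= k) {
            r = mid;
        } else {
            l = mid;
        }
    }
    return r;
}
```

For k=6 this returns 8 rather than 9, because r always stops at the first n where the difference reaches k.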

can someone explain why for A the following logic is giving WA

Min_25 is too hard!!!!!!!!!!!!!!!!!!!!!!!!!

thanks, now i know sqrtl

Problem D has a much simpler solution in $$$O(m+n \cdot d)$$$ time and $$$O(n \cdot d)$$$ space.

The idea is we can simply keep a map which maps $$$d$$$ to a map having $$$a_i$$$ mapped to max possible $$$k$$$.

So we just have to iterate over the last 10 vertices at max, if for that $$$d$$$ we have $$$i-d$$$ stored in the map, we try connecting them (using DSU), and store $$$mp[d][i] = max(mp[d][i],mp[d][i-d]-1)$$$.

This passes easily in the given time constraints!

My submission: 336833210 for reference.
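A minimal sketch of the map-plus-DSU idea described in this comment, written independently of the linked submission; the `Dsu` struct, the function name `count_components_dsu` and the `{a, d, k}` layout are assumptions for the example.

```cpp
#include <algorithm>
#include <array>
#include <map>
#include <numeric>
#include <vector>

// Plain disjoint set union with path halving.
struct Dsu {
    std::vector<int> parent;
    explicit Dsu(int n) : parent(n) { std::iota(parent.begin(), parent.end(), 0); }
    int find(int v) {
        while (parent[v] != v) v = parent[v] = parent[parent[v]];
        return v;
    }
    bool unite(int a, int b) {
        a = find(a);
        b = find(b);
        if (a == b) return false;
        parent[a] = b;
        return true;
    }
};

// Points are 1..n; each op is {a, d, k} joining a, a+d, ..., a+k*d (a + k*d <= n).
int count_components_dsu(int n, const std::vector<std::array<int, 3>>& ops) {
    std::map<int, std::map<int, int>> mp;  // mp[d][x] = steps of length d left from x
    for (const auto& op : ops) {
        int& slot = mp[op[1]][op[0]];
        slot = std::max(slot, op[2]);
    }
    Dsu dsu(n + 1);
    int components = n;
    for (int i = 1; i <= n; ++i) {
        for (auto& entry : mp) {
            int d = entry.first;
            auto& steps = entry.second;
            auto it = steps.find(i - d);
            if (it == steps.end() || it->second <= 0) continue;
            if (dsu.unite(i - d, i)) --components;
            int& slot = steps[i];  // std::map insertion keeps `it` valid
            slot = std::max(slot, it->second - 1);
        }
    }
    return components;
}
```

Only step lengths that actually occur in the input appear as keys of `mp`, so the inner loop runs at most 10 times per point under the problem's limit on $$$d$$$.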

What was that D problem's editorial! naahh hell naahh! lord save me

Does anyone have the book linked in B's tutorial? The link didn't work.

We can also look at the proof of problem B in this way. Number of factors of a number is

d(n) = (a1+1) * (a2+1) * (a3+1) ...

where n = (p1^a1) * (p2^a2) * (p3^a3) ...

So, when n is a perfect square, every exponent is even, so every factor (ai+1) is odd and d(n) is always odd (odd*odd*odd). When n is not a perfect square, at least one exponent is odd, so at least one factor (ai+1) is even, for example odd * odd * even. This makes d(n) always even.
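The parity claim is easy to confirm by brute force on small values. In the sketch below the helper names are made up for the example, and the divisor count deliberately uses the naive definition so that the check does not depend on the formula being verified.

```cpp
// Count divisors of n straight from the definition.
int count_divisors(int n) {
    int count = 0;
    for (int i = 1; i <= n; ++i) {
        if (n % i == 0) ++count;
    }
    return count;
}

// Exact perfect-square test using integers only.
bool is_perfect_square(int n) {
    int r = 0;
    while ((r + 1) * (r + 1) <= n) ++r;
    return r * r == n;
}

// True if "d(n) is odd exactly when n is a perfect square" holds for all 1 <= n <= limit.
bool parity_rule_holds_up_to(int limit) {
    for (int n = 1; n <= limit; ++n) {
        bool odd_divisor_count = count_divisors(n) % 2 == 1;
        if (odd_divisor_count != is_perfect_square(n)) return false;
    }
    return true;
}
```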

Problem B can be solved far more efficiently following the solution: 371910904

It has a time complexity of log2(log10(n)).

Taking the square root gives us the number of bulbs that are turned off, so we need that many additional bulbs. But these additional bulbs will also include some turned-off bulbs, since some of them are perfect squares. We keep taking square roots until the offset comes down to zero.
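One possible reading of this idea, which is not necessarily what the linked submission does, is a fixed-point iteration: start from n = k and keep replacing n with k + floor(sqrt(n)) until it stops changing. The names `isqrt_floor` and `kth_on_bulb_fixed_point` are assumptions for this sketch, and it expects $$$1 \le k \le 10^{18}$$$.

```cpp
#include <cmath>
#include <cstdint>

// floor(sqrt(n)): floating-point guess, then fixed up with exact integer checks.
std::uint64_t isqrt_floor(std::uint64_t n) {
    std::uint64_t r = static_cast<std::uint64_t>(std::sqrt(static_cast<long double>(n)));
    while (r * r > n) --r;
    while ((r + 1) * (r + 1) <= n) ++r;
    return r;
}

// Repeat n = k + floor(sqrt(n)) until n is stable; then n - floor(sqrt(n)) == k.
std::uint64_t kth_on_bulb_fixed_point(std::uint64_t k) {
    std::uint64_t n = k;
    while (true) {
        std::uint64_t next = k + isqrt_floor(n);
        if (next == n) return n;
        n = next;
    }
}
```

The sequence never decreases and never passes the smallest n with n - floor(sqrt(n)) = k, so it stops exactly there; in practice it settles after a handful of iterations.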