This weekend concluded the first season of the Midnight Code Cup with a long-awaited 24-hour contest. 29 teams – the best from the qualification round, including the qualification winners Omogen Heap – competed on-site in Belgrade, Serbia, on June 7-8.

Team Omogen Heap jumped into first place around the midpoint of the contest and held the title until the very end. Congratulations to our first Midnight Code Cup champions on their dominant performance! Second place went to team GoodBye2023, and Jinotega finished in third.

Final results: https://2025.midnightcodecup.org/standings/

Problems: https://2025.midnightcodecup.org/problems/

Photos: https://drive.google.com/drive/folders/1i6Ap3OEz7hLNv2r5PAIQSwsINGpv9UxH?usp=drive_link

Instagram: https://www.instagram.com/midnightcodecup

Huge thanks to our jury: chief judge Aksenov239 and the team cdkrot, Gassa, isaf27, MaxBuzz, naagi, NALP, nsychev, PavelKunyavskiy, pvs, qwerty787788, Solenoid555, tourist, winger, George Marcus, Timofei Vasilevskii, Vadim Kharitonov. And our clutch testers: antonkov, Belonogov, enot110, izban, Psyho, subscriber, VArtem. Our generous sponsors and partners: Recraft, JetBrains, Jane Street, Neon, Pinely, Amazon and Nebius. And, of course, Codeforces, for hosting the qualification round.

Congratulations to the finalists! Please share your thoughts about the problems and the contest in the comments – and don’t forget to post the cutest solutions to problem I! Below is a write-up of the contest from the organizing team and judges.

How It Went

The contest took place at the Mona Plaza Hotel (teams also had two nights reserved here), so the transfer between rooms and the contest floor was just a short elevator ride. Highly recommended for the 24-hour contest format!

Team: Hi! Where will MCC take place? Will it be easy to get from the contest venue to the hotel at night to sleep, or will it be too far?

Orgs: Same hotel! Just an elevator ride away from your room.

Team: Is it safe to use the elevator at night? How is the neighborhood around the elevators?

After a brief opening ceremony and an introduction of the contest sponsors (JetBrains provided finalists with Junie AI coding agent licenses; Amazon – with 64-core compute for the duration of the contest), the contest began at 11:00. By the way, what do you think is the best time to start a 24-hour contest?

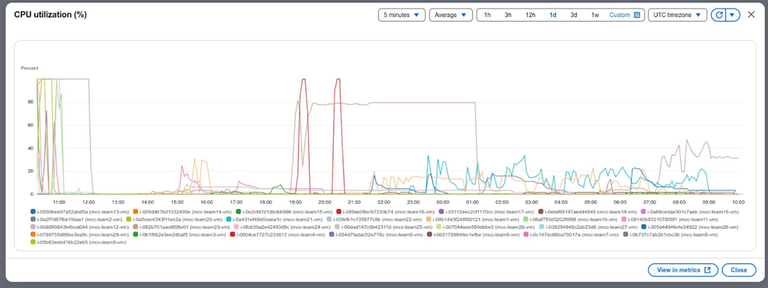

CPU utilization of AWS compute by teams

Teams looked… surprised when they saw 9 problems in the set. Even though problem F was just an easy scavenger hunt through all sponsor booths with a blind coding session at the end, and problem G gave 200 points to only one team, the contest clearly offered more than one problem per teammate. Many problems featured creative scoring systems – problem B, notably, included games that started every few minutes, so submitting early was key.

A: Answer Ask Answer the Questions! At first, the problem had five straightforward timed questions. Three hours in, each team could submit their own question. Moderated team-generated content was then shared as new questions with other teams. Correct answers earned points, and some hidden points were awarded for likes from competing teams. These were revealed only at the closing ceremony, shuffling places 4-11 but leaving the top three unchanged.

B: Boomerman. A battle-royale multiplayer Bomberman-like game. Matches ran every few minutes for all 24 hours. Submitting early was crucial because scores were scaled to give 5000 points to the best team, with everyone else scored proportionally. Team Probably worse than LLMs led by a large margin early on, until a scoring multiplier (a comeback mechanic for smarter late entries) kicked in. A match visualizer ran on the main screen for most of the event, and special maps were added for fun.

C: Competitive Skating. An optimization problem with open tests and brutal 2D geometry.

D: DSL Strikes Back. A reprise of the qualification problem, where contestants were asked to write optimized solutions to simple programming problems in a custom language representing interaction nets.

E: E Stands for Efficiency. Another optimization problem with open tests, this time in graph theory – inspired by Jane Street and the concept of decentralized crypto storages.

F: Follow the White Rabbit... A short scavenger hunt around the venue (600 points), designed to get participants to meet sponsors and stretch their legs – plus two tries at a 25-minute blind coding challenge for an additional 600 points.

G: Get Ready. A pop quiz that started at midnight just for fun and variety! In the final face-off, Yolki-palki’s midnight-themed art piece won over pengzoo’s, earning Yolki-palki 200 points (which didn’t ultimately affect the scoreboard).

H: Hack the Database. A forensics challenge using the Neon database – MCC ventures into CTF territory!

I: Image Archiver. A real-Recraft-inspired problem: split each image into a small number of single-colored connected components to best approximate the original. Among the 20 open tests, one featured a photo of our teams at the opening ceremony. Say “hi,” midnight coders!

To evaluate all this goodness, nsychev developed a custom testing system, which worked really smoothly!

Plenty of teams stayed up all night, which was easy with the help of late-night meals from Recraft, a variety of coffee bags from JetBrains, and a selection of green candies from Pinely available throughout. The contest ended at 11:00, hidden points for problem A were added to the scores, and in a few short minutes our champions were holding the trophy!

See you at Midnight (next year)!

Midnight Code Cup organizers:

sofia.tex, Karina Tinicheva and lperovskaya.

Ćao!

why is this on main

Nice contest! I focused on problems C, E, I.

I used ChatGPT a lot. Here is my entire conversation with ChatGPT.

In problem E we got third place. I implemented several ideas, here are some examples:

In problem I, I did the following:

Problem C: ChatGPT misunderstood the statement, and I only realized it after several hours. I spent a lot of time getting a correct solution, and didn't have time to optimize it well.

Can anyone with better scores explain what they did?

We were trolled by problem F (Follow the White Rabbit...). We found some weird comments in the source code, and we thought they represented something in an esoteric programming language. It turned out that the comments were completely useless, and we had to follow an organizer with a white rabbit costume instead.

I was solving problems C, E, I for the whole contest, and very much enjoyed them, nice problems!

I started by doing a simple greedy — let's assume every pixel is its own component, and then while we have too many components, unite two neighbouring ones which affect our score the least. Since you only need $$$O(1)$$$ info for every component, it just works fast enough. Though one trick I had is to only recalculate penalty for two components when I extract a pair from my priority queue and see that it's outdated, instead of updating everything after each merge.
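A minimal sketch of the greedy merge with lazy priority-queue updates described above. It is not the commenter's code: it assumes grayscale pixels, squared error as the penalty, and 4-connectivity, and the names `greedy_merge`, `penalty`, and `ver` are made up for illustration. Each region keeps only a pixel count and a colour sum, and a heap entry is recomputed only when it is popped and turns out to be stale.

```python
import heapq

def greedy_merge(img, k):
    """Merge 4-connected regions of a grayscale image until k remain.

    img is a 2D list of numbers; returns a 2D list of region labels.
    """
    h, w = len(img), len(img[0])
    n = h * w
    parent = list(range(n))                       # union-find over pixels
    cnt = [1] * n                                 # O(1) info per region: size
    tot = [float(img[i // w][i % w]) for i in range(n)]  # ... and colour sum
    ver = [0] * n                                 # bumped whenever a root absorbs a region

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def penalty(a, b):
        # Increase in squared error if regions a and b share one mean colour.
        d = tot[a] / cnt[a] - tot[b] / cnt[b]
        return cnt[a] * cnt[b] / (cnt[a] + cnt[b]) * d * d

    heap = []
    for y in range(h):
        for x in range(w):
            i = y * w + x
            if x + 1 < w:
                heap.append((penalty(i, i + 1), i, i + 1, 0, 0))
            if y + 1 < h:
                heap.append((penalty(i, i + w), i, i + w, 0, 0))
    heapq.heapify(heap)

    regions = n
    while regions > k and heap:
        _, a, b, va, vb = heapq.heappop(heap)
        ra, rb = find(a), find(b)
        if ra == rb:
            continue                              # already in the same region
        if ra != a or rb != b or ver[ra] != va or ver[rb] != vb:
            # Stale entry: recompute for the current roots and push it back.
            heapq.heappush(heap, (penalty(ra, rb), ra, rb, ver[ra], ver[rb]))
            continue
        parent[rb] = ra                           # merge rb into ra
        cnt[ra] += cnt[rb]
        tot[ra] += tot[rb]
        ver[ra] += 1
        regions -= 1
    return [[find(y * w + x) for x in range(w)] for y in range(h)]
```

Because stale entries keep their old priority until they are popped, the merge order is only approximately the true greedy order, which is the trade-off the lazy update makes.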

Then I added annealing — pick a pixel, check that it's not an articulation point, and assign it to the component of one of its neighbours. A few notes about that:

Actually checking whether the pixel is an articulation point is too slow, so instead I checked that the pixels of the same component among its 8 neighbours form a single connected group, and if so, then it's safe to delete the pixel. Generally it worked great, but sometimes it left long loops which it couldn't cut anywhere. After the contest I tried allowing it to actually run dfs once in a while to check for articulation points, and it did cut the loops, though it didn't improve the score that much. But I'm wondering if there is a better solution for that.

A lot of pixels will have all the neighbours from the same component, so maintain the list of all pixels which are on the border of their component, it can be done in $$$O(1)$$$

And again, maintain all component scores in $$$O(1)$$$
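The local connectivity test from the first note can be sketched as follows. This is an illustration under assumptions, not the commenter's implementation: components are taken to be 4-connected, `lab` is a hypothetical 2D grid of component labels, and a pixel that is its whole component is simply refused. The test is conservative: it never approves an articulation point, but it can refuse a pixel on a long loop that would actually be safe to move.

```python
# The 8 neighbours in circular order; even indices are the 4-neighbours.
RING = [(-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1), (-1, -1)]

def safe_to_recolor(lab, y, x):
    """Return True if taking pixel (y, x) out of its component cannot
    disconnect that component, judged only from the 3x3 neighbourhood."""
    h, w = len(lab), len(lab[0])
    me = lab[y][x]
    same = []
    for dy, dx in RING:
        ny, nx = y + dy, x + dx
        same.append(0 <= ny < h and 0 <= nx < w and lab[ny][nx] == me)

    # Same-component 4-neighbours: these must stay connected to each other.
    edges = [i for i in (0, 2, 4, 6) if same[i]]
    if not edges:
        return False  # the pixel is its whole component; this sketch refuses

    # Walk around the ring in both directions from the first such neighbour,
    # moving only through same-component cells.
    reached = {edges[0]}
    for step in (1, -1):
        i = (edges[0] + step) % 8
        while same[i] and i not in reached:
            reached.add(i)
            i = (i + step) % 8
    return all(i in reached for i in edges)
```

Consecutive cells of the ring are 4-adjacent to each other, so a single run of same-component cells that contains every same-component 4-neighbour gives a detour around the removed pixel.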

Apparently that was enough to get the best score on some "normal looking" images, but worked terribly on tests like 3, 10 or 12 where you kind of needed to just hardcode a spanning tree/forest. For these tests I did the following:

Let's find all connected components, assuming that two pixels are connected if the color difference between them is small enough. For test 3 just set some threshold for black/white

Let's merge all small components into their larger neighbours, it especially helps in test 10 with all the holes in the images

Then do something like Kruskal's algorithm — on each iteration for every pair of components try to build a path between them, connect them, and check how it affects the score. Unite the two with the minimal effect. I didn't implement this in the most optimal way, so it took a few minutes to run, but having it work once was enough

And run my beloved annealing in the end
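The first step of this list, finding components under a colour threshold, can be sketched with a plain BFS flood fill. The sketch assumes grayscale values and 4-connectivity; `threshold_components` and `thr` are illustrative names, and the returned sizes are what a later "merge small components" pass would look at.

```python
from collections import deque

def threshold_components(img, thr):
    """Label 4-connected components where neighbouring pixels differ by at
    most thr. Returns (labels, sizes): a label grid and a list of sizes."""
    h, w = len(img), len(img[0])
    lab = [[-1] * w for _ in range(h)]
    sizes = []
    for sy in range(h):
        for sx in range(w):
            if lab[sy][sx] != -1:
                continue
            cid = len(sizes)          # next free component id
            lab[sy][sx] = cid
            queue = deque([(sy, sx)])
            size = 0
            while queue:
                y, x = queue.popleft()
                size += 1
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < h and 0 <= nx < w and lab[ny][nx] == -1
                            and abs(img[ny][nx] - img[y][x]) <= thr):
                        lab[ny][nx] = cid
                        queue.append((ny, nx))
            sizes.append(size)
    return lab, sizes
```

Note that the threshold is applied between neighbouring pixels, so a smooth gradient can end up in one component even if its two ends differ by much more than `thr`.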

At this point I wanted my annealing to be able to split some component and then merge something else, so it could fix some non-optimal "edges" in tests 3 or 10. But I don't know how to do it in a sane way, would love to hear any ideas.

In the end I just ran a lot of annealings for the best solution of every test again and again, and it improved the score for most of them. I was especially surprised how it managed to fix test 11 — my final solution looks like this (two purple components on the black background):

But initially it looked something like this

and every annealing run which took a few seconds added another one or two letters to each component (thanks to intentionally high starting temperature I suppose).

It also did a similar thing on test 12, but there it didn't look that cool. Nevertheless, here are all of my final in-contest solutions: https://drive.google.com/drive/folders/15WX2bZ1TGCj3DzmVQ5QgZwvvT5VV9660?usp=drive_link. Just look at this beauty of a solution for test 4 for example:

For problem E, I implemented a not-very-advanced solution, and it surprisingly remained okay-ish until the end of the contest (2700 points compared to the top score of 3300). My main ideas were:

For R=2, let's write a separate solution: every connected component must be a cycle, so let's write down the permutation and do annealing for it. Mutation would be just swapping any two nodes, then it's enough to recalculate scores only for paths starting or ending at one of these two nodes.

For other R, take the previous solution for R=2 and try to add edges on top of it...

I tried to come up with some way to efficiently do local optimizations/annealing on a final graph and couldn't. The idea from TheScrasse that you can just swap nodes even if your graph is not a cycle is quite beautiful. Is there something you can do for moving edges around?
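A minimal sketch of annealing over a permutation with swap moves and an incremental score update. The real scoring of problem E is not reproduced here: as a stand-in, the sketch assumes a single cycle, a hypothetical symmetric demand matrix `w`, and a cost equal to the sum of `w[u][v]` times the ring distance between the positions of `u` and `v`. The point it illustrates is that after swapping two nodes only the terms involving those two nodes need to be recomputed.

```python
import math
import random

def ring_dist(i, j, n):
    d = abs(i - j)
    return min(d, n - d)

def node_cost(u, pos, w):
    # Cost of all pairs that involve node u (stand-in scoring, see lead-in).
    n = len(pos)
    return sum(w[u][v] * ring_dist(pos[u], pos[v], n)
               for v in range(n) if v != u)

def total_cost(pos, w):
    n = len(pos)
    return sum(w[u][v] * ring_dist(pos[u], pos[v], n)
               for u in range(n) for v in range(u + 1, n))

def anneal(w, iters=20000, t0=2.0, t1=0.01, seed=0):
    """Minimise the stand-in cost; pos[u] is the position of node u on the cycle."""
    rng = random.Random(seed)
    n = len(w)
    pos = list(range(n))
    cur = total_cost(pos, w)
    best, best_pos = cur, pos[:]
    for it in range(iters):
        temp = t0 * (t1 / t0) ** (it / iters)   # geometric cooling schedule
        a, b = rng.sample(range(n), 2)
        before = node_cost(a, pos, w) + node_cost(b, pos, w)
        pos[a], pos[b] = pos[b], pos[a]
        # The (a, b) term is unchanged by the swap, so it cancels in delta.
        delta = node_cost(a, pos, w) + node_cost(b, pos, w) - before
        if delta <= 0 or rng.random() < math.exp(-delta / temp):
            cur += delta
            if cur < best:
                best, best_pos = cur, pos[:]
        else:
            pos[a], pos[b] = pos[b], pos[a]     # reject: undo the swap
    return best, best_pos
```

Each iteration costs O(n) instead of the O(n²) a full rescoring would take, which is what makes a large number of annealing iterations affordable.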

I experienced death from geometry in problem C, don't think I had any unique ideas here. Apparently it's crucial to have a "smooth" path all the way, and the most I managed to do during the contest was to check whether I can visit segments from [i..j] in a single "stroke" (arc or segment), and then do dp on that (also let's mark K evenly distributed points on every gate and then try to go from every point on gate i to every point on gate j so that dp actually works).

A few days after the contest I implemented some UI to draw a path manually and it (would have) improved our score on most tests by a factor of 2x-3x; apparently there are teams who just did that during the contest.
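The "dp on that" step can be sketched as an interval-partition dp. This is a simplification under assumptions: the geometric test is hidden behind a hypothetical caller-supplied predicate `can_stroke(i, j)`, the objective is just the number of strokes, and the extra state for the K marked points on each gate is left out.

```python
def min_strokes(n, can_stroke):
    """Split gates 0..n-1 into the fewest consecutive groups such that each
    group [i..j] satisfies can_stroke(i, j). Returns a list of (i, j) pairs,
    or None if no valid split exists."""
    INF = float("inf")
    dp = [INF] * (n + 1)      # dp[j]: fewest strokes covering gates 0..j-1
    cut = [-1] * (n + 1)      # cut[j]: start of the last stroke in that optimum
    dp[0] = 0
    for j in range(1, n + 1):
        for i in range(j):
            if dp[i] + 1 < dp[j] and can_stroke(i, j - 1):
                dp[j] = dp[i] + 1
                cut[j] = i
    if dp[n] == INF:
        return None
    groups = []
    j = n
    while j > 0:              # walk the cut pointers back to recover the split
        groups.append((cut[j], j - 1))
        j = cut[j]
    return groups[::-1]
```

For example, with a toy predicate that allows at most three gates per stroke, `min_strokes(7, lambda i, j: j - i < 3)` returns a split into three groups.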

How did you manage to connect distant components with simulated annealing? Did you calculate the distance between components and put it as a penalty in your formula?

Well that's the problem, I didn't do that with annealing because I don't know how. For tests like 3 or 10 I essentially ran that deterministic Kruskal-like algorithm only once during the whole contest to build a spanning forest (for every two components, try to connect the pair just by running BFS, check that this path didn't accidentally break anything else, pick the pair which affects RMSE the least, repeat from the start).
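The "connect the pair just by running BFS" step can be sketched as a multi-source BFS from one component until it reaches the other. This is an illustration, with `lab` a hypothetical 2D grid of component labels. It only finds the connecting pixels; the check that the path does not break some other component, and the RMSE comparison between candidate pairs, are not included.

```python
from collections import deque

def connecting_path(lab, a, b):
    """Shortest 4-connected path from component a to component b.

    Returns the pixels strictly between the two components (possibly an
    empty list if they already touch), or None if b is unreachable."""
    h, w = len(lab), len(lab[0])
    prev = {}
    queue = deque()
    for y in range(h):
        for x in range(w):
            if lab[y][x] == a:        # every pixel of a is a BFS source
                prev[(y, x)] = None
                queue.append((y, x))
    while queue:
        cur = queue.popleft()
        if lab[cur[0]][cur[1]] == b:
            path = []
            cur = prev[cur]
            while cur is not None and lab[cur[0]][cur[1]] != a:
                path.append(cur)
                cur = prev[cur]
            return path[::-1]
        y, x = cur
        for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nxt = (y + dy, x + dx)
            if 0 <= nxt[0] < h and 0 <= nxt[1] < w and nxt not in prev:
                prev[nxt] = cur
                queue.append(nxt)
    return None
```

Relabelling the returned pixels to one shared label for `a` and `b` joins the two components into one.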

And only in the end on top of that I ran annealing, where one iteration of annealing could only change the color of a single pixel which wasn't an articulation point. Probably it wasn't very clear from my explanation, but I had only one version of annealing and it was this one, I just used it wherever I could.

If you're talking about tests 11/12 then the components there are only a few pixels away, it's just lucky random from high temperature, I optimized straight up the final score.

Got it, I had missed the last paragraph.