Hello,

I created a project for training and analyzing your performance during contests: cp-trainer.com. I believe it has some unique features not available on existing competitive programming platforms and can serve as a more sensible training tool, especially for more experienced users who have already participated in a number of contests. Below I briefly describe its functionality:

Problem recommender

Contest training picker

In this module the user can choose one of 4 contest modes, depending on what he wants to train:

- Speed — Average time difference with problems of specific tag.

- Hard problems — The contest consists of 3 problems from the list of 10 in the problem recommender.

- Weaknesses — The contest consists of 3 problems, all from topics that the user is weak at. The rough idea is to pick 3 tags at random from the 5 tags with the lowest value of the compute_tag_rating metric and, for each tag, take the problem whose rating is as close as possible to the user's predicted rating on that tag. In practice a comparator is used that also takes into account the freshness of a problem (we would rather solve problems from more recent contests, since problems have changed a lot in recent years).

- Upsolve — The contest consists of 3 problems that the contestant didn't solve during a contest (he either had rejected submissions on them, or they were the first problems in the contest that he didn't get accepted), picked by best quality according to the problem recommender.
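The rough idea behind the Weaknesses mode can be sketched as follows. This is only an illustration under assumptions, not the site's actual code: the `Problem` class, the `pick_weakness_problems` function, the `staleness_penalty` weight and the way freshness enters the comparator are all hypothetical, and the `tag_ratings` dictionary stands in for the output of compute_tag_rating (used here also as the predicted rating on the tag).

```python
import random
from dataclasses import dataclass


@dataclass
class Problem:
    name: str
    tag: str
    rating: int
    year: int  # year of the contest the problem comes from


def pick_weakness_problems(tag_ratings, problems, current_year, rng=None,
                           n_weak=5, n_pick=3, staleness_penalty=50):
    """Pick one problem for each of n_pick random tags among the n_weak weakest."""
    rng = rng or random.Random()
    # Tags sorted by rating, lowest first; keep the n_weak weakest ones.
    weakest = sorted(tag_ratings, key=tag_ratings.get)[:n_weak]
    chosen_tags = rng.sample(weakest, min(n_pick, len(weakest)))

    picked = []
    for tag in chosen_tags:
        candidates = [p for p in problems if p.tag == tag]
        if not candidates:
            continue
        target = tag_ratings[tag]
        # Hypothetical comparator: distance to the target rating,
        # plus a penalty for every year of age of the problem.
        best = min(
            candidates,
            key=lambda p: abs(p.rating - target)
            + staleness_penalty * (current_year - p.year),
        )
        picked.append(best)
    return picked
```

With this comparator an older problem with a perfectly matching rating can lose to a recent problem that is slightly off, which is the trade-off the freshness remark describes.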

Note: The duration of the contest (never greater than 5 hours) is calculated automatically from the expected time to solve the problems (the user should be able to solve some problems so as not to get discouraged, but of course not all of them). The contestant can optionally limit this time by setting a max duration; however, this is not recommended, because the system will simply discard some problems from the problemset to fit the time.
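A minimal sketch of how such a duration rule could work is shown below. The exact formula is not given in this post, so the sketch makes assumptions: the duration is taken as the sum of the expected solving times, capped at 5 hours, and when a max duration is set the most time-consuming problems are discarded first. The names `plan_contest` and `MAX_CONTEST_MINUTES` are hypothetical.

```python
MAX_CONTEST_MINUTES = 5 * 60  # contests never last longer than 5 hours


def plan_contest(expected_minutes, max_duration=None):
    """Return (kept problem names, duration in minutes).

    expected_minutes: list of (problem_name, expected_minutes_to_solve).
    max_duration: optional user limit in minutes.
    """
    limit = MAX_CONTEST_MINUTES
    if max_duration is not None:
        limit = min(limit, max_duration)

    # Assumption: order problems by expected time, quickest first.
    kept = sorted(expected_minutes, key=lambda item: item[1])
    # Discard the slowest problems until the set fits; always keep one.
    while len(kept) > 1 and sum(minutes for _, minutes in kept) > limit:
        kept.pop()

    duration = min(limit, sum(minutes for _, minutes in kept))
    return [name for name, _ in kept], duration
```

For example, `plan_contest([("A", 40), ("B", 90), ("C", 150)])` keeps all three problems, while passing `max_duration=120` leaves only problem A, which shows why setting a limit shrinks the problemset.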

User stats

Compare yourself against the others

The idea of this section is to show the user how much worse he is than the average contestant of a specific rank. This is done by taking statistics for 40 random users of that rank who participated in >=50 contests and registered at least 3 years ago (this should approximate a typical user of that rank well).
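The selection of the reference group could look roughly like this. It is a sketch under assumptions: the `User` record and the `sample_reference_users` function are hypothetical names, and only the three criteria stated above (same rank, at least 50 contests, registered at least 3 years ago) plus the sample size of 40 come from the description.

```python
import random
from dataclasses import dataclass
from datetime import date


@dataclass
class User:
    handle: str
    rank: str
    contests: int
    registered: date


def sample_reference_users(users, rank, today, rng=None,
                           sample_size=40, min_contests=50, min_years=3):
    """Randomly sample experienced, long-registered users of the given rank."""
    rng = rng or random.Random()

    def old_enough(user):
        # Compare (year, month, day) tuples to avoid leap-day edge cases.
        reg = user.registered
        return (reg.year + min_years, reg.month, reg.day) <= (
            today.year, today.month, today.day)

    eligible = [
        u for u in users
        if u.rank == rank and u.contests >= min_contests and old_enough(u)
    ]
    # If fewer than sample_size users qualify, take all of them.
    return rng.sample(eligible, min(sample_size, len(eligible)))
```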

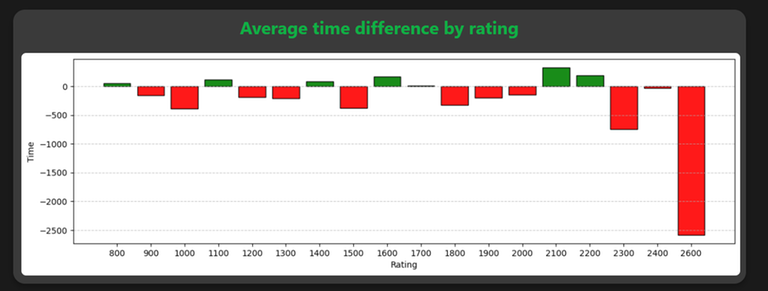

The picture above shows a sample statistic comparing me with the average grandmaster: the average time difference (in seconds) in solving problems of a specific rating. As we can see, I am slower at most ratings, especially the hardest ones.

The included statistics are (the time unit is in seconds):

- Average time difference by tag — Average time difference on problems with a specific tag.

- Average time difference by rating — Average time difference on problems with a specific rating.

- Average accuracy difference — Average difference in the number of submissions needed to get a problem with a specific tag accepted. It can help to understand which types of problems cause us more implementation trouble.

- Average highest solved problem — Average rating difference of the hardest problem solved in a contest. This helps to understand how much higher-rated problems we should solve on average in a contest to reach a specific rank.

- Average lowest unsolved problem — Average rating difference of the lowest-rated problem left unsolved in a contest.

Note: Green bars mean the contestant has the advantage; red bars mean the opposite.
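As an illustration, the time-difference statistics and the bar colors could be computed along these lines. This is a sketch under assumptions, not the site's implementation: the function names are hypothetical, and the sign convention (user average minus reference average, so a negative value means the user is faster and gets a green bar) is my choice for the example.

```python
from collections import defaultdict
from statistics import mean


def average_by_key(solves):
    """solves: iterable of (key, seconds); key is a tag or a rating."""
    buckets = defaultdict(list)
    for key, seconds in solves:
        buckets[key].append(seconds)
    return {key: mean(values) for key, values in buckets.items()}


def time_difference(user_solves, reference_solves):
    """Return {key: (difference_in_seconds, bar_color)} for shared keys."""
    user_avg = average_by_key(user_solves)
    ref_avg = average_by_key(reference_solves)
    result = {}
    # Only keys present on both sides can be compared.
    for key in sorted(user_avg.keys() & ref_avg.keys()):
        diff = user_avg[key] - ref_avg[key]
        # Negative difference: the user is faster, so he has the advantage.
        result[key] = (diff, "green" if diff < 0 else "red")
    return result
```

The same two helpers work for both the by-tag and the by-rating statistic, since only the key of each `(key, seconds)` pair changes.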

Summary

The goal was to create a tool that analyzes the user's performance during live contests and then, based on that, recommends adequate problems for training. Most apps also include the user's practice stats, which in my opinion doesn't give the best insight, e.g. because outside of a contest you have unlimited time and can also look at the editorial.

If you find any bugs, please let me know and I will try to fix them as soon as possible. Any constructive criticism is also appreciated. Thanks for checking out the platform.