Introduction

I personally find greedy problems hard to derive. After struggling with proofs for quite a while, there are a few things I realized. Greedy proofs are very dependent on the rules of the problem. Loosely speaking each optimization problem gives you observations, from those observations, you realize that if you take certain choices while avoiding all others, then it will always be optimal. That realization is termed Greedy.

There are a lot of optimization problems, but only a small subset allows you to break the problem into subproblems where solving them optimally leads to the best global answer. This property, known as Optimal Substructure, is found in both DP and Greedy algorithms. Sadly, not every problem has this, for example, checking whether $$$N$$$ is prime, or solving a system of $$$N$$$ linear equations where all variables affect each other.

While DP and Greedy share the optimal substructure property, DP is essentially "smart brute force", where you define the subproblems and check all transitions to find the best one. In Greedy, however, you have to rigorously prove that ignoring all other choices and taking just one specific path works every single time. This takes the difficulty one notch up from DP.

The idea behind DP is the same everywhere, gather enough observations to define your subproblems, transitions, and base cases. This same idea is explored in a lot of diverse places—there is DP on bitmasks, trees, digits, ranges and On and on.., but there are not many discussions on Greedy. To fill this gap, I would like to discuss a lot of problems and proofs to capture the essence of how to prove Greedy solutions.

Idea

To prove a greedy solution, the arguments are almost always Proof by Contradiction or Induction. The argument goes like this: let's assume a greedy solution $$$G$$$ and a magical optimal solution $$$O$$$ that doesn't follow the greedy choices. Then, given our observations, we prove that either $$$G$$$ performs no worse than $$$O$$$, or that given the constraints, the final solution produced by both strategies will look exactly the same.On the flip side, among many greedy choices, to disprove one, the easiest way is to find a counter-example.

There are a lot of problems i have referenced some of them are, Kanade's Perfect Multiples, Game on Array, Stay or Mirror, Ticket Hoarding, Maximum Running time of N computers, Group Increases, Xor factorization, Divine Tree, etc. Below i have mentioned the Greedy aspects, and some common greedy problems. Sometimes greedy seems obvious and intuitive but try to form a rigorous argument while proving the below mentioned problems.

Problems

Basic problems

Try to come up with formal arguments, to get the hang of it. Basic don't always mean easy.

P1. I have $$$n$$$ items and knapsack of capacity $$$W$$$. The $$$i$$$th item has a value and weight $$$v_i$$$ and $$$w_i$$$, im allowed to take fractions of items. Find the strategy that maximizes the total value.

Hint 1.1Imagine a scenario when we all unit weights $$$w = \begin{Bmatrix} 1,1,1,\cdots,1 \end{Bmatrix} $$$ and arbitrary $$$v$$$ value array.

Similarly if we have all unit values $$$v = \begin{Bmatrix} 1,1,\cdots,1 \end{Bmatrix} $$$ and arbitrary weight array.

How should we fill the array ? Can we convert original problem to this?

Solution 1.1Since we are allowed to have fractional values and weights. Following the hints, lets divide every $$$v_i$$$ with its corresponding weights $$$w_i$$$, then the problem becomes problem like we have new $$$v$$$ with all unit weighs and contribution of each can be upto $$$w_i$$$.

Let $$$G$$$ = pick the highest value with highest count as possible, say its $$$v_n, v_{n-1}, \cdots , v_m $$$, then with this strategy $$$v_m$$$ the last one will be the only one whose contribution is $$$\leq w_m$$$ .

Let $$$O$$$ be the optimal set = $$$ \begin{Bmatrix} (v_i,u_i) \mid u_i \leq w_i \end{Bmatrix}$$$. We need to prove that $$$O$$$ is same as $$$G$$$.

Claim: Any value $$$v_i \notin O$$$ must be less than every value in $$$O$$$.

Proof: By Contradiction ( Exchange argument ).

If there was such value such that $$$v_j \in O$$$ with contribution $$$u_j$$$, such that $$$v_j \lt v_i $$$ then replacing $$$v_j$$$ by any amount of contribution from $$$v_i$$$ would increase the final total value.

Hence all the values with greater value must be present in $$$O$$$ will full contribution as possible. And only possible partially filled will be smallest value in $$$O$$$. Which means $$$O$$$ will look exactly like $$$G$$$.

P2. I running along have infinitely long number line. I can run for $$$M$$$ units without refreshments. There are $$$n$$$ refreshment stalls where $$$i$$$th stall is located at $$$x_i$$$ on the number line for refreshment breaks. Find the strategy that minimizes number of refreshment breaks.

Hint 2.1If Greedy $$$G$$$ = $$$g_1, g_2, \cdots, g_k$$$, such that if im at $$$g_i$$$ then next stop $$$g_{i+1}$$$ is the furthest value such that $$$g_{i+1} \leq g_i + M$$$ . And $$$O$$$ = $$$o_1, o_2, \cdots o_m$$$,

Then what inequality between $$$g_1$$$ and $$$o_1$$$ can we guarantee ?

Can we generalize it ?

Solution 2.1Optimal solution takes $$$m$$$ steps, which means $$$m \leq k$$$. By definition, $$$ o_1 \leq g_1 $$$.

Claim: $$$o_i \leq g_i $$$ for all $$$i \leq k$$$ .

Proof: By Induction.

Our Base case $$$o_1 \leq g_1 $$$ is trivially true.

Let assume Hypothesis $$$o_i \leq g_i $$$, is true all $$$i \lt k$$$ .

Lets assume $$$o_{i+1} \gt g_{i+1}$$$ , then by definition $$$g_{i+1}$$$ should be the furthest possible refreshment stop, which is a contradiction. Hence $$$o_{i+1} \leq g_{i+1}$$$ .

Using this proof we can also say that if $$$o_m$$$ is the last refreshment stop, then we our $$$G$$$'s last stop must at $$$m$$$-th or before. Hence $$$k \leq m$$$. But since $$$m$$$ is the optimal value then $$$k=m$$$. Which means $$$G$$$ performs not worse than $$$O$$$.

P3. Given 2 integer arrays $$$a$$$ and $$$b$$$ of size $$$n$$$ filled with positive integers. Reorder arrays to maximize $$$\prod_{i=1}^{n} a_{i}^{b_i}$$$. Too easy :P

Hint 3.1What if problem was $$$ \sum_{i=1}^{n} a_i \cdot b_i $$$ .

What if problem was $$$ \sum_{i=1}^{n} lg(a_i) \cdot b_i $$$ .

What if problem was $$$ \sum_{i=1}^{n} f(a_i) \cdot g(b_i) $$$ . $$$f$$$ is monotonically increasing function and $$$g$$$ is monotonically decreasing function.

Will the argument remains the same ?

Solution 3.1Lets sort array $$$a$$$ and reorder the respective elements in $$$b$$$. Strategy $$$G$$$ be, sort $$$b$$$ such that high values of $$$a$$$ gets high value of $$$b$$$. Let optimal sequence be $$$O$$$.

Claim: Sequence $$$O$$$ must not contain any inversion.

Proof: By Contradiction ( Exchange argument ).

If there was inversion, say at $$$i \lt j$$$ and $$$b_i \gt b_j$$$ . Currently final product looks like $$$ = \cdots a_{i}^{b_i} \cdots a_{j}^{b_j} \cdots $$$ and after swapping $$$b_i$$$ and $$$b_j$$$ product will be $$$ = \cdots a_{i}^{b_j} \cdots a_{j}^{b_i} \cdots $$$. If we divide the products Before upon After $$$ = a_{i}^{b_i - b_j} / a_{j}^{b_i - b_j} = \left( a_i / a_j \right)^{b_i - b_j}$$$ . This fraction is greater than $$$1$$$ and the integer in power is positive integer. Hence correcting every inversion will increase the value. Hence $$$O$$$ must not contain any inversion.

Not having any inversion is the sufficient condition to make $$$O$$$ sequence look exactly like $$$G$$$. Hence $$$G$$$ is as optimal as $$$O$$$.

P4. Given array of $$$n$$$ activities, $$$i$$$th activity takes $$$p_i$$$ time to complete. I want to schedule activities such activities dont overlap,

- Let $$$c_i$$$ be the completion time of the activities. Say $$$i$$$th activity starts at $$$x$$$th time, then its completion time is $$$c_i=x+p_i$$$. Minimize the $$$ \cdot \sum_{i=1}^{n} c_i$$$ , or divide by $$$n$$$ to call it minimum average completion time.

- Given another array $$$s$$$ that tells the starting time of the activities. Find the maximum set of activities you take.

Hint 4.1Convert this problem to P3.

Note: Graph is denoted with $$$H$$$ , as the letter $$$G$$$ represents greedy solution.

By now, we must have gotten some idea about how we try to find properties that connect the greedy solution $$$G$$$ and the optimal solution $$$O$$$ to make them look the same. The same applies to problems involving graphs or trees, where we assume a certain optimal tree $$$T_{O}$$$, or some subgraph $$$H_{O} \subset H$$$, or some partially constructed subtree $$$T_{O}' \subset T_{O} \subset H$$$ basically anything concrete that we can play with and try to form some concrete arguments about their behavior.

P5. Given a undirected graph $$$H=(V,E)$$$ with non-negative weights.

- Find its Minimum spanning tree.

- Find strategy to find the 2nd best Minimum spanning tree.

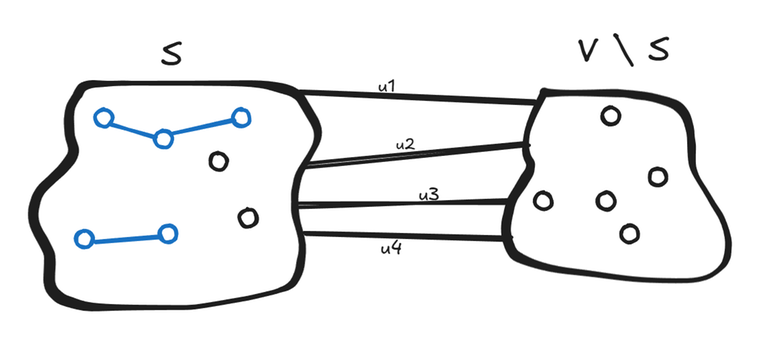

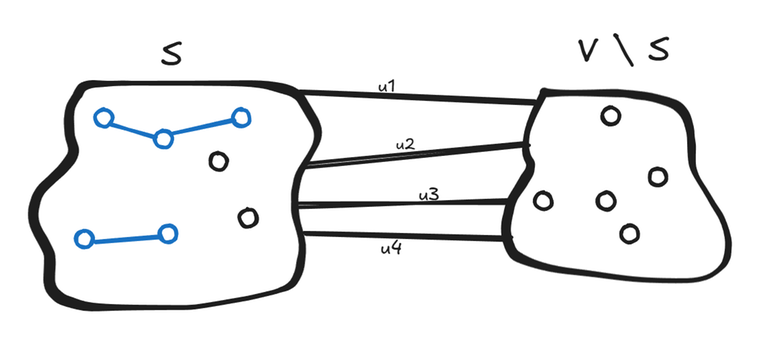

Hint 5.1.1Let $$$T$$$ be the minimum spanning tree. $$$S$$$ be the some arbitrary set of nodes and $$$A$$$ be the set of edges denoted in blue belongs to $$$T$$$. $$$S$$$ is made such that edges from $$$ S $$$ to $$$V \setminus S$$$ are not part of $$$T$$$, hence not denoted in blue.

Will any of the $$$u_i$$$ edge be the part of $$$T$$$ ?

If yes, then which $$$u_i$$$ edge should i pick ? we had drawn boundary around $$$A$$$ because in bucket of choices of edges we don't want edges that are already in $$$T$$$.

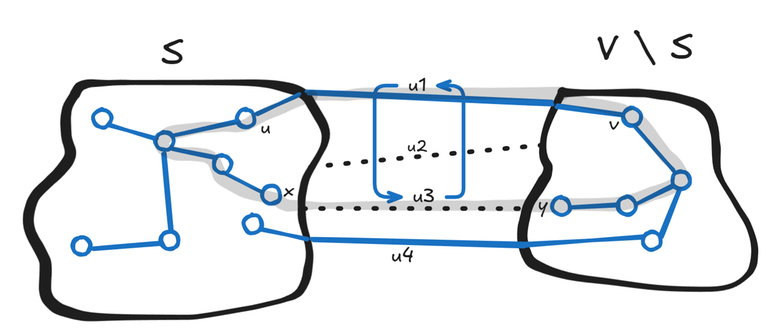

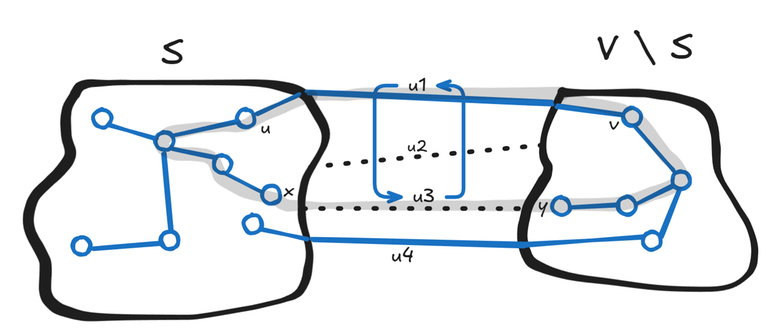

Hint 5.1.2Will any of the $$$u_i$$$ edge be the part of $$$T$$$ ? Yes, otherwise final tree would be disconnected.

If yes, then which $$$u_i$$$ edge should i pick ? Though there can many crossed edge present in the final MST, can we guarantee that the minimum one will always be present in some MST ? . Lets say minimum edge is $$$u_3$$$ with vertices $$$(x,y)$$$.

Claim: Yes we can guarantee.

Proof: By Construction ( Exchange argument ).

Lets say we have some MST $$$T'$$$ that dont contain edge between $$$(x,y)$$$, instead say it contains edge between $$$(u,v)$$$. If we connect $$$(x,y)$$$ it will form a graph with a cycle, and cycle contains $$$(u,v)$$$ and $$$(x,y)$$$ both, because these 2 edges are among the edges that connects $$$S$$$ with $$$V \setminus S$$$. Then removing edge from this cycle will result in tree, and if i remove $$$(u,v)$$$ then cost of this new tree wont increase, hence resulting tree is a valid MST.

Im not saying $$$T'$$$ is not an MST, im saying there is another valid MST with $$$(x,y)$$$ being one of the its edge.

Solution 5.1Now solution is simple. We have $$$A$$$ a set of edges that are present in MST. We know a way to add new edges to this set $$$A$$$. We should keep adding edges until we form a single tree. If you have noticed carefully what we just did was Proof by Induction. Just like we did in previous problems, instead of array now we have a graph $$$H$$$.

Base Case: $$$A = \phi$$$, we will take no edges as zero weight tree, which is lowest weight possible with non-negative edges. Hence its trivially true.

Hypothesis: We have assumed $$$A$$$ is part of edges present in MST. And by above hint we have proved, we can grow $$$A \cup (x,y)$$$ with minimum choice. Hence cost of the tree made by Greedy wont be worse than MST made by any other algorithm.

Practically you can either grow, by making $$$S = A$$$ every time, since at first $$$A = \phi$$$, we can make any partition and add a single edge into it, and continue like this

Or, since we know we are picking minimum edges all the time, so we can sort them, and try to add into $$$A$$$ one by one. In this case at every iteration, say i have an edge $$$(x,y)$$$, if $$$x$$$ and $$$y$$$ are already connected through some path from edges in $$$A$$$, then i cannot form a partition $$$S$$$. Otherwise, if MST is not completely built, then there are still some disconnected parts not joined by edges from $$$A$$$, so partition is possible you just need to keep looking. At the end through induction we are guaranteed to have minimum spanning tree.

Do these 2 algorithms sounds familiar ?

Hint 5.2Second best MST, differ in say how many edges from The best MST ? $$$1$$$ , or $$$2$$$ ?

BonusThese are the exact same steps to prove the Dijkstra as well. Can you form the argument right ?

Base Case, Hypothesis, and Inductive Step.

Ideas used in above

- Kanade's Perfect Multiples,

- Game on Array,

- Stay or Mirror,

- Ticket Hoarding,

- Maximum Running time of N computers,

- Group Increases,

- Xor factorization,

- Divine Tree,