This blog post is a submission for the Codeforces Month of Blog Posts Pt. III challenge. Thank you cadmiumky for the initiative!

This is how I wish I had been introduced to Greedy. Learning how to prove is the truly valuable skill, one that I believe good Greedy problems test rigorously.

Introduction

After struggling with proofs for quite a while, there are a few things I realized. Greedy proofs are very dependent on the rules of the problem. Loosely speaking each optimization problem gives you observations, from those observations, you realize that if you take certain choices while avoiding all others, then it will always be optimal. That realization is termed Greedy.

There are a lot of optimization problems, but only a small subset allows you to break the problem into smaller independent subproblems where solving them optimally leads to the best global answer. This property, known as Optimal Substructure, is found in both DP and Greedy algorithms. We won't go into those details here, instead we will generally assume our problems have the Optimal Substructure property.

Short NoteThis is a deep topic involving complex conditions of optimality, which is outside our current scope. Typically involving online queries or randomization, such as the example below

For example, imagine a "black box" array that is constantly shuffled between queries. We know an element exists $$$\lceil n/3 \rceil$$$ times, and we have a $$$\text{query}(x)$$$ function that returns the count of $$$x$$$. Because the array has no fixed order (no structure to exploit), we cannot decompose it. The only viable strategy is Random Sampling, probabilistically, we are guaranteed to find the answer within a few dozen queries.

I'm sorry, I couldn't find or able to think any good examples.

While DP and Greedy share the optimal substructure property, DP is essentially "smart brute force", where you define the subproblems and check all transitions to find the best one. In Greedy, however, you have to rigorously prove that ignoring all other choices and taking just one specific path works every single time. This takes the difficulty one notch up from DP.

The idea behind DP is the same everywhere, gather enough observations to define your subproblems, transitions, and base cases. This same idea is explored in a lot of diverse places—there is DP on Bitmasks, Trees, Graphs, Digits, Ranges, Weird DP Sorcery? and On and on.., but there are not many discussions on Greedy. To fill this gap, I would like to discuss a lot of problems and proofs to capture the essence of how to prove Greedy solutions.

Idea

To prove a greedy solution, the arguments are almost always Proof by Contradiction or Induction. The argument goes like this: let's assume a greedy solution $$$G$$$ and a magical optimal solution $$$O$$$ that doesn't follow the greedy choices. Then, given our observations, we prove that either $$$G$$$ performs no worse than $$$O$$$, or that given the constraints, the final solution produced by both strategies will look exactly the same. On the flip side, among many greedy choices, to disprove one, the easiest way is to find a counter-example.

Below, I have listed links to various problems and discussed the greedy ideas they use, along with some common greedy examples. Sometimes Greedy seems obvious and intuitive, but I encourage you to try forming a rigorous argument while proving the problems mentioned below.

Problems

I. Basic problems (without links)

Try to come up with formal arguments to get the hang of it. Basic doesn't always mean easy.

P1. I have $$$n$$$ items and a knapsack of capacity $$$W$$$. The $$$i$$$-th item has value $$$v_i$$$ and weight $$$w_i$$$. I am allowed to take fractions of items. Find the strategy that maximizes the total value.

HintImagine a scenario where all items have unit weights $$$w = \begin{Bmatrix} 1,1,1,\cdots,1 \end{Bmatrix} $$$ and but arbitrary value array $$$v$$$ .

Similarly, what if we have all unit values $$$v = \begin{Bmatrix} 1,1,\cdots,1 \end{Bmatrix} $$$ and arbitrary weights.

How should we fill the knapsack in these cases? Can we convert the original problem to look like this?

SolutionSince we are allowed to take fractional amounts, we can normalize the problem. Following the hints, let's divide every value $$$v_i$$$ by its corresponding weight $$$w_i$$$ to get the value density (value per unit weight). Now, the problem transforms into selecting "units" of weight, where each unit from item $$$i$$$ contributes $$$v_{i \text{ new}}=v_i/w_i$$$ to the total score, and contribution of each can be upto $$$w_i$$$.

Let $$$G$$$ be the Greedy strategy: pick the item with the highest density first, taking as much as possible, then the next highest, and so on, say its $$$v_n, v_{n-1}, \cdots , v_m $$$, in this strategy, at most one item (the last one added) will have a partial contribution (weight $$$ \leq w_m$$$) .

Let $$$O$$$ be the optimal set of chosen items = $$$ \begin{Bmatrix} (v_i,u_i) \mid u_i \leq w_i \end{Bmatrix}$$$, where $$$u_i$$$ is the contribution taken from item $$$i$$$. We need to prove that $$$O$$$ is same as $$$G$$$.

Claim: Any value $$$v_i \notin O$$$ must be less than every value in $$$O$$$.

Proof: By Contradiction ( Exchange argument ).

If there was such value such that $$$v_j \in O$$$ with contribution $$$u_j$$$, such that $$$v_j \lt v_i $$$ then replacing $$$v_j$$$ by any amount of contribution from $$$v_i$$$ would increase the final total value, say we remove a small amount of weight $$$\epsilon$$$ from item $$$j$$$ and replace it with amount $$$\epsilon$$$ of item $$$i$$$.

Extra space used is $$$-\epsilon + \epsilon = 0$$$ (Knapsack is still valid) and the total value change is $$$ -\epsilon v_j + \epsilon v_i \gt 0$$$, the total value increases.

This contradicts the assumption that $$$O$$$ was optimal. Therefore, the Optimal solution must prioritize items with higher density, filling them completely before touching lower-density items. This is exactly the Greedy strategy $$$G$$$

P2.I am running along an infinitely long number line. I can run for at most $$$M$$$ units without refreshments. There are $$$n$$$ refreshment stalls, where the $$$i$$$-th stall is located at $$$x_i$$$. Find the strategy that minimizes the number of refreshment breaks.

HintLet Greedy strategy $$$G$$$ = $$$g_1, g_2, \cdots, g_k$$$, where we simply choose the furthest reachable stall, if I am at $$$g_i$$$ then the next stop $$$g_{i+1}$$$ is the largest value such that $$$g_{i+1} \leq g_i + M$$$ . Let Optimal strategy $$$O$$$ = $$$o_1, o_2, \cdots o_m$$$,

What inequality between $$$g_1$$$ and $$$o_1$$$ can we guarantee? Who is further ahead?

Can we generalize this for any step $$$i$$$?

SolutionLet $$$k$$$ be the number of stops for Greedy, and $$$m$$$ be the number of stops for the Optimal solution. Since Optimal minimizes stops, we know $$$m \leq k$$$. By definition, $$$ o_1 \leq g_1 $$$.

Claim: $$$o_i \leq g_i $$$ for all $$$i \leq k$$$ .

Proof: By Induction.

Base case: From the start, Greedy picks the furthest reachable stall. Optimal picks some reachable stall. Therefore, $$$g_1 \geq o_1$$$ is trivially true.

Inductive Hypothesis: $$$o_i \leq g_i $$$, is true till $$$i$$$-th step .

From our hypothesis, $$$g_i \geq o_i$$$, which implies $$$g_i + M \geq o_i + M \geq o_{i+1}$$$.This means $$$o_{i+1}$$$ is a reachable stall from the current Greedy position $$$g_i$$$. By definition, Greedy picks the furthest reachable stall. Thus, $$$g_{i+1}$$$ must be at least as far as $$$o_{i+1}$$$.

By the time Optimal finishes (at step $$$m$$$), it has reached the destination ($$$o_m \geq \text{Target}$$$). Since $$$g_m \geq o_m$$$, Greedy has also reached the destination by step $$$m$$$. This implies Greedy needs no more steps than Optimal, so $$$k \leq m$$$. Since $$$m$$$ is the minimum possible, we must have $$$k = m$$$.

P3. Given two integer arrays $$$a$$$ and $$$b$$$ of size $$$n$$$ filled with positive integers, reorder the elements within the arrays to maximize $$$\prod_{i=1}^{n} a_{i}^{b_i}$$$. Too easy :P

HintWhat if the problem was to maximize $$$\sum_{i=1}^{n} a_i \cdot b_i$$$?

What if the problem was to maximize $$$\sum_{i=1}^{n} \lg(a_i) \cdot b_i$$$?

What if the problem was to maximize $$$\sum_{i=1}^{n} f(a_i) \cdot g(b_i)$$$, where $$$f$$$ is monotonically increasing and $$$g$$$ is monotonically decreasing?

Will the argument remain the same?

SolutionSince we have to match the respective elements, rearranging them won't be an issue, so for simplicity, let's sort array $$$a$$$ in ascending order. Strategy $$$G$$$: Sort $$$b$$$ in ascending order as well, so that larger values of $$$a$$$ are paired with larger values of $$$b$$$. Let $$$O$$$ be the optimal sequence of $$$b$$$.

Claim: The sequence $$$O$$$ must not contain any inversions relative to $$$a$$$.

Proof: By Contradiction ( Exchange argument ).

Suppose $$$O$$$ is optimal but contains an inversion. That is, there exist indices $$$i \lt j$$$ (so $$$a_i \le a_j$$$) where $$$b_i \gt b_j$$$.The current product contribution is $$$P_{\text{current}} = a_{i}^{b_i} \cdot a_{j}^{b_j}$$$.If we swap $$$b_i$$$ and $$$b_j$$$ to remove this inversion, the new contribution is $$$P_{\text{new}} = a_{i}^{b_j} \cdot a_{j}^{b_i}$$$.

Let's look at the ratio $$$\frac{P_{\text{new}}}{P_{\text{current}}}$$$:

$$$\frac{a_{i}^{b_j} \cdot a_{j}^{b_i}}{a_{i}^{b_i} \cdot a_{j}^{b_j}} = a_i^{b_j - b_i} \cdot a_j^{b_i - b_j} = \left( \frac{a_j}{a_i} \right)^{b_i - b_j}$$$Since $$$a_j \ge a_i$$$, the base $$$\frac{a_j}{a_i} \ge 1$$$.Since $$$b_i \gt b_j$$$, the exponent $$$b_i - b_j$$$ is a positive integer. Therefore, the ratio is $$$\ge 1$$$, which means $$$P_{\text{new}} \ge P_{\text{current}}$$$.

Correcting every inversion increases (or maintains) the total product. Thus, the Optimal sequence $$$O$$$ must be sorted exactly like $$$G$$$.

P4. Given an array of $$$n$$$ activities, where the $$$i$$$-th activity takes $$$p_i$$$ time to complete. I want to schedule activities such that they don't overlap.

- Let $$$c_i$$$ be the completion time of the $$$i$$$-th activity in the schedule. If the $$$i$$$-th activity starts at time $$$x$$$, then its completion time is $$$c_i = x + p_i$$$. Goal is to minimize $$$\sum_{i=1}^{n} c_i$$$ (which is equivalent to minimizing the average completion time).

HintConvert this problem to P3.

Can you express the total sum of completion times as a linear combination of processing times $$$p_i$$$?

SolutionLet's look at the contribution of each task to the total sum,

$$$c_1 = p_1$$$ ,

$$$c_2 = p_1 + p_2$$$ ,

$$$c_3 = p_1 + p_2 + p_3$$$ ,

Total Sum = $$$n \cdot p_1 + (n-1) \cdot p_2 + \dots + 1 \cdot p_n$$$ ,

In every permutation of activities, the activity placed first will be summed $$$n$$$ times, the second activity $$$n-1$$$ times, and so on. So, it is just like P3 (Rearrangement Inequality), where we want to minimize the sum of products $$$\sum A_i \cdot B_i$$$. Array $$$A = $$$ processing times $$$p$$$ and array $$$B = \begin{Bmatrix} n, n-1, \dots, 1 \end{Bmatrix}$$$.

To minimize the sum, we must pair the smallest $$$p_i$$$ with the largest multiplier ($$$n$$$). Therefore, we should sort the activities in ascending order of duration (Shortest Job First).

- Each activity has a start time $$$s_i$$$ and a finish time $$$f_i$$$. Find the maximum number of non-overlapping activities you can perform.

HintLet the bold intervals be the set $$$O$$$ (the optimal set of activities), and the dotted intervals be the remaining available activities. Looking at the diagram, which $$$b_i$$$ from the available pool can I safely swap with an $$$a_j$$$ from the optimal set?

Can I replace $$$a_2, a_3, a_4$$$ with $$$b_2, b_4, b_5$$$? Maybe pick the one with the fewest overlaps, like $$$b_2$$$? Or the shortest interval, like $$$b_5$$$? Does this strategy always work?

Can I replace $$$a_1$$$ with $$$b_1$$$? Or $$$a_7$$$ with $$$b_6$$$? Is this swap always safe?

A Greedy strategy must be reliable in every scenario.

Hint - Shortest Duration First? To test this, let's draw a counter-example using only 2 types of lengths. If it fails for just 2 lengths, it will definitely fail for general cases. Consider this scenario, Here, picking the shortest interval (middle) blocks two longer ones. We can easily see that the "Shortest Duration" strategy is not optimal.

- Least Overlapping First? To test this, let's draw a sample with many intervals of the same length. Consider this scenario, Here, the middle interval overlaps with the fewest others (only 2), while the others overlap with 3. But picking the middle one blocks the optimal solution. Thus, "Least Overlapping" also fails.

SolutionLet $$$O= \begin{Bmatrix} o_1,o_2, \cdots , o_m \end{Bmatrix}$$$ be an optimal set of non-overlapping activities, sorted by their finishing times.

Claim: Let $$$g_1$$$ be the activity with the absolute earliest finish time in the entire dataset. There exists an optimal solution $$$O'$$$ that contains $$$g_1$$$.

Proof: By Exchange Argument. Its actually easy to see. Look at the first activity in our optimal set, $$$o_1$$$. If $$$o_1 = g_1$$$, the claim is true. If $$$o_1 \neq g_1$$$, we know by definition that $$$finish(g_1) \le finish(o_1)$$$. Since $$$o_1$$$ finishes before $$$o_2$$$ starts, and $$$g_1$$$ finishes even earlier, $$$g_1$$$ is also compatible with the rest of the schedule ($$$o_2, \dots, o_m$$$). We can replace $$$o_1$$$ with $$$g_1$$$ to create a new set $$$O' = \begin{Bmatrix} g_1, o_2, \dots, o_m \end{Bmatrix}$$$.

This set is valid and has the same size as $$$O$$$. Thus, $$$O'$$$ is also optimal.

Rest of the proof is exactly is same as P2 .

Let the Greedy solution be $$$G = \begin{Bmatrix} g_1, g_2, \dots, g_k \end{Bmatrix}$$$, where each element is picked using the "earliest finishing time" strategy. Since $$$O$$$ is the maximum possible size, we know $$$k \leq m$$$. We want to prove $$$k = m$$$.

Claim: $$$finish(g_i) \leq finish(o_i)$$$ for all $$$1 \le i \le k$$$.

Proof: By Induction,

Base Case: $$$finish(g_1) \leq finish(o_1)$$$ is true by the definition of the Greedy choice (it picks the global minimum finish time).

Hypothesis: Assume the claim holds for step $$$i$$$: $$$finish(g_i) \leq finish(o_i)$$$.

Inductive Step: We want to prove $$$finish(g_{i+1}) \leq finish(o_{i+1})$$$. Since $$$O$$$ is a valid schedule, $$$start(o_{i+1}) \ge finish(o_i) \ge finish(g_i) $$$. This implies that from all compatible activities, $$$o_{i+1}$$$ is one of the candidate. Since Greedy always picks the earliest finishing time, $$$g_{i+1}$$$'s finishing time will be no further than $$$o_{i+1}$$$'s finishing time.

Since the Optimal schedule ends at $$$o_m$$$, and Greedy ends at (or before) that time ($$$finish(g_m) \leq finish(o_m)$$$), Greedy leaves space for at least as many subsequent ranges. Thus, Greedy finds at least as many activities as Optimal ($$$k \ge m$$$).But since $$$O$$$ is optimal, we must have $$$k = m$$$.

Note: The graph is denoted by $$$H$$$, as the letter $$$G$$$ represents the Greedy solution.

By now, we must have gotten some idea about how to find properties that connect the Greedy solution $$$G$$$ and the Optimal solution $$$O$$$, eventually proving they are the same.The same logic applies to problems involving graphs or trees. We often assume the existence of a specific optimal tree $$$T_{O}$$$, a subgraph $$$H_{O} \subset H$$$, or even a partially constructed subtree $$$T_{O}' \subset T_{O} \subset H$$$ , basically anything concrete that we can "play with" to formulate rigorous arguments about their behavior.

P5. Given an undirected graph $$$H=(V,E)$$$ with non-negative weights.

- Find its Minimum Spanning Tree (MST).

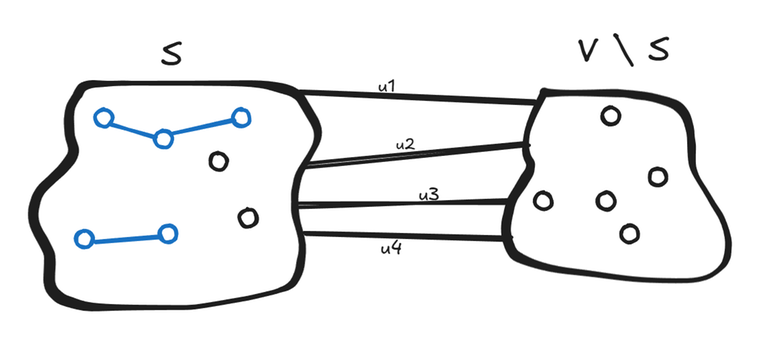

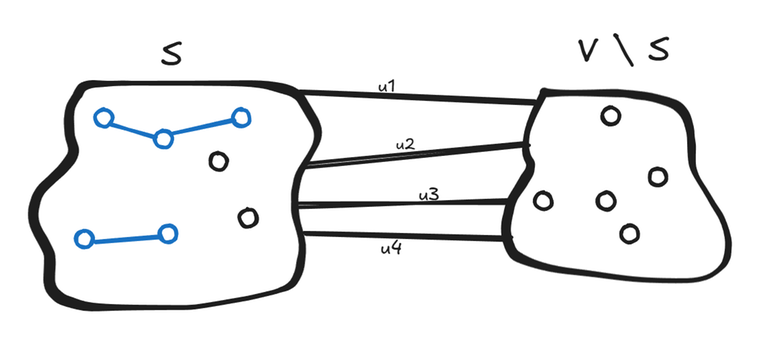

HintLet $$$T$$$ be the Minimum Spanning Tree we are trying to build.

Let $$$S$$$ be an arbitrary set of nodes (a subset of $$$V$$$).

Let $$$A$$$ (denoted in blue) be the set of edges in $$$T$$$ that are strictly inside $$$S$$$.

The edges connecting $$$S$$$ to $$$V \setminus S$$$ (the crossing edges) are not yet part of $$$A$$$.

Will any of the crossing edges $$$u_i$$$ be part of the final MST $$$T$$$? If yes, then which edge $$$u_i$$$ should I pick?

Note: I drew the boundary because, in our bucket of choices, I want to decide from the "fresh" unknown edges, not edges that are already part of the MST.

HintWill any of the crossing edges $$$u_i$$$ be part of $$$T$$$? Yes. If we don't pick at least one edge crossing from $$$S$$$ to the rest of the graph, the final tree would be disconnected.

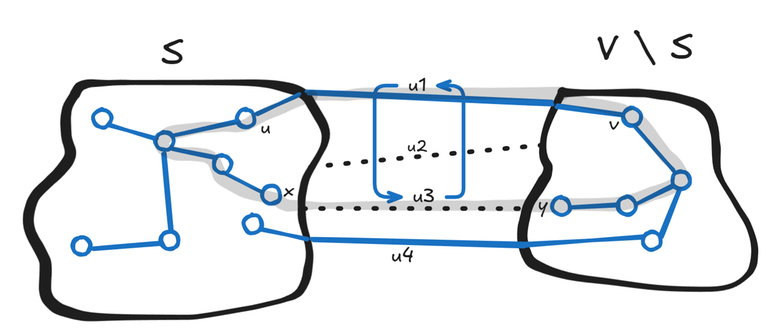

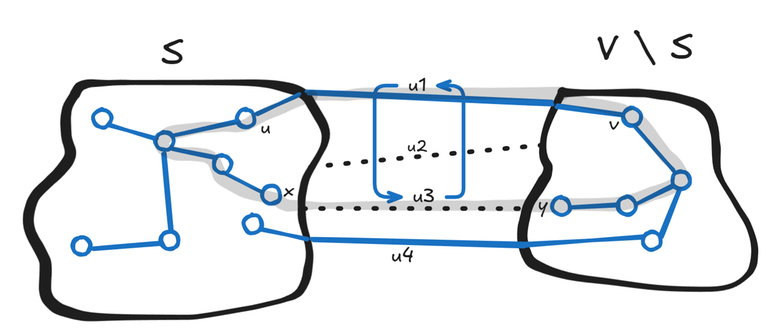

Which edge should I pick? Although the final MST might contain many crossing edges, can we guarantee that the minimum weight crossing edge will always be part of some valid MST? Let's say the minimum weight crossing edge is $$$u_{min}$$$ connecting vertices $$$(x,y)$$$.

Claim: Yes, we can guarantee that there exists an MST containing $$$u_{min}$$$.

Proof: By Construction ( Exchange argument ).

Suppose we have an MST $$$T'$$$ that does not contain the edge $$$(x,y)$$$.Instead, the path from $$$x$$$ to $$$y$$$ in $$$T'$$$ must use some other crossing edge, say $$$(u,v)$$$, to get from $$$S$$$ to $$$V \setminus S$$$. If we add the edge $$$(x,y)$$$ to $$$T'$$$, it creates a cycle. This cycle must cross the boundary between $$$S$$$ and $$$V \setminus S$$$ at least twice (once at $$$(x,y)$$$ to leave $$$S$$$, and at least once more to get back to $$$S$$$).

Let $$$(u,v)$$$ be this other crossing edge on the cycle. Since $$$(x,y)$$$ is the minimal edge by our definition, $$$weight(x,y) \le weight(u,v)$$$. If we remove $$$(u,v)$$$ and keep $$$(x,y)$$$, we break the cycle and restore the tree structure.The cost of this new tree is: $$$Cost(T_{new}) = Cost(T') - weight(u,v) + weight(x,y)$$$. Since $$$weight(x,y) \le weight(u,v)$$$, the new cost is $$$\le Cost(T')$$$.

I am not saying $$$T'$$$ wasn't an MST, I am saying that we can always construct a valid MST that includes the minimum crossing edge.

SolutionNow the solution is simple. Let $$$A$$$ be a subset of edges that are part of some MST. We know a valid way to add new edges to this set $$$A$$$. We just need to keep adding "safe" edges until we form a single spanning tree.

If you noticed, what we just did was essentially a Proof by Induction. Just like in previous problems, instead of an array, we now have a graph $$$H$$$.

Base Case: $$$A = \emptyset$$$. An empty set of edges is trivially a subset of any MST (and has cost 0). Hence, the base case holds.

Inductive Step: Assume $$$A$$$ is currently a subset of some MST. Using the Cut Property proved in the hint, we know there exists a minimum weight crossing edge $$$(u,v)$$$ such that $$$A \cup {(u,v)}$$$ is also a subset of a valid MST. By repeatedly applying this, the tree built by our Greedy strategy is guaranteed to be an MST.

Practically, you can implement this in two ways:

- Make $$$S = A$$$. Start with an arbitrary vertex and keep taking the minimum crossing edge $$$u_{min}$$$ to grow the set of connected vertices.

Or,

- Since we know we always want minimum edges, we can sort all edges by weight.

- If $$$(x,y)$$$ connects two previously disconnected components, we can define $$$S$$$ as the set of vertices in one of those components. Since the edges are sorted, $$$(x,y)$$$ is guaranteed to be the minimum weight edge crossing this specific cut, making it a safe choice.

- If $$$(x,y)$$$ connects vertices that are already in the same component, we cannot form any partition $$$S$$$ where $$$(x,y)$$$ is guaranteed to be the minimum safe cut. If the MST is not completely built, then there are still some disconnected parts not joined by edges from $$$A$$$, so a partition is always possible, you just need to keep looking.

Do these 2 algorithms sound familiar?

- Find a strategy to find the Second Best Minimum Spanning Tree.

HintHow many edges does the Second Best MST differ from the Best MST? Is it $$$1$$$, $$$2$$$, or more?

Solution If it differed by 2 (or more) edges, we could apply our standard Exchange Argument to swap one of those suboptimal edges back to the optimal one. This would create a new tree that is cheaper than our current 2-off tree and more expensive(or equal to) than the best MST. Therefore, the true Second Best MST is just one "swap" away.

Algorithm is brute force all edges $$$(u,v)$$$ that are not in the optimal tree $$$T$$$. Adding $$$(u,v)$$$ to $$$T$$$ creates a cycle. To restore the tree structure remove the maximum weight (excluding $$$(u,v)$$$ itself).The answer is the minimum cost found among all such possible swaps. It's possible that there are no 2nd best.

BonusThese exact same steps can be used to prove Dijkstra's Algorithm as well. Can you form the argument correctly?

The Base Case, Hypothesis and Inductive Step, where we pick the unvisited node $$$v$$$ with the smallest tentative distance. Why is this safe?.

A similar conclusion is reached in the blog Rethink the Dijkstra algorithm -- Let's go deeper , where it is realized that the Greedy argument can be used in creative ways.

II. Basic Problems (with Links)

Random ThoughtAfter struggling and failing to formulate a satisfying argument, it feels very weird to ask for help and hear people in editorials and comments say, "Oh, that's intuitive... it's obvious... it's trivial." I'm left wondering, "How come? Yes it seems intuitive, but on pen and paper, I can't formally proceed".

Usually, their reply is just an AC code link, suggesting that since their code passed, their intuition was right. But I'm not interested in the fact that it's right, I'm interested in how they got there. Proof by AC is not a proof. X_X

Sometimes it's best to leave the problem for your future self, when you have reached a level of maturity where grasping such intuitions is easier. Which, I believe, is lowkey true.

- Kanade's Perfect Multiples,

- Game on Array,

- Stay or Mirror,

- Ticket Hoarding,

- Maximum Running time of N computers,

- Group Increases,

- Xor factorization,

- Divine Tree,

- Tracks in the Snow

- Find the k-sum of an array

- Erasing Vertices 2

- Path and Subsequence

Outro

I hope this blog helps both beginners and intermediate problem solvers.

These are just a few of the interesting Greedy problems I've shared. If you know of other problems with elegant or tricky Greedy arguments, please share them! Also, if you spot any mistakes or if any part of the explanation wasn't clear, don't hesitate to point it out.

Happy Solving! ^_^