Parallel Binary Search — Introduction

Put simply, parallel binary search is performing binary search for multiple queries in parallel while reusing already-built data instead of rebuilding it many times from scratch. The idea is somewhat similar to dynamic programming in that we take advantage of previously computed states.

In problems where we could apply binary search to find the answer for each query independently, we would normally need to perform Q separate searches. This often results in TLE because the data structure or computation must be rebuilt for every query. Parallel binary search helps reduce the complexity by processing queries together.

This is not a new technique, but there are still relatively few detailed articles about it. This post can serve as a reference for those who have not encountered it before.

To make things clearer, let’s start with some example problems.

Example Problem

Suppose we have an array of n elements and a list of Q queries. Each query asks: "What is the smallest index t such that after applying all updates from 1 to t, a certain condition for this query becomes true?" If no such t exists, we should output -1.

A naive approach would be to run binary search for each query separately, rebuilding the data structure for every check. This can easily lead to O(Q × M × log M) complexity, which is too slow.

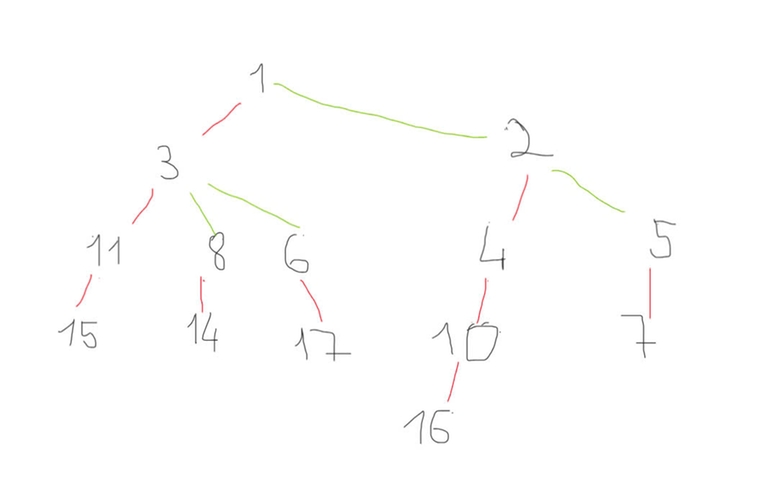

With parallel binary search, we maintain a search range [L[q], R[q]] for each query and group queries by their current midpoint. We process all queries with the same midpoint together, applying updates incrementally. This allows us to reuse computations and avoid unnecessary rebuilding.

Key idea:

- Keep track of queries' binary search intervals.

- Group queries by the middle of their search interval.

- Apply updates in increasing order of t, answering all queries whose midpoint matches the current t.

- Shrink the search interval based on whether the predicate holds.

By repeating this grouping process until all queries are resolved, we reduce complexity to about O((N + Q) × log M) in many cases, making the approach feasible for large inputs.

Deep Dive Analysis — “Ha Noi Road Trip” Problem

For reference, this problem is part of the “Parallel Binary Search” contest in our Codeforces group.

Restating the problem: We have a tree of n cities connected by roads. Each road has:

v– current speed limitc– upgrade costs– upgraded speed limit (strictly greater thanv)

(a, b, m): - We may choose at most one road on the path from

atobto upgrade, provided its cost ≤m. - We must output the maximum possible travel speed between

aandbafter that upgrade (or without upgrading if better).

Naive approach and why it fails

If we process each query independently:

- Find the path from

atob(e.g., using LCA in O(log n)). - Scan edges on that path to check upgrade opportunities within budget.

Key observation

Each query essentially asks: “What is the minimum budget threshold at which the answer improves?” This invites a global offline solution with Parallel Binary Search:

- Sort edges by their upgrade cost

c. - Binary search in parallel across all queries over budget values.

- At each mid-budget, “activate” edges with cost ≤ mid and update our data structure.

- Answer each query with the best path speed, then narrow the search range.

Data structure choice

Since the graph is a tree, we can use:

- Heavy-Light Decomposition (HLD) to map tree edges to segment tree indices.

- A segment tree (or Fenwick tree) maintains the maximum speed per edge.

- When activating an upgrade, we perform:

update(edge) = max(current_speed, upgraded_speed). - To answer a query, query the maximum edge speed along the path from

atob.

Parallel Binary Search workflow

// Initialization: for each query, set L[q] = 1, R[q] = max budget + 1

// Final answer for each query: if L[q] exceeds max budget, only original speeds apply

Complexity analysis

- Each edge is activated O(log M) times across the binary searches → O(n log M) updates.

- Each query does O(log M) path queries → O(Q log M) query operations.

- Each operation (update or query) on HLD + segment tree is O(log n).

Final remarks

This problem elegantly combines Parallel Binary Search with path query data structures (HLD + segment tree). It exemplifies how offline query processing and reusing shared state can dramatically reduce redundant work in competitive programming.

Conclusion

The “Ha Noi Road Trip” problem is a textbook example of how Parallel Binary Search can turn an otherwise intractable query set into something efficient and elegant. By processing all queries offline, grouping them by midpoints, and reusing the same data structure state for multiple queries, we avoid rebuilding from scratch each time.

When combined with Heavy-Light Decomposition and a segment tree, the approach scales to the problem’s high constraints while keeping the implementation structured and modular.

The key takeaway is this: whenever you see multiple independent binary searches over a shared state that is expensive to rebuild, consider running them in parallel. This often transforms the complexity from O(Q × cost_of_state_build) to something much more manageable.

In competitive programming, techniques like this are not just tricks — they are powerful patterns that, once mastered, can be adapted to a wide variety of problems.