Codeforces Round 1097 (Div. 1, Based on Zhili Cup 2026)

4 days

Register now »

Codeforces Round 1097 (Div. 2, Based on Zhili Cup 2026)

4 days

Register now »

| # | User | Rating |

|---|---|---|

| 1 | Benq | 3792 |

| 2 | VivaciousAubergine | 3647 |

| 3 | Kevin114514 | 3603 |

| 4 | jiangly | 3583 |

| 5 | strapple | 3515 |

| 6 | tourist | 3470 |

| 7 | dXqwq | 3436 |

| 8 | Radewoosh | 3415 |

| 9 | Otomachi_Una | 3413 |

| 10 | Um_nik | 3376 |

| # | User | Contrib. |

|---|---|---|

| 1 | Qingyu | 158 |

| 2 | adamant | 152 |

| 3 | Proof_by_QED | 146 |

| 3 | Um_nik | 146 |

| 5 | Dominater069 | 144 |

| 6 | errorgorn | 141 |

| 7 | cry | 139 |

| 8 | YuukiS | 135 |

| 9 | chromate00 | 134 |

| 9 | TheScrasse | 134 |

|

0

Most submissions use under 320, some even close to 300. |

|

+28

|

|

0

I solved Div1D with an alternative network: Replace the DAG with its transitive closure. Now, we can be sure that all paths in the solution are disjoint, except for ones that have a common start. Thus, each vertex is in at most one path where it is not the start. Consider a functional graph of $$$f(u)$$$ where $$$f(u)$$$ is either $$$u$$$ or the predecessor of $$$u$$$ in the only path that contains $$$u$$$ and doesn't start with $$$u$$$, if such path exists. Notice that:

Turns out that any functional graph that fulfills these conditions is a correct solution. With this perspective, it is simple to model with MCMF. |

|

+18

A really cool advanced application of this is AMPPZ 2023 E. Hint Consider cells adjacent to the path, below the path and above the path. For each cell create two vertices $$$A$$$ and $$$B$$$. Model the problem with an s-t cut such that $$$(A, B)$$$ are in $$$(S, S)$$$ if the cell is above the path, $$$(T, T)$$$ if below the path and $$$(S, T)$$$ if adjacent to the path. |

|

+22

Alternative DP solution to F: The problem choose the largest connected set of edges that has exactly $$$k$$$ leaves and contains the root can be solved with DP. Let $$$\mathrm{dp}_{i, j}$$$ be the answer for the subtree rooted at $$$i$$$, when taking exactly $$$j$$$ leaves. The transitions are straightforward — same as in knapsack. This gives us a $$$\mathcal{O}(n^2)$$$ solution. Notice that the sequence $$$(\mathrm{dp}_{i, 0}, \mathrm{dp}_{i, 1}, \dots)$$$ is always concave for a fixed $$$i$$$. This can be easily proven with induction — the sequence in leaves is concave, and the max-plus convolution of two concave sequences is also concave. This gives us a faster solution. Instead of storing the DP directly, store the adjacent differences (they will always be sorted in non-increasing order). Then, we can use small-to-large merging, which gives us $$$\mathcal{O}(n \log^2 n)$$$ complexity. |

|

0

Alternative solution to F using Aliens trick and CHT: Let $$$b_1, \dots, b_m$$$ be the leaves of the tree in DFS order. We want to select a subsequence of size at most $$$k + 1$$$ to minimize $ where $$$a_1, \dots, a_{k + 1}$$$ is the chosen subsequence. Why? First of all, let's consider ending the path as another jump to the root. Let's count the contribution of each edge to the answer. Let $$$x$$$ be the number of selected leaves in the subtree of $$$u$$$. Notice that if $$$x = 0$$$ the edge between $$$u$$$ and $$$p_u$$$ will be traversed 2 times. Otherwise it will be traversed $$$x$$$ times. This simplifies into the above formula. This can be calculated with dp. After guessing that the answer is convex for increasing $$$k$$$, we can apply Aliens trick. This reduces the complexity from $$$\mathcal{O}(n^2k)$$$ to $$$\mathcal{O}(n^2 \log n)$$$. Let's now notice that $$$d(\mathrm{lca}(b_i, b_k)) \leq d(\mathrm{lca}(b_j, b_k))$$$ for $$$i \leq j \leq k$$$. This property allows us to apply CHT, and to find intersections we can use binary search. This makes the final complexity $$$\mathcal{O}(n \log^2 n)$$$, which is fast enough. |

|

+8

Here is my implementation of persistent DSU: For each set, store its elements. Instead of storing the parent of each element store a vector of pairs $$$(time, parent)$$$. Since we want the length of the path from each element to its root to always be 1, when merging two sets update the parent of each element in the smaller set. This results in an amortized space and time complexity of $$$O(n \log n)$$$. Proof of complexity Let's count the contribution to the sum of each element. When a parent of an element is changed, the size of its set is at least doubled. Since the size of each set is bounded by $$$n$$$, an element can only change $$$\log n$$$ times, resulting in $$$O(n \log n)$$$ complexity. To get the root of an element at time $$$t$$$ we can simply binary search to find the last $$$(time, parent)$$$ entry with $$$time \le t$$$. |

|

+16

G can be solved online using persistent DSU, which also allows us to solve the problem of finding the minimum energy needed to go from $$$a$$$ to $$$b$$$ in $$$O(\log n)$$$. |

|

+10

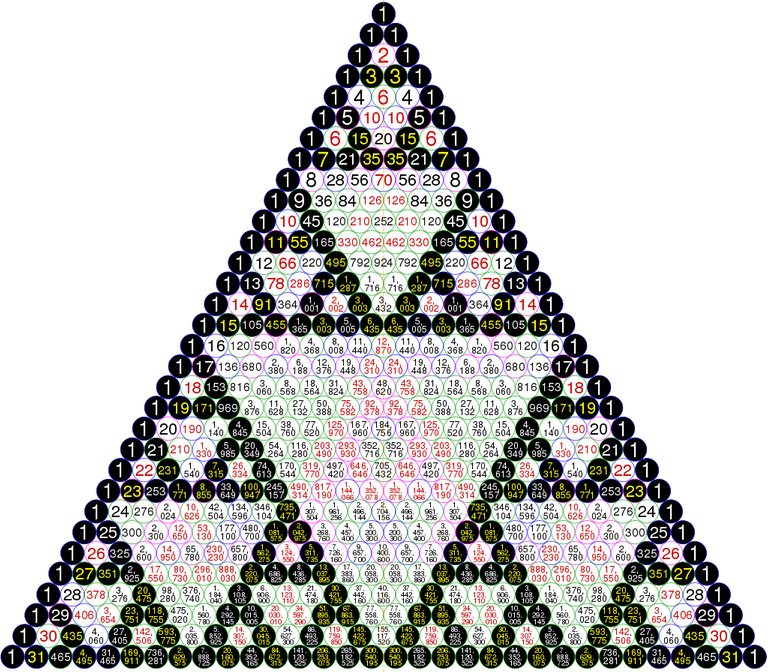

Alternative solution to F using SOS DP: Notice that the value of $$$a_0$$$ after $$$k$$$ operations is the xor of all $$$a_i$$$ such that $$$\binom{k}{i}$$$ is odd. Why? Let's consider a binary mask $$$b$$$, where $$$a_0$$$ is the xor of all $$$a_i$$$ such that the $$$i$$$-th bit in $$$b$$$ is set. Initially $$$b=1$$$. An operation changes $$$b$$$ in the following way: $$$b \leftarrow b \oplus 2b$$$. This is equivalent to moving a row down in a Pascal's triangle that only considers parities.

Lucas's theorem states that $$$\binom{p}{q}$$$ is odd if and only if $$$q$$$ is a submask of $$$p$$$. Thus, the value of $$$a_0$$$ after $$$k$$$ operations is the xor of all $$$a_i$$$ such that $$$i$$$ is a submask of $$$k$$$. This can be computed in $$$O(n \log n)$$$ using SOS DP. We know that after $$$n$$$ operations all $$$a_i$$$ become zero, so the answer is the first moment that $$$a_0$$$ becomes zero and stays zero. |

|

0

|

| Name |

|---|