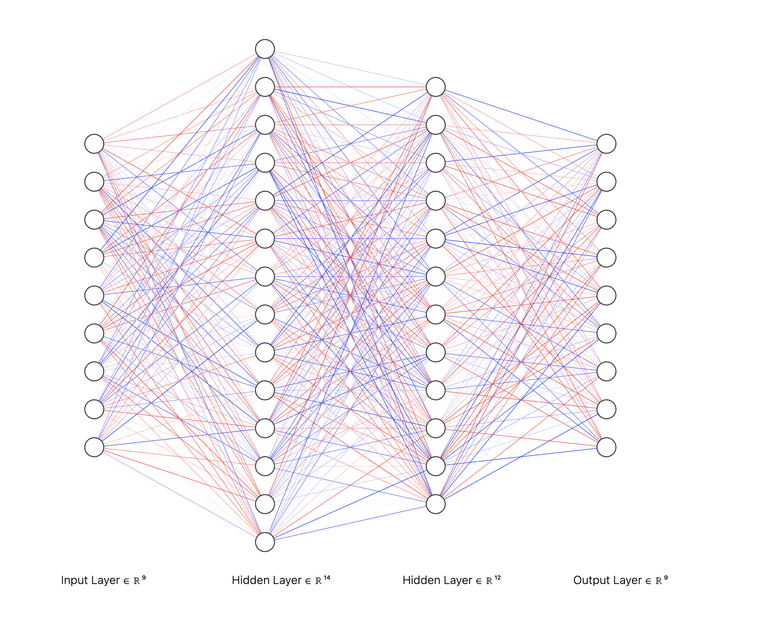

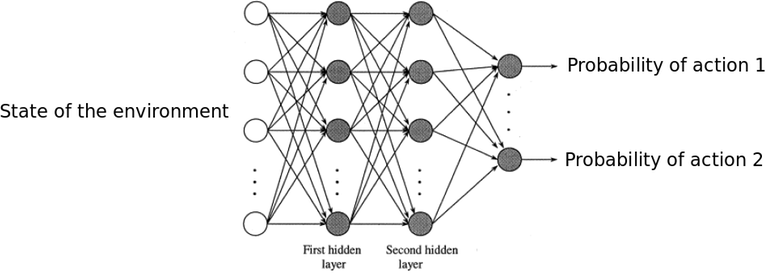

If we build an interval tree as shown in the following picture, we can get super effective RMQ data structure as good as iterative segment tree. I have benchmarked against Fenwick RMQ and it turns out this is much much better time complexity wise than log square range decomposition. All queries take 3 logn at most because the search interval is halved every third step in worst case once we determine the offshoot segment which exceeds our leftmost point l.

https://cses.fi/problemset/task/1649/

Of course this uses a lot of arrays but if you are quite good in the skewed number system, you could compute the length and parent on the fly just like the Fenwick data structure.

To summarize, we are just doing the decomposition as shown in the diagram above and it turns out to dissect a range into subsegments, we just need around 3 logn node values.

Hence we have a super easy RMQ. Lazy propagation should be easy as we already have the required information len and parent is enough. Each node has maximum three children, the leaf node itself and the equal sized intervals.

What do you think? Is this interesting enough for you or were you already familiar with this esoteric structure.